Simulcast is a core WebRTC technique used by clients to encode a single video source into multiple streams with different resolutions and bitrates simultaneously. These distinct streams are transmitted to the SFU (Selective Forwarding Unit), which dynamically routes the most appropriate stream to each participant based on their specific network conditions, desired layout and device capabilities. By offloading stream selection to the server, simulcast enhances flexibility and scalability in multi-party video conferencing.

💡 See also: Simulcast WebRTC, for a fuller picture of this technology in the WebRTC context.

Simulcast is closely related to SVC, where a single encoded video stream is layered and each participant receives only the layers that they can process.

What is simulcast

At its core, simulcast is a technique used in WebRTC in which the WebRTC client device takes a given video source, usually a webcam, encodes it multiple times simultaneously, generating several different video streams, each with its own bitrate and resolution.

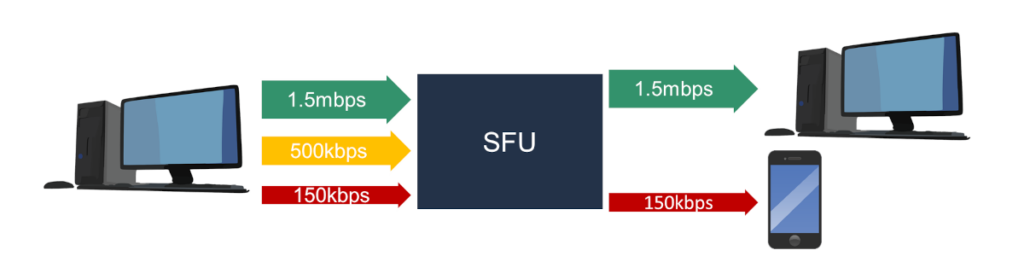

All of the generated simulcast video streams are then sent to a WebRTC media server, usually referred to as an SFU. The media server then decides how to route the received streams – which participants in the session need to receive which of the simulcast variants. That decision is based on many different parameters which are application specific in nature.

How simulcast works in WebRTC

In a simulcast scenario, the originating device, which is broadcasting data, sends multiple media streams towards what is known as the Selective Forwarding Unit (SFU). This means that each device will encode and transmit several media streams, each with varying levels of quality.

Typically, there are two or three streams sent to the server, each with a different bitrate and consequently, different resolutions, frame rates, and overall quality.

For the client device, this has an added cost in both CPU (the need to encode multiple separate streams) and network (the need to send to the SFU multiple separate streams).
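As a rough illustration of that cost, the sketch below builds a three-stream configuration and adds up the worst-case uplink. The `rid`, `scaleResolutionDownBy` and `maxBitrate` fields mirror the WebRTC encoding parameters, but the interface is a local stand-in, and the function names and bitrate values are assumptions chosen for the example rather than recommendations.

```typescript
// Local stand-in for the subset of RTCRtpEncodingParameters used here,
// so the sketch compiles without browser (DOM) typings.
interface SimulcastEncoding {
  rid: string;                   // stream identifier, e.g. "q", "h", "f"
  scaleResolutionDownBy: number; // 1 = full capture resolution
  maxBitrate: number;            // bits per second
}

// Illustrative three-stream ladder; the bitrates are assumed values.
function buildSimulcastEncodings(): SimulcastEncoding[] {
  return [
    { rid: "q", scaleResolutionDownBy: 4, maxBitrate: 150000 },
    { rid: "h", scaleResolutionDownBy: 2, maxBitrate: 500000 },
    { rid: "f", scaleResolutionDownBy: 1, maxBitrate: 1500000 },
  ];
}

// Worst-case uplink: the sender may transmit every stream at the same time.
function totalUplinkBps(encodings: SimulcastEncoding[]): number {
  return encodings.reduce((sum, e) => sum + e.maxBitrate, 0);
}

// In a browser, the encodings would be handed to the peer connection, e.g.:
//   pc.addTransceiver(track, {
//     direction: "sendonly",
//     sendEncodings: buildSimulcastEncodings(),
//   });
```

With these assumed numbers, the sender has to budget for roughly 2.15 Mbps of uplink instead of the 1.5 Mbps a single stream would need.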

For the SFU media server, this gives flexibility and options in which stream to send to which participant device. That decision is usually based on several different parameters:

- The bandwidth available on the downlink to the viewer, decided through bandwidth estimation

- The CPU load on the viewer’s device

- Display window on the viewer’s device (you don’t want to waste resources and send a 1080p resolution video stream just to get it displayed on a tiny QVGA window)

- Prioritization versus other video streams sent to the viewer’s device
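The first and third parameters above can be combined into a very small selection routine. This is only a sketch of one possible policy, not how any particular SFU works: the types, the `selectLayer` name and the rule "upgrade while the stream fits the downlink and the current one is smaller than the display window" are all assumptions made for illustration. It also assumes that higher bitrate streams have higher resolutions.

```typescript
interface AvailableLayer {
  rid: string;
  height: number;     // encoded frame height in pixels
  bitrateBps: number; // current bitrate of this simulcast stream
}

interface ViewerState {
  downlinkBps: number;   // result of bandwidth estimation
  displayHeight: number; // height of the window the video is rendered in
}

// Picks the highest stream that fits the viewer's downlink without going
// beyond what the display window needs. The lowest stream is the fallback.
function selectLayer(layers: AvailableLayer[], viewer: ViewerState): AvailableLayer {
  const sorted = layers.slice().sort((a, b) => a.bitrateBps - b.bitrateBps);
  let chosen: AvailableLayer | undefined;
  for (const layer of sorted) {
    if (chosen === undefined) {
      chosen = layer; // always start from the lowest stream
      continue;
    }
    if (layer.bitrateBps > viewer.downlinkBps) break;  // would not fit the downlink
    if (chosen.height >= viewer.displayHeight) break;  // already large enough
    chosen = layer;
  }
  if (chosen === undefined) throw new Error("no layers available");
  return chosen;
}
```

A real implementation would also weigh CPU load and priorities against other streams, and would add hysteresis so that viewers do not flip between streams on every bandwidth estimate.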

With certain video codecs, where temporal scalability is available, it can be used in conjunction with simulcast to gain extra flexibility and virtual bitrate targets: a 30fps bitstream sent to the SFU is in effect 2 potential bitstreams – the original 30fps one and a reduced 15fps one.
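The arithmetic behind those "virtual" targets can be sketched as follows, assuming a dyadic temporal structure in which every additional temporal layer doubles the frame rate. The function names are made up for this example.

```typescript
// Frame rate obtained when only temporal layers 0..maxTemporalId are forwarded.
// Assumption: each extra temporal layer doubles the frame rate.
function frameRateAt(fullFps: number, temporalLayers: number, maxTemporalId: number): number {
  if (maxTemporalId < 0 || maxTemporalId >= temporalLayers) {
    throw new RangeError("maxTemporalId out of range");
  }
  return fullFps / Math.pow(2, temporalLayers - 1 - maxTemporalId);
}

// All frame rates the SFU can offer from a single encoded stream.
function virtualFrameRates(fullFps: number, temporalLayers: number): number[] {
  const rates: number[] = [];
  for (let tid = 0; tid < temporalLayers; tid++) {
    rates.push(frameRateAt(fullFps, temporalLayers, tid));
  }
  return rates;
}

// virtualFrameRates(30, 2) yields [15, 30]: the two bitstreams described above.
```

Applied to three simulcast streams with two temporal layers each, this gives the SFU six operating points to choose from instead of three.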

The role of the SFU

The SFU, or media server, plays a crucial role in the simulcast process:

The SFU receives from the various participants in a meeting their video streams. Each participant can send multiple streams – these streams have the same content in different bitrates (=quality). The SFU is now responsible for deciding which stream to send to each participant in the call.

The SFU might choose to send the highest bitrate stream, say 1.5 megabits per second, offering the best stream quality available. Alternatively, it could opt to send a lower quality stream if that’s more appropriate.

In many ways, the SFU is the brains of the WebRTC session, making important decisions that directly affect the perceived media quality and user experience of the session.

Simulcast vs SVC

Both simulcast and SVC (Scalable Video Coding) solve the same problem in WebRTC: how to send video to multiple participants who have different network speeds or hardware capabilities, without forcing the strongest connection to drop to the level of the weakest and without forcing the use of transcoding on the infrastructure side.

When SVC is the chosen pattern, it is configured through the SVC scalability mode. Simulcast and SVC can also be combined: a sender configures a temporal-only SVC mode such as L1T3 on each encoding while running simulcast across separate SSRCs.
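A combined configuration might look like the sketch below. The `scalabilityMode` field follows the WebRTC SVC extension, but the interface is a local stand-in and `withTemporalLayers` is a hypothetical helper. It assumes the browser and codec in use actually support the requested mode.

```typescript
// Local stand-in for the encoding parameters used here (no DOM typings needed).
interface LayeredEncoding {
  rid: string;
  scaleResolutionDownBy: number;
  scalabilityMode: string; // e.g. "L1T3": one spatial layer, three temporal layers
}

// Builds one simulcast encoding per rid, each with the same temporal-only mode.
// rids are ordered from lowest to highest quality; each step doubles the resolution.
function withTemporalLayers(rids: string[], mode: string): LayeredEncoding[] {
  return rids.map((rid, i) => ({
    rid,
    scaleResolutionDownBy: Math.pow(2, rids.length - 1 - i),
    scalabilityMode: mode,
  }));
}

// In a browser, the result would be passed as sendEncodings, e.g.:
//   pc.addTransceiver(track, {
//     direction: "sendonly",
//     sendEncodings: withTemporalLayers(["q", "h", "f"], "L1T3"),
//   });
```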

The core difference between SVC and simulcast?

- Simulcast is essentially a “brute force” approach where the sender’s browser encodes and transmits multiple completely independent versions of the same video stream (e.g., 1080p, 720p, and 360p) simultaneously. The SFU then simply picks which version to forward to each participant

- SVC is a more elegant, layered approach. It sends a single stream composed of a “base layer” (low quality) and several “enhancement layers”. If a viewer has a great connection, the SFU sends all layers; if their connection drops, the SFU just stops sending some or all of the enhancement layers, leaving the base layer intact
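Seen from the SFU, the two approaches reduce to two different filters. The sketch below is deliberately simplified: the packet shapes and function names are assumptions, and a real SFU also has to wait for keyframes when switching, respect the codec's layer dependencies and rewrite RTP sequence numbers.

```typescript
interface SimulcastPacket {
  rid: string; // which independent stream the packet belongs to
  payload: string;
}

interface SvcPacket {
  spatialId: number;  // 0 = base layer
  temporalId: number; // 0 = base layer
  payload: string;
}

// Simulcast: forward only the packets of the one stream chosen for this viewer.
function forwardSimulcast(packets: SimulcastPacket[], chosenRid: string): SimulcastPacket[] {
  return packets.filter((p) => p.rid === chosenRid);
}

// SVC: forward the base layer plus every enhancement layer up to the target.
function forwardSvc(packets: SvcPacket[], maxSpatialId: number, maxTemporalId: number): SvcPacket[] {
  return packets.filter(
    (p) => p.spatialId <= maxSpatialId && p.temporalId <= maxTemporalId
  );
}
```

Calling `forwardSvc(packets, 0, 0)` keeps only the base layer, which is what a viewer on a poor connection would receive.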

While SVC is technically superior and more bandwidth-efficient, simulcast remains the industry workhorse because it works reliably across almost all browsers and devices using the standard H.264 codec as well as all other video codecs.

Below is a quick comparison table between simulcast and SVC:

| Feature | Simulcast | SVC (Scalable Video Coding) |
|---|---|---|
| Stream structure | Multiple independent streams at different bitrates | One multi-layered stream |
| CPU usage (sender) | High: The device must encode the video 2 or 3 separate times | Medium/High: Encoding layers is complex, but often more efficient than multiple encodes |
| Bandwidth (sender) | Higher: Sending redundant data across different streams | Lower: More efficient as layers build upon each other rather than duplicating data |
| Support | Broadly supported across all browsers and video codecs | Growing but limited (largely dependent on VP9 or AV1 codecs) |
| SFU Complexity | Lower: The server just acts as a traffic cop, switching between streams | Higher: The server must understand the layer dependency to drop packets correctly |

It is also important to note that in many cases, the hardware-accelerated encoders available on devices do not implement SVC. The same devices may still support regular hardware encoding of the same video codecs, which can often be used for simulcast.

When to use simulcast

Simulcast is your best friend when you are building WebRTC services that support multiple participants. That’s because participant devices and their networks are asymmetric in nature, and each participant may be using a different layout, which makes it more important to have multiple bitrates available per video stream.

Such scenarios include:

- Multi-party video conferencing applications

- Live streaming and broadcasting services

If you were to send a single high-definition stream to a room of ten people, and one person joined via a spotty 3G connection, the entire conference might “lag” or downscale for everyone to accommodate that one weak link. Simulcast prevents this “lowest common denominator” problem.

That said, when using simulcast, there are times when some of the simulcast streams sent to the SFU are not routed to any participant. In such cases, a sensible optimization might be to disable the specific simulcast stream temporarily and not have it sent to the SFU in the first place. This can save precious bandwidth and CPU resources from both the client device and the SFU server.
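One way to sketch this optimization on the client is to flip the `active` flag of each encoding according to what viewers currently need. How the client learns which streams are in use is application specific (typically a message from the SFU), so the `ridsInUse` input and the `applyLayerDemand` helper are assumptions made for this example.

```typescript
// Local stand-in for the encoding fields touched here.
interface ToggleableEncoding {
  rid: string;
  active: boolean;
}

// Returns a copy in which only the streams some viewer receives stay active.
function applyLayerDemand(
  encodings: ToggleableEncoding[],
  ridsInUse: string[]
): ToggleableEncoding[] {
  return encodings.map((e) => ({
    ...e,
    active: ridsInUse.indexOf(e.rid) !== -1,
  }));
}

// In a browser, the same idea is applied through the sender's parameters:
//   const params = sender.getParameters();
//   params.encodings.forEach((e) => {
//     e.active = ridsInUse.includes(e.rid ?? "");
//   });
//   await sender.setParameters(params);
```

When a viewer later needs a disabled stream again, the same call with an updated `ridsInUse` list turns it back on.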

When NOT to use simulcast

In 1:1 calls, where there are only two participants, simulcast isn’t advisable. It won’t improve quality – it will do the exact opposite. Since the receiving side in a 1:1 session needs only a single video stream, it is better to just encode and send that single video stream to begin with.

It is also important to remember that the use of simulcast increases the CPU use and network requirements on the uplink of the sender’s device. In low end devices and networks, it might be better to not use simulcast at all, depending on the exact scenario and requirements.

Decision-making in simulcast

When using simulcast, quite a few decisions need to be made, some in advance and some dynamically as the session progresses.

On the SFU

The criteria the SFU uses to make these decisions can vary greatly depending on the implementation. Factors such as the available bitrate on both the sender and receiver ends, the capabilities of the devices involved, the current network quality, the layout on the screen, what is being shown, and the priorities set within the application all play a part in this decision-making process.

An important part of this work is handling BWA – Bandwidth Allocation.
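To give a feel for what bandwidth allocation involves, here is a minimal greedy sketch: every incoming video stream first gets its lowest simulcast stream, and whatever is left of the viewer's estimated downlink is spent on upgrades in priority order. The types, names and the greedy policy itself are assumptions for illustration; production SFUs use their own, usually more elaborate, strategies.

```typescript
interface StreamOffer {
  id: string;
  priority: number;           // higher = more important, e.g. the active speaker
  layerBitratesBps: number[]; // ascending, one entry per simulcast stream
}

// Returns the chosen stream index per id; -1 means the video is paused.
function allocateBandwidth(streams: StreamOffer[], budgetBps: number): Record<string, number> {
  const byPriority = streams.slice().sort((a, b) => b.priority - a.priority);
  const choice: Record<string, number> = {};
  let remaining = budgetBps;

  // Pass 1: give every video its lowest stream, most important first.
  for (const s of byPriority) {
    const base = s.layerBitratesBps[0];
    if (base !== undefined && base <= remaining) {
      choice[s.id] = 0;
      remaining -= base;
    } else {
      choice[s.id] = -1;
    }
  }

  // Pass 2: spend what is left on upgrades, most important first.
  for (const s of byPriority) {
    let idx = choice[s.id];
    if (idx === undefined || idx < 0) continue;
    while (idx + 1 < s.layerBitratesBps.length) {
      const current = s.layerBitratesBps[idx];
      const next = s.layerBitratesBps[idx + 1];
      if (current === undefined || next === undefined) break;
      const extra = next - current;
      if (extra > remaining) break;
      remaining -= extra;
      idx += 1;
    }
    choice[s.id] = idx;
  }
  return choice;
}
```

With two videos offering 150 kbps, 500 kbps and 1.5 Mbps streams and a 2 Mbps budget, the higher priority video ends up on its top stream and the other on the middle one.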

On the client

The bitrate allocated to each simulcast video stream dictates the quality of that stream as well as its resolution and frame rate. Keeping a factor of two between the resolutions of one stream and the next increases the chance of reducing encoder CPU load.

When streams aren’t displayed to any other participant, they can be disabled on the client side so that they are not sent (or even encoded) at all.

Learn more about simulcast

Simulcast is a complex but fascinating feature of WebRTC that caters to the dynamic needs of multi-party communication. For those interested in diving deeper into this topic or other terms related to WebRTC, resources like our WebRTC Glossary offer a wealth of information.

Additionally, for a more structured learning path, courses are available that can provide further insight into simulcast and other advanced WebRTC functionalities.

Simulcast FAQ

**What is the difference between simulcast and SVC?**

Simulcast and SVC solve the same problem but do so in slightly different ways.

Simulcast uses multiple distinct video streams whereas SVC uses a single video stream that is built out of layers – you can peel off layers, reducing bitrate and media quality with each peeled layer – but still have a decodable and usable video stream.

**Do I need to use simulcast in 1:1 calls?**

No. In 1:1 calls, simulcast is not advisable at all.

Using simulcast in 1:1 just increases CPU and network requirements and adds no media quality or any other advantage for that matter.

**Does Chrome support simulcast?**

Yes.

Chrome, as well as all other modern web browsers today, supports simulcast.

**How does simulcast reduce bandwidth?**

Simulcast doesn’t directly reduce bitrate. It enables media servers to offer better media quality and user experience to WebRTC sessions without increasing the CPU requirements on the infrastructure. This leads to better scalability of SFUs and services at reasonable costs.

The bandwidth saving associated with simulcast comes from the SFU being able to send each participant the specific video stream that suits them, so that the bandwidth the system does use goes towards improving the user experience for the participants.