Security is... complex. Even with WebRTC.

I've always been one to praise the security measures placed in WebRTC.

While WebRTC is a secure protocol by nature, browsers seem to take different approaches to who should be responsible for any additional security measures.

The gist of it:

- WebRTC is secure by default

- Whenever a developer's mistake can be thwarted by tweaking WebRTC - it gets tweaked

- Whenever a security hole is found, it gets fixed and deployed by the browser vendors faster than most other companies in the industry can even perceive the notion of a threat

Seriously - what's not to like?

Recently though, I started thinking about it. How do browser vendors think about security? How much do they take it upon themselves to be the guardians of their users? Their trusted guide in the big bad world that is the Internet?

Which brings me to the big one -

Are browser vendors responsible for the actions of their users when it comes to WebRTC?

It seems that they have different approaches and concepts to this one.

Google Chrome

Motto: Users are stupid and should be protected

That's how I'd put their mindset to words.

getUserMedia

Chrome has long been one to clamp down on where and when WebRTC can be used.

They started off with voice and video working on both HTTP and HTTPS. On HTTP, though, a grant of camera and microphone access was not remembered, so the user had to approve it each and every time.

They shifted towards HTTPS only. You can't access the microphone or the camera in an HTTP page.
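If you want your web app to explain the problem instead of failing quietly on an HTTP page, a check along these lines can run before you ask for the camera. This is only a sketch: `isLikelySecureOrigin` is a hypothetical helper, not a browser API, and the loopback exception for local development is an assumption about how Chrome treats such origins. In a real page, the browser's own `window.isSecureContext` flag is the authoritative answer.

```typescript
// Hypothetical helper (not a browser API): guesses whether a page origin is
// one where Chrome would allow camera/microphone requests.
// Assumption: HTTPS pages qualify, and so do plain-HTTP loopback hosts
// that are typically used during local development.
function isLikelySecureOrigin(protocol: string, hostname: string): boolean {
  if (protocol === "https:") {
    return true;
  }
  const loopbackHosts = ["localhost", "127.0.0.1"];
  return protocol === "http:" && loopbackHosts.indexOf(hostname) !== -1;
}

// In a browser you would pass location.protocol and location.hostname,
// or simply rely on window.isSecureContext instead of this helper.
const productionOverHttp = isLikelySecureOrigin("http:", "example.com");
const productionOverHttps = isLikelySecureOrigin("https:", "example.com");
```

When the check comes back false, the friendlier move is to redirect to the HTTPS version of the page rather than call getUserMedia and let it fail.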

Persistence

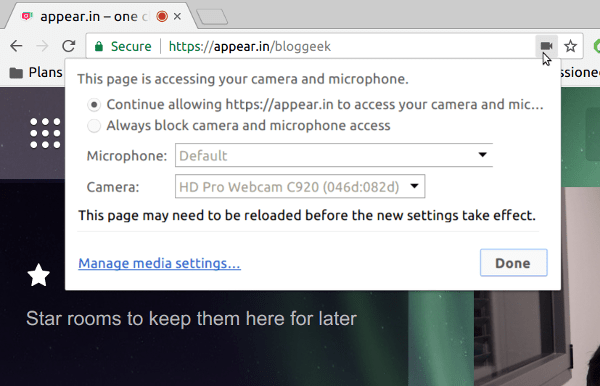

The decision a user made is persistent. If you granted a domain access to your microphone or camera - Chrome remembers it - for eternity. Your only way of revoking that is by clicking the camera icon on the address bar (if you can even notice it).

Oh, and for persistency - Chrome offers you two choices:

- Ask when there's a need (and Chrome will remember the answer for that domain for you)

- Never ever share your device

No middle-ground here.
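Since the remembered answer decides whether the user sees a prompt at all, it can help to look at it before calling getUserMedia. The sketch below maps a permission state to what the app might do next. The `"granted"`, `"denied"` and `"prompt"` values are the states the Permissions API reports; `nextStepFor` and its return values are hypothetical names made up for this illustration.

```typescript
// The three states reported by the Permissions API.
type MediaPermissionState = "granted" | "denied" | "prompt";

// Hypothetical labels for what the app could do in each case.
type NextStep = "call-getUserMedia" | "explain-then-ask" | "show-unblock-help";

function nextStepFor(state: MediaPermissionState): NextStep {
  if (state === "granted") {
    // The browser remembered an earlier "allow": no prompt will be shown.
    return "call-getUserMedia";
  }
  if (state === "prompt") {
    // The user will be asked, so tell them why before the prompt appears.
    return "explain-then-ask";
  }
  // "denied": the browser remembered a "block", and getUserMedia will fail
  // until the user changes the setting themselves.
  return "show-unblock-help";
}

// In Chrome, the state can be read with
//   navigator.permissions.query({ name: "camera" })
// which resolves to an object with a `state` field. Assumption: other
// browsers may not recognize the "camera" name, so guard that call.
```

The "show-unblock-help" branch is the one worth spending time on, given how hard that camera icon is to notice.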

Screen sharing

You can share your screen with Chrome.

But it will ask the user for permission each time.

And to enable screen sharing, you will first need to create a Chrome Extension for your web app and have the user install it. Not a biggie, but a hurdle.
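For the curious, here is roughly what the web page side of that dance looks like. The extension calls `chrome.desktopCapture.chooseDesktopMedia`, the user picks a screen or window, and the resulting source id is handed to the page, which passes it to getUserMedia using Chrome-specific constraints. The sketch below only builds those constraints. `buildChromeScreenConstraints` and the default 1920x1080 cap are assumptions for illustration, and how the id travels from the extension to the page (message passing) is left out.

```typescript
// Shape of the legacy, Chrome-specific constraints used together with a
// screen sharing extension.
interface ChromeScreenConstraints {
  audio: false;
  video: {
    mandatory: {
      chromeMediaSource: "desktop";
      chromeMediaSourceId: string;
      maxWidth: number;
      maxHeight: number;
    };
  };
}

// Hypothetical helper: turns the source id obtained by the extension into
// constraints for getUserMedia. The resolution cap is an arbitrary default.
function buildChromeScreenConstraints(
  sourceId: string,
  maxWidth: number = 1920,
  maxHeight: number = 1080
): ChromeScreenConstraints {
  if (sourceId.length === 0) {
    // No id means the user cancelled the picker or the extension is missing.
    throw new Error("No screen source id: is the extension installed?");
  }
  return {
    audio: false,
    video: {
      mandatory: {
        chromeMediaSource: "desktop",
        chromeMediaSourceId: sourceId,
        maxWidth: maxWidth,
        maxHeight: maxHeight,
      },
    },
  };
}
```

The page would then hand the result to `navigator.mediaDevices.getUserMedia`. Without an id from the extension there is nothing to build, which is exactly the hurdle described above.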

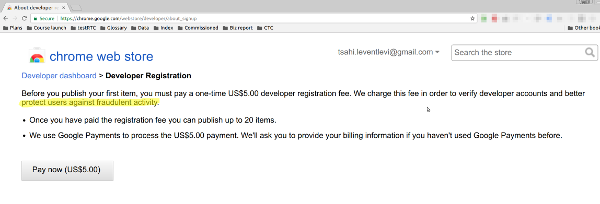

Now, to publish a Chrome Extension on the Chrome Web Store, you'll need to pay a small $5 fee.

Why? Fraud - obviously:

You see, screen sharing is considered by Google (and most other browsers) as more of a security threat than camera and microphone access.

By forcing the Chrome Extension, Google raises the bar against abuse, and can theoretically remove any abusive accounts and extensions with better traceability to their source.

The only real downside of it? I have over 10 icons on my toolbar now in Chrome, and most of them are for screen sharing on different services. Once a month I remove a few of them to declutter my browser. Yuck.

One minor nitpick here - Google Hangouts and Google Meet are both exempt from the need of an extension. That's probably what happens when you live inside the mothership.

Mozilla Firefox

Motto: Users are intelligent

Maybe. But not all of humanity. Or even the billion or two that use browsers.

getUserMedia

In Firefox, getUserMedia will work in HTTP.

Not sure if persistence can be configured for Firefox for HTTP websites. I guess it is akin to herd immunity in vaccination. Since Chrome is THE browser, developers make sure their WebRTC service works on Chrome (let's call it Chrome first?) so their service starts by running only on HTTPS anyway.

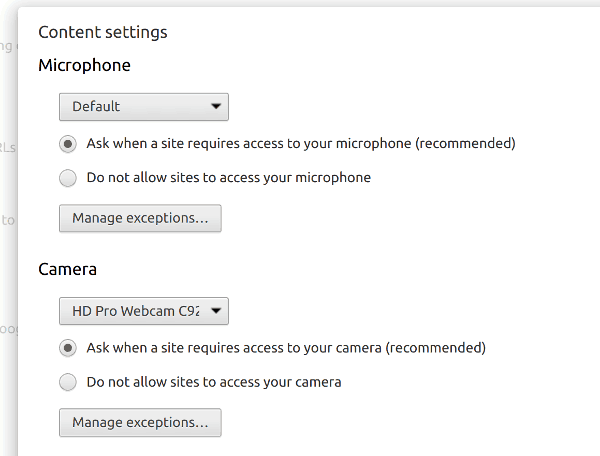

Persistence

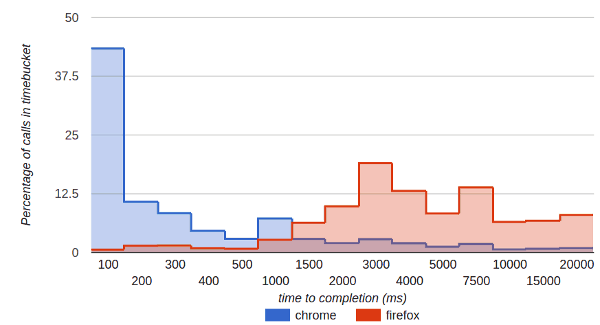

Anyways, Philipp Hancke wrote a great post about getUserMedia and timing with browsers. Here's how timing looks for appear.in from the moment getUserMedia is called and until it is completed:

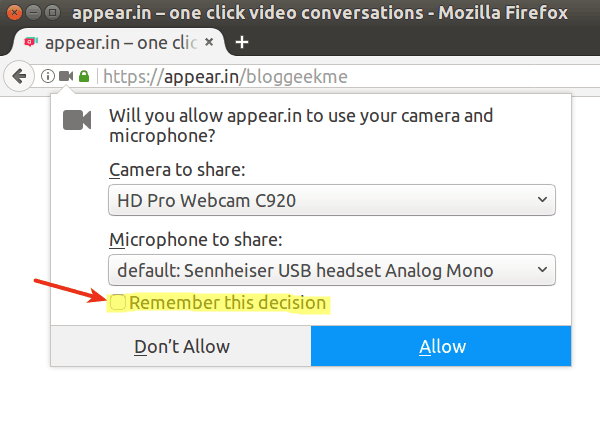

Firefox tends to take longer to complete its getUserMedia calls. Philipp attributes it to this little UI design in Firefox:

In Firefox, if you want the decision (allow/disallow) to be persisted, you need to opt in for it. And for appear.in, most people don't opt in.

This is great, especially for the Don't Allow option (it is quite a hassle to remove that restriction from Chrome once you decided not to allow such access in a session).
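If you want to see how long getUserMedia takes on your own service, from the call until the promise settles, a small wrapper is enough. This is a sketch and not how Philipp collected his numbers: `timeCall` is a hypothetical helper, and the clock is passed in as a parameter so the wrapper can be tried without a browser.

```typescript
// Hypothetical helper: measures how long an async call takes to resolve.
// `call` is whatever you want to time; `now` is a clock returning
// milliseconds (Date.now by default).
async function timeCall<T>(
  call: () => Promise<T>,
  now: () => number = () => Date.now()
): Promise<{ result: T; elapsedMs: number }> {
  const start = now();
  // For getUserMedia, this wait includes the time the user spends
  // reading and answering the permission prompt.
  const result = await call();
  return { result: result, elapsedMs: now() - start };
}

// In a browser (assumed usage):
//   timeCall(() => navigator.mediaDevices.getUserMedia({ audio: true, video: true }))
//     .then(({ elapsedMs }) => console.log("getUserMedia took", elapsedMs, "ms"));
```

A rejected call (the user clicked Don't Allow) rejects the wrapper too, so time those separately if you care about how long people take to say no.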

Screen sharing

For screen sharing, Firefox used to have a whitelist of domains you had to register on to get screen sharing to work.

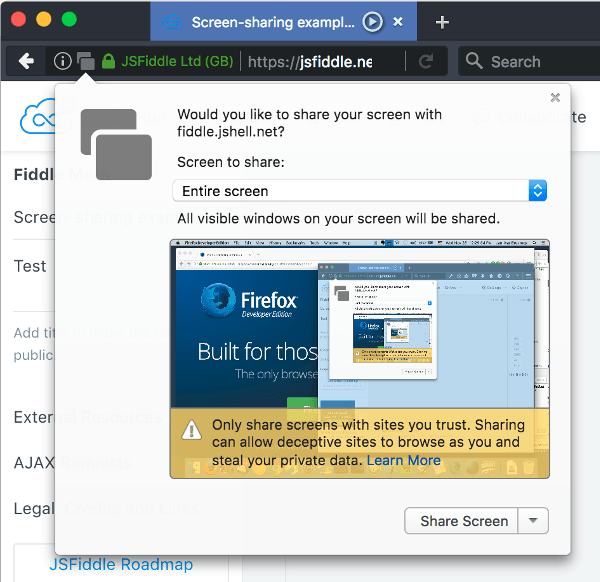

From Firefox 52, this restriction has been removed. Mozilla wrote a post about it, explaining to their millions of users around the world about the dangers.
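With the whitelist gone, asking for the screen in Firefox needs no extension at all: the page sets a Firefox-specific `mediaSource` value in its video constraints and calls getUserMedia. Here is a minimal sketch of those constraints. `buildFirefoxScreenConstraints` is a hypothetical helper, and only the `"screen"` and `"window"` sources are covered.

```typescript
// Sources covered by this sketch; mediaSource is a Firefox-specific
// constraint, not part of what Chrome understands.
type FirefoxMediaSource = "screen" | "window";

interface FirefoxScreenConstraints {
  audio: false;
  video: { mediaSource: FirefoxMediaSource };
}

// Hypothetical helper: builds the constraints a page would pass to
// getUserMedia to share a whole screen (default) or a single window.
function buildFirefoxScreenConstraints(
  source: FirefoxMediaSource = "screen"
): FirefoxScreenConstraints {
  return {
    audio: false,
    video: { mediaSource: source },
  };
}
```

Any page can now build this object, which is why Firefox leans so heavily on its warning to the user.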

I am not sure about you, but I've learned early on as a developer catering to developers that other developers are stupid (if you are a developer, then I am sorry, but bear with me - and read this one while you're at it). So when I wrote code for developers, I made sure that if they screw things up, we crash spectacularly. The reasoning was, the sooner we crash the faster our customers (who are developers) will fix their bugs - and do that during development - so they won't get into deadlocks or weird crashes in production that are way harder to find. These were the good old days of C programming.

Now... if developers are stupid, then how much can mere users be expected to understand about security and threats?

In Firefox, they need to read and understand that yellowish warning when all they want to do is share their screen now - after all - people are waiting for them to do so in the session already.

With such a warning... I am not sure I am going to be in a trusting mood no matter the site.

While I mostly prefer Firefox's approach for getUserMedia permissions, I think Chrome does a better job at screen sharing with the extensions mechanism.

Microsoft Edge

Microsoft Edge has started to support WebRTC (finally).

I am in the process of installing my Creators Update (where I am promised proper support for WebRTC), but this will take more time than I have to get some nice screenshots of what Edge is doing.

So I asked Philipp Hancke (like I do about these things).

Here's what I got:

- Edge enables persistence for getUserMedia

- It has a model similar to Firefox - you need to opt-in for persistency

- It doesn't support screen sharing yet

Are Browser Vendors Responsible for Our WebRTC Actions?

Yes they are.

In the same way that browser vendors are pushing HTTPS everywhere, removing Flash from the web, protecting against known phishing sites, etc., they also need to protect users from the abuse of WebRTC.

The first step is by not allowing developers to do stupid (by forcing encryption and DTLS-SRTP for example). The second one, just as important, is by not allowing users to do stupid.