A quiet crisis is underway: AI is gobbling up all the world’s memory, pushing prices up by as much as 6X and forcing WebRTC and Voice AI to rethink how they operate.

Memory. Nobody thinks about memory in WebRTC applications. At least not at first. Storage? Even less. A conversation I had with my “PC guy” caused me to rethink this, especially now in 2026. Especially when prices are going up.

I went down this rabbit hole to see where it leads for WebRTC.

Key Takeaways: TL;DR

- AI is driving memory prices up significantly, impacting WebRTC and Voice AI functionality

- Memory prices have surged 3-6X in 2026 due to AI companies purchasing most available production capacity

- WebRTC applications require more memory, especially for high resolutions and new codecs; optimization has become essential

- Voice AI continues to develop rapidly in the cloud, while on-device processing faces memory constraints

- Long-term improvements in production capacities, memory optimization, and increasing demand will shape the future of tech

The price of memory in 2026

In January this year, my son came to me, complaining that his PC was too slow. Apparently, his benchmark for slowness was the ability to run Baldur’s Gate properly. I called my trusted PC guy, who suggested I upgrade the CPU and GPU while leaving the rest of that PC (which was purchased from him a few years back) intact.

I wondered if it would be better to wait a few months for a shiny new NVIDIA GPU. He said “do it now”. So I did. That same day, I made the purchase. Two days later, I came with the PC for the upgrade. That’s when prices rose by 50% for GPUs… that purchase was a timely one.

Fast forward another month, I needed to go to my PC guy for something else. In the small talk we had, he told me that memory and storage prices skyrocketed and are now 3-6 times higher than they were just two months ago.

He had planned to move out of his basement office into a real office space. Instead, he now plans to rent out the basement, move “upstairs” into the house, and split the warehouse with his partner.

His future holds downsizing. He’s been in the market for the last 20 years, and this is his stance:

- We’re in for troubled times for PC purchasing due to the price hikes

- This means less business

- A lot of stores are going to go bankrupt and close shop

- He is downsizing with the intent of staying alive and being there when the market goes back up again

- His timeline is to wait it out for the next two years. It might take less, but today, he is planning for two years

The large consumer brands apparently lost their ongoing contracts with storage and memory suppliers. Some of those contracts were broken (with the associated fines) because the AI vendors and IaaS hyperscalers simply purchased the suppliers’ entire inventory for the year and even further into the future.

This thing is going to affect everyone, which leads me to how this will affect WebRTC and the future of Voice AI – because I am sure it will.

Memory prices by the numbers

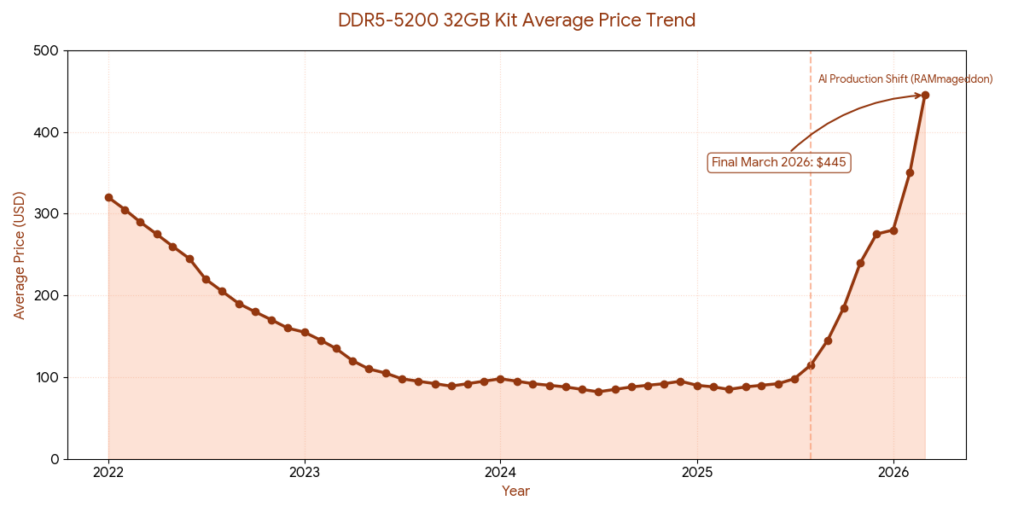

The graph above illustrates the change in average price of computer memory – the DDR5-5200 32GB kind.

DDR5 was released in July 2020. The downward price trend we see in the chart, which stabilized at around the $100 mark, was expected to continue until DDR5’s replacement by DDR6 (not yet here). What happened instead was that AI shifted production towards its own memory requirements – RAMmageddon. The hockey stick effect that started in mid-2025 is becoming a serious problem.

Here’s where we’re at:

- Panic buying: As prices cleared the $400 mark in mid-March, panic buying from system integrators and individual builders depleted remaining channel inventory. The month-end average hit $445

- Production dominance: Samsung and SK Hynix remain almost exclusively dedicated to HBM3e/HBM4 for AI accelerator backlogs. No industry signals suggest a return to significant consumer DDR5 production before Q3 2026

- Secondary market volatility: The $445 figure is the retail average for in-stock items. On secondary markets like eBay, high-performance kits (6400MHz+) regularly exceed $600
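To tie these figures back to the “3-6X” claim, here is a minimal sketch of the arithmetic. It assumes the roughly $100 level where prices had stabilized as the baseline; the function name and constants are mine, only the dollar figures come from the text above.

```python
# Figures quoted in this section (USD, DDR5-5200 32GB).
BASELINE_PRICE = 100     # rough level where prices had stabilized (assumed baseline)
MARCH_AVERAGE = 445      # month-end retail average for in-stock items
SECONDARY_MARKET = 600   # floor for high-performance kits on secondary markets


def price_multiple(current: float, baseline: float = BASELINE_PRICE) -> float:
    """Return how many times higher `current` is than `baseline`."""
    return current / baseline


if __name__ == "__main__":
    print(f"Retail average:   {price_multiple(MARCH_AVERAGE):.2f}X")
    print(f"Secondary market: {price_multiple(SECONDARY_MARKET):.2f}X or more")
```

Under that baseline, the retail average sits in the middle of the 3-6X range and the secondary market at its top end.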

Memory and prices in the news

I’ve had this one lined up for writing for quite some time now. So as part of my daily Feedly read, I started collecting relevant tidbits and news stories. Here are a few of them – so you know this is real (if you haven’t purchased a device in the past 3-4 months):

Consumer Devices: The supply constraints and “RAM shortage” are so critical that Phison’s CEO warned the issue could “kill products and companies”. This has already been seen in the consumer market, with Valve’s Steam Deck OLED running out of stock due to the “memory, storage, and RAM crisis”. Furthermore, the lack of component capacity may be why Google’s Pixel 10a is reportedly the “same damn phone as the Pixel 9a”, suggesting product stagnation due to component constraints.

Storage Sector: The scarcity extends to storage components, with Western Digital reporting that it has “no more HDD capacity left”. The resulting solid-state drive (SSD) shortage has had such a profound effect that Sony has shut down nearly its entire memory card business.

Enterprise Impact: Major technology corporations are also reporting the financial strain. HP, for example, indicated that the rising cost of DRAM and flash was a factor in its Q1 2026 results, while general news reports continue to confirm a pattern of “memory price hikes”.

This market shift means the industry can no longer count on newer devices having better memory. Optimizing memory use in WebRTC and Voice AI is now urgent.

Memory use in WebRTC & Voice AI

WebRTC is a memory hog. Especially when using video channels.

The video encoder and decoder consume large amounts of memory in their media pipeline. There’s a need to hold the encoded bitstream (commonly a megabit or more per second of traffic in each direction). Then there are the raw video frames prior to encoding and the decoded video frames, which are the inputs and outputs of a video codec’s operation.

Sophisticated codecs have multiple dependencies across frames and may need to “remember” more than a single frame back to operate properly.

Jitter buffers store packets received until they can be processed and played to the user.

All that buffering and processing takes up memory on the device of the user.

- The higher the video resolution, the more memory is needed.

- The higher the frame rate, the more memory is needed.

- The newer the codec used, the more memory is needed.

- The larger the group meeting, the more memory is needed (usually).
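To put rough numbers on the resolution and group size effects, here is a back-of-the-envelope sketch. It assumes raw frames are held as 8-bit I420 (YUV 4:2:0), which takes 1.5 bytes per pixel. The number of frames held per stream and the number of streams are illustrative knobs I picked, not values measured from any WebRTC implementation.

```python
# Raw video frame memory, assuming 8-bit I420 (12 bits = 1.5 bytes per pixel).
BYTES_PER_PIXEL_I420 = 1.5

RESOLUTIONS = {
    "VGA": (640, 480),
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def frame_bytes(width: int, height: int) -> int:
    """Size of one raw I420 frame in bytes."""
    return int(width * height * BYTES_PER_PIXEL_I420)


def pipeline_bytes(width: int, height: int, frames_held: int, streams: int = 1) -> int:
    """Raw-frame memory if each of `streams` video streams holds `frames_held` frames.

    Both knobs are hypothetical; real pipelines vary by codec and platform.
    """
    return frame_bytes(width, height) * frames_held * streams


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        one_frame_mib = frame_bytes(w, h) / 2**20
        # Hypothetical call: 4 video streams, 8 raw frames held per stream.
        call_mib = pipeline_bytes(w, h, frames_held=8, streams=4) / 2**20
        print(f"{name:>5}: {one_frame_mib:6.2f} MiB per frame, "
              f"{call_mib:7.1f} MiB for 4 streams x 8 frames")
```

A single 1080p frame is roughly 3 MB in this format, and the total scales linearly with pixel count, frames held, and the number of streams. This covers raw frames only; the encoded bitstream and jitter buffers come on top of it.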

As a general rule, as time goes by, WebRTC implementations require more memory to operate because we ask them to do more. This only gets reduced when an optimization effort takes place – like the one Google undertook for WebRTC during the pandemic.

For our industry?

Higher memory prices mean we can’t assume devices in the next year or two will have better memory profiles. We need to make do with what we have and optimize for memory use more than we would have otherwise.

WebRTC, video conferencing and the push for better quality

When I started working in the video conferencing industry a gazillion years ago (27, but who’s counting)… getting to a VGA resolution was a challenging feat. We did things on dedicated hardware with real time operating systems.

Fast forward to today, we run video conferencing on any conceivable device, running any type of operating system. The resolutions we’re aiming for start at 720p but easily go up to 4K and 5K in screen sharing.

For cloud gaming, we want 1080p or even 4K.

There are talks about 8K (thankfully, not in our industry yet).

We want higher resolutions and higher frame rates, in an ongoing and never-ending effort to get better media quality.

Is this going to stop? At some point. But not yet.

Here’s the thing though. In 2026 and into 2027, it might be time to squeeze more media quality out of existing resolutions and bitrates, so that we can bring that better experience to existing and lower-end devices.

And the compelling reason for that is going to be the fact that newer devices might not come with higher memory numbers. They might even be coming with lower memory – just to keep the costs under some control.

Voice AI – hypergrowth we haven’t seen for a long time

Voice AI isn’t stopping for anyone.

If you’ve been following WebRTC since its inception, some 15 years ago, you probably don’t remember anything like that.

When WebRTC launched, there was certainly excitement, hype, and growth. But Voice AI makes those early days of WebRTC look like child’s play. There are so many vendors developing groundbreaking technology in this space. It’s nearly impossible to keep up with the breakneck pace of daily evolution.

All Voice AI and Video AI (avatars and digital humans) are currently running in the cloud, consuming insane amounts of GPU and memory resources. This is only going to accelerate and grow. In a way, we’re putting more fuel into the fire 🔥

And no. This isn’t going to stop because of memory shortages. Remember that all this goodness is taking place in cloud compute and not on devices – whatever comes out of this is running across all devices at all times.

In a way, we don’t need to upgrade our devices to support this brave new world of Voice AI… we just need a beefier cloud. That’s the same cloud that is buying all available memory production capacity for years to come…

Our near term future

Time to check what’s next. What does all this mean for us mere creators who are trying to build our next WebRTC application? How is this going to affect us in the near future?

Here are my two cents to add into this discussion.

With WebRTC, it is all about baby steps

We’ve seen Zoom doubling down on the high end when it comes to video conferencing. It announced an Enhanced Media Add-On. This includes:

- Higher frame rate: 1080p60fps participant video streams

- Higher bit rate: Increase participant video stream quality

- 1080p content sharing at 60fps: enables a smoother sharing experience

- High bandwidth mode: Increases client downlink from 30Mbps to 100Mbps

- High quality video layout: In gallery view on 4K displays

All this goodness requires… well… more memory. Especially on the client devices that need to process it, but not only there.

Google? You can often now find 1080p video resolutions in Google Meet sessions. Up from 720p of the “past”.

For the rest of us? I’d say focusing on sub-1080p resolutions and doubling down on AV1 video encoding is where I’d be.

Here’s why:

- Higher resolutions require more resources

  - While great, I am not sure the extra costs on your infrastructure are worth it in 2026

  - Your users are unlikely to upgrade their devices as much as they did in the past 5 years. Expect the capabilities they have had up until now to remain roughly the same at the end of this year as well

- AV1 is where video coding is headed

  - It requires less bitrate, but does put a higher strain on memory for encoding and decoding versus older video codecs

  - It is where you can improve quality in a way that higher resolutions can’t, and it is where I’d put my efforts now in 2026

Voice AI to keep workloads in the cloud

For Voice AI, we will see more of the same. Huge strides forward in technology advancement and adoption.

What we will start seeing is experimentation with running voice AI on the edge – in the devices themselves. Instead of pushing voice towards the cloud, there will be attempts to do speech-to-text processing directly on the device. This will run counter to the trend of multimodal LLMs that are introducing direct speech-to-speech models.

Which one will win? The cloud. For now.

To run these types of models on devices would require further optimization and downsizing of model size – but it will also require more memory in these devices 👉 not in 2026.
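To see why downsizing the model and device memory go hand in hand, here is a rough sketch of the memory needed just to hold a model’s weights: parameter count times bits per weight. The parameter counts below are hypothetical and chosen only to show the scaling. The estimate ignores activations, caches and the runtime itself, so treat it as a lower bound.

```python
def weights_gib(params_billions: float, bits_per_weight: int) -> float:
    """Memory needed to hold model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    # Hypothetical model sizes, not tied to any specific speech model.
    for params in (0.5, 1.0, 3.0, 8.0):
        row = ", ".join(
            f"{bits}-bit: {weights_gib(params, bits):5.2f} GiB" for bits in (16, 8, 4)
        )
        print(f"{params:>4}B params -> {row}")
```

Halving the bits per weight halves the footprint, which is what quantization buys you. Even so, a model of a few billion parameters competes for the same few gigabytes that the OS, the browser and the media pipeline already need on a mid-range device.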

Longer term

Three things are going to happen longer term. They are important, but all of them will take time to mature:

- Production capacities will increase. This will mean higher supply which will lower the price points back to sanity. Assume at least 2 years for this to happen. That’s what my PC-guy is estimating and he is the best in the market – I trust him

- Optimization in memory use. AI takes a lot of memory. Making it take less is important. Google is here already, which means others are investing in it as well

- Increased demand. We’re only starting out with Voice AI. As deployments start ramping up, demand will increase and with it demand for memory in the cloud. That is already factored into the prices, but might need adjustments

FAQ

Why are memory prices rising?

AI companies – led by the hyperscalers and GPU vendors – are buying up all available memory production capacity for HBM and high-bandwidth chips. Samsung and SK Hynix shifted nearly all production to AI-grade memory, leaving consumer DDR5 in short supply. Prices jumped 3-6X in just a few months.

How does this affect WebRTC applications?

Higher video resolutions, newer codecs like AV1, and larger group calls all require more memory on the device. Jitter buffers, raw video frames, and encoded bitstreams all consume memory. When devices can’t be upgraded, developers need to optimize within existing constraints.

Will Voice AI move to on-device processing?

Not in 2026. Running speech-to-speech models on devices requires significant memory and processing power. With memory prices climbing, device upgrades are slowing down. Cloud-based Voice AI will remain dominant for now, which adds more demand pressure on the same memory supply chain.

Balancing for performance of low and mid-range devices

Here’s where I think your focus should be when thinking about media quality and optimization.

This year, if you aren’t exclusively targeting the high end of devices, aim for the performance in the low and mid-range. Assume that users won’t switch devices and won’t upgrade unless they must.

Now, more than ever, you should think about making do with what’s available and optimizing for it.
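One way to act on this is to pick media limits per device tier instead of assuming the best case. The sketch below is only an illustration: the `VideoProfile` fields, the tiers and every threshold are hypothetical and should come from your own measurements. The memory figure is assumed to be supplied by the caller, for example from the browser’s Device Memory API where it is available.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VideoProfile:
    max_height: int       # cap on vertical resolution to send and receive
    max_fps: int          # cap on frame rate
    max_video_tiles: int  # how many remote videos to decode at once


# Hypothetical tiers: (memory ceiling in GB, profile used below that ceiling).
PROFILES = [
    (4.0, VideoProfile(max_height=360, max_fps=15, max_video_tiles=4)),
    (8.0, VideoProfile(max_height=720, max_fps=30, max_video_tiles=9)),
]
DEFAULT_PROFILE = VideoProfile(max_height=1080, max_fps=30, max_video_tiles=16)


def pick_profile(device_memory_gb: float) -> VideoProfile:
    """Return the first profile whose memory ceiling is above the device's RAM."""
    for ceiling_gb, profile in PROFILES:
        if device_memory_gb < ceiling_gb:
            return profile
    return DEFAULT_PROFILE


if __name__ == "__main__":
    for memory_gb in (2, 4, 8, 16):
        print(f"{memory_gb:>2} GB -> {pick_profile(memory_gb)}")
```

The point of the design is that the default experience is tuned for the low and mid-range tiers, and the high end becomes the exception you opt into.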