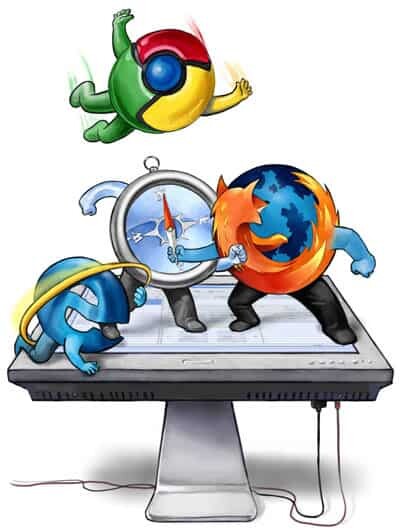

Companies care little about standards. Unless it serves their selfish objectives.

The main complaint around WebRTC? When is Apple/Microsoft going to support it?

How can that be when WebRTC is being defined by the IETF and W3C? When it is part of HTML5?

WebAssembly

We learned last week about a brand new initiative: WebAssembly. The concept? Have a binary format to replace JavaScript, acting as a kind of byte-code. The result?

- Execute code on web pages faster

- Enable more languages to “run” on web pages, by compiling them to this new byte-code format
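
To make the byte-code idea concrete, here is a minimal sketch. It assumes the WebAssembly JavaScript API as it later shipped in browsers and Node.js, which did not exist when this initiative was announced. The hand-written byte sequence and the exported name `add` are illustrative assumptions, not anything taken from the announcement.

```typescript
// A tiny hand-assembled binary module that exports one function, add(a, b).
// Assumption: this uses the WebAssembly JS API as it eventually shipped.
const addModuleBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, // magic number: "\0asm"
  0x01, 0x00, 0x00, 0x00, // binary format version 1
  // type section: one function type, (i32, i32) -> i32
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f,
  // function section: one function, using type 0
  0x03, 0x02, 0x01, 0x00,
  // export section: export function 0 under the name "add"
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00,
  // code section: local.get 0, local.get 1, i32.add, end
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b,
]);

// Compile and instantiate synchronously; fine for a module this small.
const wasmModule = new WebAssembly.Module(addModuleBytes);
const wasmInstance = new WebAssembly.Instance(wasmModule);

// The export is an ordinary callable from JavaScript's point of view.
const add = wasmInstance.exports.add as (a: number, b: number) => number;

console.log(add(2, 3)); // prints 5
```

In practice nobody writes these bytes by hand. A compiler for C, Rust or another language would emit them, which is the whole point of the second bullet above.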

If the publication on TheNextWeb is accurate, then this WebAssembly thing is endorsed by all the relevant browser vendors (that’s Google, Apple, Microsoft & Mozilla).

WebAssembly is still just a thought. Nothing as substantial as WebRTC is. And yet…

WebAssembly yes and WebRTC no. Why is that?

Why is that?

Decisions happen to be subjective and selfish. It isn’t about what’s good for the web and end users. Or rather, it is, as long as it fits our objectives and doesn’t give competitors an advantage or remove an advantage we have.

WebAssembly benefits almost everyone:

- It makes pages smaller (binary code is smaller than text in general)

- It makes interactive web pages run faster, allowing more sophisticated use cases to be supported

- It works better on mobile than simple text

Google has no issue with this – they thrive on things running in browsers

Microsoft are switching towards the cloud, and are in a losing game with their dated IE – they switched to Microsoft Edge and are showing some real interest in modernizing the experience of their browser. So this fits them

Mozilla are trying to lead the pack, being the underdog. They will be all for such an initiative, especially when WebAssembly takes their efforts in asm.js and builds on them. It validates their credibility and their innovation

Apple. TechCrunch failed to mention Apple in their article on WebAssembly. A mistake? On purpose? I am not sure. They seem to have the most to lose: a better web means less reliance on native apps, where they rule thanks to the current iOS-first focus of most developers

All in all, browser vendors have little to lose from WebAssembly while users theoretically have a lot to gain from it.

WebRTC

With WebRTC this is different. What WebRTC has to offer for the most part:

- Access to the camera and microphone within a web browser

- Ability to conduct real time voice and video sessions in web pages

- Ability to send arbitrary data directly between browsers
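
Since support differs from browser to browser, a page usually has to check for these three capabilities before relying on them. The following is a rough sketch of such a check. `BrowserLike`, `WebRtcSupport` and `detectWebRtcSupport` are hypothetical names made up for this illustration. Only `navigator.mediaDevices.getUserMedia`, `RTCPeerConnection` and its `createDataChannel` method are real browser APIs.

```typescript
// Hypothetical shape for "something that looks like a browser global object".
// Only the members this check needs are listed, and all of them are optional.
interface BrowserLike {
  navigator?: { mediaDevices?: { getUserMedia?: unknown } };
  RTCPeerConnection?: { prototype?: { createDataChannel?: unknown } };
}

// One flag per capability from the list above (hypothetical type).
interface WebRtcSupport {
  mediaCapture: boolean;   // camera and microphone access
  peerConnection: boolean; // real time voice and video sessions
  dataChannel: boolean;    // arbitrary data between browsers
}

// Feature detection only: nothing is opened, prompted for, or connected here.
function detectWebRtcSupport(env: BrowserLike): WebRtcSupport {
  const pc = env.RTCPeerConnection;
  return {
    mediaCapture:
      typeof env.navigator?.mediaDevices?.getUserMedia === "function",
    peerConnection: typeof pc === "function",
    dataChannel: typeof pc?.prototype?.createDataChannel === "function",
  };
}

// An environment with none of the APIs reports no support at all.
console.log(detectWebRtcSupport({}));
```

In a real page the argument would be `window`. A browser that has not implemented WebRTC would report `false` across the board, which is exactly the complaint this post started with.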

The problem stems from the voice and video capability.

Google have Hangouts, but make money from people accessing web pages. Having ALL voice and video interactions happen in the web is an advantage to Google. No wonder they are so heavily invested in WebRTC

Mozilla has/had nothing to lose. They had no voice or video assets to speak of. At the time, most of their revenue also came from Google. Money explains a lot of decisions…

Microsoft has Skype and Lync. They sell Lync to enterprises and paid $8.5 billion for Skype. Why would they open up the door to competitors so fast? They are now headed there, making sure Skype supports it as well

Apple. They have FaceTime. They care about the Apple ecosystem. Having access to it from Android for anything that isn’t a Move to iOS app won’t make sense to them. Apple will wait until the last moment to support it, making sure everyone who wishes to develop anything remotely related to FaceTime (which was supposed to be standardized and open) has a hard time doing that

All in all, WebRTC doesn’t benefit all browser vendors the same way, so it hasn’t been adopted with the same zeal that WebAssembly seems to attract.

Why is it important?

Back to where I started: Companies care little about standards. Unless it serves their selfish objectives.

This is why getting WebRTC to all browser vendors will take time.

This is why federating VoIP/WebRTC isn’t on the table at this point in time – the successful vendors who you want to federate with wouldn’t like that to happen.

“This is why federating VoIP/WebRTC isn’t on the table at this point in time”

I disagree with that statement. I think federating VoIP is not on the table because fortunately it is not needed anymore and doesn’t provide enough benefits for users. I recommend this presentation from Rosenberg in IETF some years ago:

http://www.ietf.org/proceedings/80/slides/plenaryt-5.pptx

Funny. Most times people state they disagree with me because they think federation is so darn important.

Rosenberg’s reasoning is just fine – I agree with it all. Others find it easy to try and fix the technical issues, but the thing is – there’s no real incentive as at the end of the day, even if technically it would work – most big islands won’t allow it.

I totally agree on the reasons why federation currently does not work.

But I disagree that this is a good thing, for users. But they don’t care.

I have some respect for Apple for sticking to their guns and having some discipline in their strategy (although I don’t use their stuff), but Microsoft just confuses me: you just don’t know what they’re going to do and they seem to end up with mixed solutions that implement some things but not others, not to mention being late to the game with just about everything. It actually requires more effort to stick with Apple and Microsoft stuff. Interesting that they both have a user base that is tied to hardware devices, rather than focusing on ‘open’ services.

Hey Tsahi!

Will WebAssembly have the same capabilities as WebRTC?

* Access to the camera and microphone within a web browser

* Ability to conduct real time voice and video sessions in web pages

* Ability to send arbitrary data directly between browsers

Cheers!

Jose,

The two are orthogonal in the browser.

WebAssembly comes as a replacement for the JavaScript interpreting happening in browsers today, with the intent of improving the performance of interactive web sites and web apps. WebRTC is a media engine mechanism built into the browser.

So the answer is that WebRTC and WebAssembly don’t have the same capabilities and they aren’t even targeting the same problem domain.