Because we can.

I think the people who defined WebRTC are historians or librarians. I say this all the time: WebRTC brings practically no new technology with it. It is a collection of existing standards, some brought from the dead, coupled together to create this thing called WebRTC. It is probably why many VoIP vendors fail to understand the disruption of it.


Before I digress, let me get back on track here:

The Data Channel is a part of WebRTC. Most developers neglect it. It is awesome.

Oh. And it runs on top of this no-name protocol called SCTP.

What is SCTP?

SCTP stands for Stream Control Transmission Protocol. It is an IETF standard (RFC 4960, which replaced the original RFC 2960). And it is old. From 2000. That's when I had a full year of experience in VoIP. H.323 was the next big thing. SIP was mostly a dream. Chrome didn't exist. Firefox didn't exist either. We're talking great-grandparents type old in technology years.

SCTP sits somewhere between TCP and UDP - it is a compromise of both, or rather an improvement on both. It also has a feature called multi-homing which isn't used in WebRTC (so I'll ignore it here).

I remember some time around 2005 or so, we decided at RADVISION to implement SCTP. I don't recall if this was for SIP or for Diameter. The problems started when we looked for an SCTP implementation. None really existed in the operating system. We ended up implementing it on our own, on top of raw sockets - not the best experience we had.

Fast forward to 2014. SCTP is nowhere to be found. Sure - some SIP Trunks have it. A Diameter implementation or two. But if you go look at Wikipedia for SCTP, this is what you get once you filter the list for relevant operating systems:

- Generic BSD (with external patch)

- Linux 2.4 and above

- SUN Solaris 10 and above

- VxWorks 6.x and above (but not all 6.x versions)

- Nothing on Windows besides third parties

Abysmal for something you'd think should be an OS service.

Mobile? It is there on Android, but someone needs to turn the lights on when you compile Android and enable it. On iOS? Meh.

Where SCTP is used in WebRTC

SCTP is almost never used. And when it is, it is by the VoIP community and for server-to-server communications. But now in WebRTC, it is used for peer-to-peer arbitrary data delivery across browsers.

Why on earth?

The answer lies in the word arbitrary. The data channel is there for use cases we have no clue about. Where we don't really know the types of requirements.

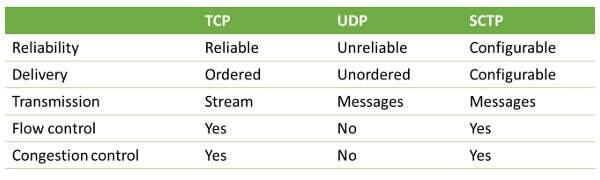

I'll try to explain it with the table below that I use in my training sessions:

- Reliability - if I send a packet - do I have the confidence (an acknowledgment) that it was received on the other end?

- Delivery - if I receive 2 packets - am I sure the order they were received is the order in which they were sent?

- Transmission - am I sending packets or an endless stream of bytes?

- Flow control / congestion control - does the protocol itself act responsibly and deal with congested networks on its own?
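For readers who can't see the image, here is a rough text version of that comparison as a TypeScript sketch. The values reflect how TCP, UDP and SCTP are commonly characterized, not a copy of the table itself, and the names `TransportTraits`, `transports` and `configurableTransports` are made up for illustration.

```typescript
// How each transport answers the four questions above.
type Answer = "yes" | "no" | "configurable";
type TransportName = "TCP" | "UDP" | "SCTP";

interface TransportTraits {
  reliable: Answer;           // is lost data retransmitted?
  ordered: Answer;            // does data arrive in the order it was sent?
  messageOriented: boolean;   // discrete messages (true) or a byte stream (false)
  congestionControl: boolean; // does the protocol back off on congested networks?
}

const transports: Record<TransportName, TransportTraits> = {
  TCP: { reliable: "yes", ordered: "yes", messageOriented: false, congestionControl: true },
  UDP: { reliable: "no", ordered: "no", messageOriented: true, congestionControl: false },
  SCTP: { reliable: "configurable", ordered: "configurable", messageOriented: true, congestionControl: true },
};

// List the transports that let the application choose a trait instead of fixing it.
function configurableTransports(trait: "reliable" | "ordered"): TransportName[] {
  return (Object.keys(transports) as TransportName[]).filter(
    (name) => transports[name][trait] === "configurable"
  );
}
```

Asking `configurableTransports("ordered")` leaves only SCTP standing, which is the whole point of the comparison.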

For SCTP, reliability and delivery are configurable - I can decide if I want these characteristics or not. Why wouldn't I want them? Everything comes at a price. In this case, the price is latency, plus assumptions baked in about my use case.

So there are times I'd like more control, which leans towards UDP. But I also want to make life easier for developers, so I'd rather hint to the protocol about my needs and let it handle the rest.
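In the browser, that hinting happens through the options passed to `createDataChannel`. Below is a minimal sketch of the idea. The `ordered`, `maxRetransmits` and `maxPacketLifeTime` fields are the standard data channel options; `ChannelOptions` is a local copy of them so the snippet type-checks outside a browser, while `DeliveryNeeds` and `toChannelOptions` are hypothetical names invented for this example.

```typescript
// Local mirror of the standard data channel option fields used in this sketch.
interface ChannelOptions {
  ordered?: boolean;
  maxRetransmits?: number;    // give up after this many retransmissions
  maxPacketLifeTime?: number; // give up after this many milliseconds
}

// Hypothetical description of what the application needs from the channel.
interface DeliveryNeeds {
  inOrder: boolean;
  retryLimit?: number;
  maxAgeMs?: number;
}

// Translate the application's needs into data channel options.
function toChannelOptions(needs: DeliveryNeeds): ChannelOptions {
  // The two partial-reliability limits are alternatives, so reject both at once.
  if (needs.retryLimit !== undefined && needs.maxAgeMs !== undefined) {
    throw new Error("Pick either a retry limit or a maximum age, not both");
  }
  const options: ChannelOptions = { ordered: needs.inOrder };
  if (needs.retryLimit !== undefined) options.maxRetransmits = needs.retryLimit;
  if (needs.maxAgeMs !== undefined) options.maxPacketLifeTime = needs.maxAgeMs;
  return options;
}

// TCP-like: reliable and ordered (no limits set).
const fileTransfer = toChannelOptions({ inOrder: true });

// UDP-like: unordered, never retransmit.
const gameState = toChannelOptions({ inOrder: false, retryLimit: 0 });
```

In a page, the result would be handed over with something like `peerConnection.createDataChannel("game", gameState)`, where `peerConnection` is an existing `RTCPeerConnection`. Leaving both limits unset gives the reliable behavior; setting one of them trades reliability for latency.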

Similarly, here's a great post Justin Uberti linked to a few weeks back - it covers the reasons some game developers run their signaling over UDP and not TCP. Read it to get some understanding of why SCTP makes sense for WebRTC.

The Data Channel is designed for innovation. And in this case, using an old and unused tool was the best approach.