There’s more to WebRTC than voice and video calling. You can even use it to send files across browsers.

[Hadar Weiss (@whadar) is CTO and Founder at Peer5 which runs sharefest.me. I’ve had several interesting chats with him, and I wanted to have him here as a guest. In this first post by Hadar, he will explain how to use the WebRTC data channel to send a file.]

–

The WebRTC Data API (aka DataChannels / RTCDataChannel) lets developers transmit arbitrary data directly between two users (P2P) with very low latency. This was not possible until recently, and it’s a game changer. Why? Because in the next few years it will be an important building block for web applications.

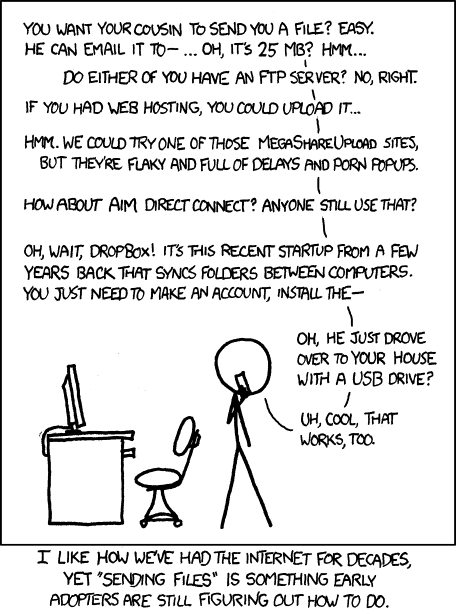

The most obvious and talked-about applications are web conferencing and file sharing. Traditionally, file sharing and video chat were built using P2P technology that relied on proprietary clients (BitTorrent, Skype). Due to several limitations, that was the best (or the only) choice for the mainstream. But once WebRTC matures, the natural, long-awaited evolution (not revolution, in my opinion) will prevail.

Being on the web brings so many powerful advantages. The web is standard, more updatable, accessible, searchable, embeddable and is just plain awesome.

But the best part is that being a p2p web application means that your app can work out-of-the-box from any modern browser (now more than 1B endpoints) without installation.

Think about it for a moment… 1,000,000,000+ endpoints.

But a few things need to happen before this dream comes true. For one, the WebRTC Data API is still very limited, incomplete and poorly supported by browsers. For example, Chrome and Firefox cannot send data to each other. Binary data is not yet supported on stable Chrome; it is available only on recent Canary versions, with the inclusion of SCTP. Safari and IE still have no clear roadmap for WebRTC.

The second obstacle is that many essential complementary services are still under development and maturing: signaling, NAT traversal and fault-tolerant backends. And when doing many-to-many file sharing, there’s more to take care of that relates to distributed computing: matching of the peers, load distribution, data authentication, streaming throttling and resource preservation. But wait, we’ll get to this in another blog post…

Let’s dive into how the Data API works and what it takes for a web developer to build a basic one-to-one file-sharing app. Although dealing with files adds complexity, I’m going to use Sharefest as a reference, since it’s a real-world, open source project that represents a familiar use case: sending a large file to somebody.

Sharefest works using a many-to-many swarming algorithm (or mesh network), where more than two users (peers) can consume a file efficiently. I will try to ignore this and focus on the actual steps that are necessary even in the simplest 1-to-1 scenario.

Step 1: Read the file into JS space

Your file is in your filesystem and we need to make it accessible in your browser, so we can start using it. File API to the rescue:

In the index.html we have

<input onchange="addFiles(this.files)" type="file" id="files" name="files[]" multiple>

When clicking (or dragging) files, addFiles will be called with the selected files’ metadata.

Currently in Sharefest, we are only dealing with one file at a time (help us to improve this), so we just read the first file:

var file = files[0];

var reader = new FileReader();

…

reader.onloadend = function(evt) {

//if needed read next slice

…

// else:

updateList(files);

var fileInfo = new peer5.core.protocol.FileInfo(null, file.size,

file.name, file.lastModifiedDate, file.type);

//tell server about our new file - only metadata!

sharefestClient.upload(fileInfo);

}

//start reading file

blob = file.slice(sliceId * sliceSize, (sliceId + 1) * sliceSize);

reader.readAsArrayBuffer(blob);

Step 2: Chunkify (and possibly also blockify)

We were able to read the file in JS, but it doesn’t mean that we can send it with the Data API. The API doesn’t split large chunks of data, and requires the developer to do this instead. From the specs, the MTU size is defined to be 1280 bytes. From our experiments, it is better to send packets under 1200 bytes to make sure there are no drops. We call these atomic pieces of data, chunks.

So basically all we have to do is to slice our long file into these small chunks.

binarySlice.slice(start, Math.min(start + 1200, binarySlice.byteLength));

It is useful to have another level of data encapsulation, blocks, which contain multiple chunks. Block-level verification and an availability bitmap of blocks (which is sent to other peers) are sufficient and save us a lot of bandwidth and CPU (compared with having only chunks). Our blockMap abstracts the blocks and helps to read and write the chunks to local storage.

var blockId = blockMap.setChunk(this.chunkRead, newChunk);

(or blockMap.get(swarmId).getChunk(chunkId) to write it on the receiver side)
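The chunk and block layout described above can be sketched roughly as follows. This is not Sharefest’s actual blockMap: the 1200-byte chunk size comes from the text, while CHUNKS_PER_BLOCK and the function names chunkify and blockIdOf are assumptions made for illustration.

```javascript
// Chunk size from the text; the number of chunks per block is an assumed value.
const CHUNK_SIZE = 1200;
const CHUNKS_PER_BLOCK = 16;

// Split an ArrayBuffer into chunks of at most chunkSize bytes.
function chunkify(buffer, chunkSize = CHUNK_SIZE) {
  const chunks = [];
  for (let start = 0; start < buffer.byteLength; start += chunkSize) {
    const end = Math.min(start + chunkSize, buffer.byteLength);
    chunks.push(buffer.slice(start, end));
  }
  return chunks;
}

// Map a chunk index to the index of the block that contains it.
function blockIdOf(chunkId, chunksPerBlock = CHUNKS_PER_BLOCK) {
  return Math.floor(chunkId / chunksPerBlock);
}

// A 3000-byte buffer yields two full chunks and one 600-byte remainder.
const chunks = chunkify(new ArrayBuffer(3000));
console.log(chunks.map((c) => c.byteLength));
```

With these assumed sizes a block covers 16 × 1200 = 19200 bytes, so verifying and advertising blocks instead of chunks cuts the bookkeeping by a factor of 16.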

Step 3: Signalling

Our sender has its file split into chunks, and is now ready to be connected to other peers. But as you know, you need a server in the middle to initiate the connection. We use node.js and ws for simple and effective signalling. This area has been covered in many other blogs, and I encourage you to read about all the nuances.

In the simple 1 to 1 sending scenario, the server will simply send the metadata encapsulated in a match object: sender.send(peerId, new protocol.Match(swarm.id, peer));

Where the Match class is:

function Match(swarmId,peerId,availabilityMap){

this.tag = exports.MATCH; //protocol tag

this.swarmId = swarmId; //the swarm that contains the two peers

this.peerId = peerId; //the matched peerid

this.availabilityMap = availabilityMap; //bitarray consisting available blocks

}

Note that there are no network descriptors such as an IP address. In the case of 1-to-1 sharing, using this class may be overkill. We can usually assume a single swarm, the peerId (GUID) doesn’t matter, and the availabilityMap would always be full or empty.

On the client side, we decode this message and create a PeerConnectionImpl, which wraps the RTCPeerConnection object and gives the client a consistent implementation on both Firefox and Chrome. For example, this is what the instantiation of the actual PeerConnection object looks like:
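As an illustration of what a bit array of available blocks could look like, here is a minimal sketch. The AvailabilityMap class and its methods are hypothetical and are not taken from the Sharefest code base; they only show the one-bit-per-block idea.

```javascript
// Hypothetical helper: one bit per block, set once the block is fully available.
class AvailabilityMap {
  constructor(numBlocks) {
    this.numBlocks = numBlocks;
    this.bits = new Uint8Array(Math.ceil(numBlocks / 8));
  }

  // Mark a block as available.
  set(blockId) {
    this.bits[blockId >> 3] |= 1 << (blockId & 7);
  }

  // Check whether a block is available.
  has(blockId) {
    return (this.bits[blockId >> 3] & (1 << (blockId & 7))) !== 0;
  }

  // True when every block is available (the "full" case of a 1-to-1 sender).
  isFull() {
    for (let i = 0; i < this.numBlocks; i++) {
      if (!this.has(i)) return false;
    }
    return true;
  }
}

const map = new AvailabilityMap(10); // 10 blocks fit in 2 bytes
map.set(9);
console.log(map.has(9), map.has(8), map.isFull());
```

In the 1-to-1 case the sender’s map has every bit set and the receiver’s starts with none set, which is why the text calls the full structure an overhead there.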

if(window.mozRTCPeerConnection)

this.peerConnection = new this.RTCPeerConnection();

else

this.peerConnection = new this.RTCPeerConnection(

servers

,{ optional:[{ RtpDataChannels:true }]}

);

Then we start the call:

this.peerConnection.createOffer(

this.setLocalAndSendMessage_,

function (err) {

peer5.debug('createOffer(): failed, ' + err)

},

this.createOfferConstraints);

WebRTC (the browser) is going to call our setLocalAndSendMessage_ with the SDP (see https://hacks.mozilla.org/2013/07/webrtc-and-the-ocean-of-acronyms/#sdp).

And we will wrap its SDP text with our protocol message:

var sdpMsg = new peer5.core.protocol.Sdp(thi$.originId, thi$.targetId, session_description);

This ensures the server is able to route the SDP message to the correct target.

The answer side is much the same, and also very similar to the standard WebRTC flow (see createAnswer).

Step 4: ICE or just STUN

ICE stands for Interactive Connectivity Establishment. It enables P2P in various network conditions that involve NATs and firewalls. ICE incorporates STUN and TURN. While smart people can elaborate on this matter, I’d rather dumb it down to STUN = Real P2P, TURN = P2P Thru Relay.

In some scenarios, you may decide not to use TURN and restrict only to STUN. This will ensure no one is in the middle – which may improve speeds, security and operating costs.

for (var i = 0; i < stun_servers.length; ++i) {

servers.iceServers.push({url:"stun:" + stun_servers[i]});

}

This servers list is passed as a parameter to the RTCPeerConnection constructor.
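As a rough sketch of the STUN-only setup, the configuration object can be built as a plain value before it is handed to the constructor. The host names are placeholders and buildStunOnlyConfig is a hypothetical helper; note that the current RTCPeerConnection configuration names the field `urls`, whereas the snippet above uses the older `url` form.

```javascript
// Placeholder STUN hosts, for illustration only.
const stunServers = ["stun.example.org:3478", "stun2.example.org:3478"];

// Build a configuration that lists STUN servers only (no TURN relay).
function buildStunOnlyConfig(hosts) {
  return {
    iceServers: hosts.map((host) => ({ urls: "stun:" + host })),
  };
}

const config = buildStunOnlyConfig(stunServers);
console.log(JSON.stringify(config));

// In a browser, the object would then be passed to the constructor:
// const pc = new RTCPeerConnection(config);
```

Leaving TURN out means peers behind restrictive NATs may fail to connect at all, so this trade-off is worth making only when a relay is unacceptable.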

Step 5: Actual P2P Data

Each chunk contains some metadata, and is encoded using our protocol:

function Data(swarmId, chunkId, payload) {

this.tag = exports.P2P_DATA;

this.swarmId = swarmId;

this.chunkId = chunkId;

this.payload = payload;

}

Chunks are sent using this Data object:

var dataMessage = new peer5.core.protocol.Data(swarmId, chunkId, peer5.core.data.BlockCache.get(swarmId).getChunk(chunkId));

var packedData = peer5.core.protocol.BinaryProtocol.encode([dataMessage]);

this.peerConnections[peerID].send(packedData);

BinaryProtocol.encode takes a data object and serializes it to a Uint8Array. Until binary channels are available on stable browsers, we are forced to use a hackish base64 encoding and send text instead of binary. This is how our wrapper handles it:

var message = this.useBase64 ? peer5.core.util.base64.encode(binaryMessage) : binaryMessage.buffer;

if (this.dataChannel.readyState.toLowerCase() == 'open') {

peer5.debug("sending data on dataChannel");

this.dataChannel.send(message);

} else {

peer5.info('DataChannel was not ready, setting timeout');

setTimeout(function (dataChannel, message) {

this.send(dataChannel, message);

}, PC_RESEND_INTERVAL, this.dataChannel, message);

}

}

The receiver side is wired to handle these messages:

hookupDataChannelEvents:function () {

this.dataChannel.binaryType = 'arraybuffer';

this.dataChannel.onmessage = this.onMessageCallback_;

this.dataChannel.onopen = this.onDataChannelReadyStateChange_;

this.dataChannel.onclose = this.onDataChannelClose_;

}

this.onMessageCallback_ = function (message) {

peer5.debug("receiving data on dataChannel");

var binaryMessage = this.useBase64 ? peer5.core.util.base64.decode(message.data) : new Uint8Array(message.data);

radio('dataReceivedEvent').broadcast(binaryMessage, thi$.targetId);

}

The dataReceivedEvent is published using radio.js and eventually written to the chunks dictionary (as described before).

Because this is one-to-one sharing and I wanted to keep it simple, I have omitted some of our protocol messages that control the flow of the transmission: HAVE, REQUEST and CANCEL, which are implemented here.
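The base64 fallback used by the send and receive wrappers above can be sketched roughly as follows. This is not the peer5.core.util.base64 implementation; bytesToBase64 and base64ToBytes are hypothetical names, and the sketch relies on the standard btoa and atob functions (available in browsers and in recent Node.js versions).

```javascript
// Encode a Uint8Array as base64 text so it can travel over a text-only channel.
function bytesToBase64(bytes) {
  let binary = "";
  for (let i = 0; i < bytes.length; i++) {
    binary += String.fromCharCode(bytes[i]);
  }
  return btoa(binary);
}

// Decode base64 text back into the original bytes on the receiver side.
function base64ToBytes(text) {
  const binary = atob(text);
  const bytes = new Uint8Array(binary.length);
  for (let i = 0; i < binary.length; i++) {
    bytes[i] = binary.charCodeAt(i);
  }
  return bytes;
}

const original = new Uint8Array([0, 255, 128, 1]);
const roundTripped = base64ToBytes(bytesToBase64(original));
console.log(Array.from(roundTripped));
```

Base64 turns every 3 bytes into 4 characters, so the text fallback costs roughly a third more bandwidth than a binary channel would.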

Step 5.5: Optimizing speed

The data channel spec describes both reliable and unreliable modes. Firefox has implemented both; Chrome has implemented unreliable and plans to implement reliable. In order to optimize transfer speeds, unreliability is key. The latency overhead of reliable transport directly reduces the speed at which data is transferred. Also, some applications don’t even need to transfer data reliably (e.g. video conferencing).

We have planned from the beginning for unreliable data channels. But file transfer still needs to have the entire file and certainly all the chunks of the file. Understanding that, one solution is that the receiver side will need to request chunks that it doesn’t have and monitor those chunks in case the request wasn’t answered.

So we need a data structure, p2pPendingChunks, that records which chunks have been requested and haven’t yet arrived, so we won’t request them again and again. We also need to monitor requests, decide when one has been dropped, and then decide what to do with it.

setTimeout(this.expireChunks, expiration, swarmId, chunksIds, peerId);

The expiration duration (how long we wait for a response to a request before we consider it dropped) can vary. As a rule of thumb from tests we ran, we configured it to 1500ms, but it should be dynamic.
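One way to picture the pending-chunks bookkeeping is the sketch below. It is not Sharefest’s p2pPendingChunks: the PendingChunks class, its method names and the explicit `now` parameter (used so the logic can be exercised without real timers) are assumptions; only the 1500ms default comes from the text.

```javascript
// Hypothetical tracker for chunks that were requested but have not arrived yet.
class PendingChunks {
  constructor(expirationMs = 1500) {
    this.expirationMs = expirationMs;
    this.requestedAt = new Map(); // chunkId -> time of the request, in ms
  }

  // Mark the not-yet-pending ids as pending and return only those,
  // so a chunk that is already in flight is not requested again.
  request(chunkIds, now = Date.now()) {
    const fresh = chunkIds.filter((id) => !this.requestedAt.has(id));
    for (const id of fresh) this.requestedAt.set(id, now);
    return fresh;
  }

  // A chunk arrived: it is no longer pending.
  received(chunkId) {
    this.requestedAt.delete(chunkId);
  }

  // Remove and return the ids whose request is older than the expiration.
  expire(now = Date.now()) {
    const dropped = [];
    for (const [id, time] of this.requestedAt) {
      if (now - time >= this.expirationMs) dropped.push(id);
    }
    for (const id of dropped) this.requestedAt.delete(id);
    return dropped;
  }
}

const pending = new PendingChunks();
pending.request([1, 2, 3], 0);
pending.received(1);
console.log(pending.expire(1500)); // chunks 2 and 3 are considered dropped
```

The ids returned by expire are the ones that can be requested again, possibly from another peer.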

Another issue that needs to be taken care of is flow control. The transfer rate over a given channel between 2 peers is limited, and varies as congestion over that channel varies. Sending data over that channel at a rate higher than the current limit will cause packet loss and effectively slow down your user’s application, other applications and even other users. The data channel spec describes SCTP as the underlying flow-control mechanism. BUT (this is getting old already) Chrome hasn’t yet implemented it. Firefox has implemented it but doesn’t expose the outgoing buffer, thus giving the application no feedback on the state of the channel.

Either way, whether on Firefox or Chrome, the application needs to control its own flow, and we do it via the packet drop mechanism described above. Meaning, when there are lots of dropped packets the transfer rate should go down, and vice versa. The flow control mechanism we’ve implemented is a bit like TCP’s. First, each request a receiver makes can contain many chunks, so each request can be answered with many DATA messages. Second, we keep a window, the maximum number of pending chunks we allow at any given time, and monitor the number of pending chunks (remember the pendingChunks data structure).

So when a request is answered with no dropped chunks, we increase the size of the window by 1. And when there are dropped chunks, we decrease the window by 2*(number of dropped chunks), but by no more than window/2, which is a bit more lenient than TCP.

This heuristic worked quite well for us, but of course there’s room for improvement, and there are many flow control algorithms out there (e.g. SCTP’s) that also adapt the expiration duration.
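The window update rule can be sketched as a small pure function. This is one reading of the rule, not the Sharefest code: nextWindow and MIN_WINDOW are hypothetical names, the lower bound of 1 is an assumption, and "no more than window/2" is interpreted as a cap on the size of the decrease.

```javascript
// Assumed lower bound so the window never closes completely.
const MIN_WINDOW = 1;

// Compute the next window size after one request has been resolved.
function nextWindow(windowSize, droppedChunks) {
  if (droppedChunks === 0) {
    return windowSize + 1; // everything arrived: grow by one
  }
  // Shrink by twice the number of drops, but by at most half of the window.
  const decrease = Math.min(2 * droppedChunks, Math.floor(windowSize / 2));
  return Math.max(MIN_WINDOW, windowSize - decrease);
}

console.log(nextWindow(10, 0)); // grows to 11
console.log(nextWindow(10, 2)); // shrinks by 4, to 6
console.log(nextWindow(10, 4)); // decrease is capped at 5, so 5
```

A single drop in a large window costs only 2 slots here, where TCP would halve the window, which is the leniency the text refers to.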

One last thing on this topic: since Chrome hasn’t yet implemented flow control (as of version M29 and below), it hard-codes a speed limit of 30kb/s using the SDP ‘b’ parameter field. To remove that limitation, we deploy this little hack when handling the SDP signaling while opening a peer connection:

var split = sdp.split("b=AS:30");

if(split.length > 1)

var newSDP = split[0] + "b=AS:1638400" + split[1];

else

newSDP = sdp;

return newSDP;

To understand more, check out this issue: https://github.com/Peer5/ShareFest/issues/10 (thanks to Justin Uberti).

Step 6: Downloading to regular FS

It’s nice that we have all these data chunks in our JS, but unfortunately humans can’t use them there. We need to “download” the file (although it’s already local) to the user’s filesystem and make it accessible:

saveFileLocally:function (blockMap) {

var array = new Uint8Array(blockMap.fileSize);

for (var i = 0; i < blockMap.getNumOfBlocks(); ++i) {

array.set(blockMap.getBlock(i), i * peer5.config.BLOCK_SIZE);

}

var blob = new Blob([array]);

saveBlobLocally(blob, blockMap.getMetadata().name);

},

function saveBlobLocally(blob, name) {

if (!window.URL && window.webkitURL)

window.URL = window.webkitURL;

var a = document.createElement('a');

a.download = name;

a.setAttribute('href', window.URL.createObjectURL(blob));

document.body.appendChild(a);

a.click();

};

This is how Sharefest handles files, but we are changing it because creating a large Uint8Array in memory is heavy and limited to several hundred MBs. For the simple case, though, the above method should be sufficient.

A few words on security

This basic scenario assumes both sides are authenticated and use encrypted client-server communication (we use HTTPS+WSS, which are based on TLS). It is best practice to verify, using SHA-3 or another good hashing algorithm, that the file you’ve downloaded is really the file you should have. I’m going to elaborate on that in a future post.
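As a minimal sketch of such a check, the received bytes can be hashed and compared with a digest obtained over the trusted signaling channel. SHA-256 is swapped in here because it is what the Web Crypto `crypto.subtle.digest` call widely supports; sha256Hex and verifyFile are hypothetical helpers, and the fallback to Node’s webcrypto export is only there so the sketch also runs outside a browser.

```javascript
// Use the browser's Web Crypto API, or Node's equivalent when no global exists.
const subtle = (globalThis.crypto ?? require("node:crypto").webcrypto).subtle;

// Hash a Uint8Array with SHA-256 and return the digest as lowercase hex.
async function sha256Hex(bytes) {
  const digest = await subtle.digest("SHA-256", bytes);
  return Array.from(new Uint8Array(digest), (b) =>
    b.toString(16).padStart(2, "0")
  ).join("");
}

// Compare the hash of the received file with the expected hex digest.
async function verifyFile(bytes, expectedHex) {
  return (await sha256Hex(bytes)) === expectedHex.toLowerCase();
}

const received = new TextEncoder().encode("abc");
sha256Hex(received).then((hex) => console.log(hex));
```

The expected digest has to come from a source the receiver already trusts; a hash sent by the same untrusted peer that sent the data proves nothing.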

FAQ on WebRTC file sharing

Yes.

You can use WebRTC’s data channel to send any arbitrary data between two browsers without the need to have it visible to any web server.

Yes and no.

WebRTC will try to negotiate a direct connection between the two machines that are sharing the file. If it succeeds (usually 80%+ of the time), no TURN server is needed. If it doesn’t, you will need a TURN server to relay the data.

Hi,

is it also possible to stream an audiofile from one browser to many ?

How about streaming an audio file for nodejs to many clients using webrtc?

Maybe StreamRoot can help you: http://www.streamroot.io/

Interesting, but it is not available yet, is it?

Essentially what I like to do is building a radio station which streams long prerecorded mixes. To fake a radio it would be easy to provide a continuous incrementing start point which mimics a server side playlist.

Hi, I am one of streamroot.io co-founders.

We are currently in a beta phase, but should be able to help you with your issue !

What you ask is possible with our solution, don’t hesitate to contact us directly at contact@streamroot.io to discuss it.

With most tools it is pain in the bu** to transfer big files over the internet. Another option is to transfer with Binfer. It is able to transfer big files of any size.

In the interest of keeping up to date, indeed it was a pain in the bu**, but not impossible now at least!

There’s quite a few WebRTC based file transfer proofs online now with reasonable cross browser support.

http://reep.io for example is an open source WebRTC based WeTransfer-like service which works really smoothly, as I understand it, if people aren’t online to receive files, it holds them in waiting to be downloaded in a Postgres Database. These eventually expire. It’s open source too ( https://github.com/KodeKraftwerk/reepio)

http://send-anywhere.com is a Korean Start-up also based on WebRTC, they also have supporting Android/IOs apps and a new chrome extension. Apple has reportedly hired an engineer recently to implement webRTC into their Safari browser, I remain hopeful!

https://seshi.io is a WebRTC based file transfer site for instant transfer between friends/devices which uses IndexedDB for local storage rather than storing any files on a server. It came out of a University research project (Disclaimer, I’m the developer of it, although ‘developer’ is pushing it!)

Do you have this example all packaged together to be able to play with it or see it “all together?”

that would be lovely indeed

You can clone the repo – https://github.com/Peer5/ShareFest

Do you need something else?

This looks very nice, is there also a way to playback an audio file instead of initializing download?

I’ve been suspecting that people read this when they end up using RTP-based datachannels… the advice to pass { optional:[{ RtpDataChannels:true }]} as constraints is somewhat outdated.

http://webrtc.github.io/samples/src/content/datachannel/filetransfer/

shows the 2015 way to do it.

🙂

Philipp,

Thanks. I’ve been worried about this one, as it gets traffic on a daily basis. Care to write a guest post? I’ll forward readers of this one from the top of the page to the new one 🙂

Hi, why is the background dark gray and the text black? It is very difficult to follow the tutorial then

Fixed – thanks for pointing this one out Roland

Is it possible to transfer powerpoint slides?

You can transfer any type of data you see fit

I want to send data and streams to my client using WebRTC. I am running a WebRTC server on a static IP and the clients run on dynamic IPs.

I just wanted to know: if a client’s dynamic IP changes while data is being sent, will the client still receive the data? Will the connection still exist or be lost?

Paresh,

If the client changes its IP address, the WebRTC connection will be severed. In such a case, you will need to perform an ICE restart or create a new peer connection.