WebRTC H.264 hardware acceleration is no guarantee for anything. Not even for hardware acceleration.

There was a big war going on when it came to the video codec in WebRTC. Should we all be using VP8 or should we be using H.264? A lot of digital ink was spilled on this topic (here as well as in other places). The final decision that was made?

Both VP8 and H.264 became mandatory to implement by browsers.

Fast forward to today, and you have this interesting conundrum:

- Chrome, Firefox and Edge implement VP8 and H.264

- Safari implements H.264. No VP8
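You can check which of the two a given browser actually exposes by looking at the codec list returned by `RTCRtpSender.getCapabilities("video")`. The sketch below only reads the `mimeType` field of each entry. The `CodecCapability` interface is a local stand-in for the DOM type, so the code also runs outside a browser. `summarizeVideoCodecs` is a hypothetical helper name.

```typescript
// Local stand-in for the fields of RTCRtpCodecCapability used here,
// so this sketch compiles and runs outside a browser as well.
interface CodecCapability {
  mimeType: string; // e.g. "video/VP8" or "video/H264"
  clockRate: number;
  sdpFmtpLine?: string;
}

interface CodecSupport {
  vp8: boolean;
  h264: boolean;
}

// Hypothetical helper: reports whether VP8 and H.264 appear in a codec list.
// MIME types are compared case-insensitively.
function summarizeVideoCodecs(codecs: readonly CodecCapability[]): CodecSupport {
  const types = new Set(codecs.map((c) => c.mimeType.toLowerCase()));
  return { vp8: types.has("video/vp8"), h264: types.has("video/h264") };
}

// In a browser:
//   const caps = RTCRtpSender.getCapabilities("video");
//   console.log(summarizeVideoCodecs(caps ? caps.codecs : []));
```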

Leaving aside the question of what mandatory really means in English (leaving it here for the good people at Apple to review), that is only a fraction of the whole story.

There are reasons why one would like to use VP8:

- It has been there from the start, so its implementation is highly optimized already

- Royalty free, so no need to deal with patents and payments and whatnot. I know there’s FUD around patents in VP8, but for all practical purposes, the industry treats it as free

- It nicely supports simulcast, so quite friendly to video group calling scenarios

There are reasons why one would like to use H.264:

- You already have H.264 equipment and don’t want to transcode – be it cameras, video conferencing gear or the need to broadcast via HLS or RTMP

- You want to support Safari

- You want to leverage hardware based encoding and decoding to increase battery life on your mobile devices

I want to lay out the challenges here, especially in leveraging hardware based encoding in WebRTC H.264 implementations. Before we dive into them, though, there’s one more thing I want to make clear:

You can use a mobile app with VP8 (or H.264) on iOS devices.

The fact that Apple decided NOT to implement VP8 doesn’t bar your own mobile app from supporting it.

WebRTC H.264 Challenges

Before you decide to go for a WebRTC H.264 implementation, you need to take into consideration a few of the challenges associated with it.

I want to start by explaining one thing about video codecs – they come with multiple features, knobs, capabilities, configurations and profiles. These additional doozies are there to improve the final quality of the video, but they aren’t always available. To use them, BOTH the encoder and the decoder need to support them, which is where a lot of the problems you’ll be facing stem from.

#1 – You might not have access to a hardware implementation of H.264

In the past, developers had no access to the H.264 codec on iOS. You could only use it to record a file or play one back, not to stream media in real time. This has changed, and now that’s possible.

But there’s also Android to contend with. And in Android, you’re living in the wild wild west and not the world wide web.

It would be safe to say that all modern Android devices today have a hardware accelerated H.264 encoder and decoder, which is great. But do you have access to them?

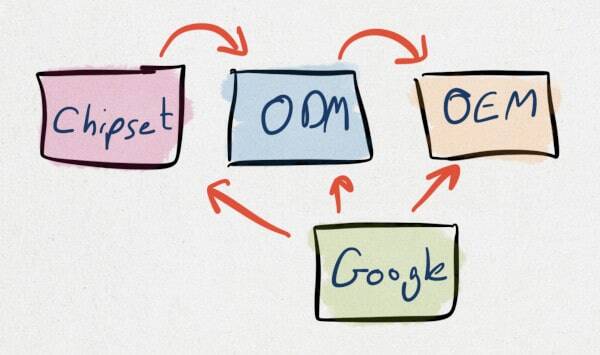

The illustration above shows the value chain of the hardware acceleration. Who’s in charge of exposing that API to you as a developer?

The silicon designer? The silicon manufacturer? The one who built the hardware acceleration component and licensed it to the chipset vendor? Maybe the handset manufacturer? Or is it Google?

The answer is all of them and none of them.

WebRTC is a corner case of a niche of a capability inside the device. No one cares about it enough to make sure it works out of the factory gate. Which is why in some of the devices, you won’t have access to the hardware acceleration for H.264 and will be left to deal with a software implementation.
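One practical way to cope on Android is to decide per device at runtime. The sketch below is hypothetical. It assumes you already have the names of the H.264 (`video/avc`) encoders the platform reports (on Android these come from `MediaCodecList`). It treats names starting with `OMX.google.` or `c2.android.` as Android’s own software codecs, and it uses a made-up allowlist of hardware encoder prefixes that you have tested with WebRTC yourself. The allowlist value is a placeholder, not a vetted list, and `chooseEncoder` is an illustrative name.

```typescript
// Prefixes of Android's own software codecs (not hardware accelerated).
const SOFTWARE_PREFIXES: readonly string[] = ["OMX.google.", "c2.android."];

// Hypothetical, app-maintained allowlist of hardware encoder name prefixes
// that were tested against WebRTC. Placeholder value only.
const TESTED_HARDWARE_PREFIXES: readonly string[] = ["OMX.qcom."];

type EncoderChoice =
  | { kind: "h264-hardware"; name: string }
  | { kind: "h264-software" }
  | { kind: "vp8" };

// Prefer a tested hardware H.264 encoder, then a bundled software H.264
// encoder (for example OpenH264), and finally fall back to VP8.
function chooseEncoder(
  avcEncoderNames: readonly string[],
  haveSoftwareH264: boolean,
): EncoderChoice {
  const hardware = avcEncoderNames.find(
    (name) =>
      !SOFTWARE_PREFIXES.some((p) => name.startsWith(p)) &&
      TESTED_HARDWARE_PREFIXES.some((p) => name.startsWith(p)),
  );
  if (hardware !== undefined) return { kind: "h264-hardware", name: hardware };
  if (haveSoftwareH264) return { kind: "h264-software" };
  return { kind: "vp8" };
}
```

The sketch uses an allowlist instead of a blocklist because a hardware encoder that merely exists is not one you can trust. Anything untested falls through to software.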

Which brings us to the next challenge:

#2 – Software implementations of H.264 encoders might require royalty payments

Since you may end up needing a software implementation of H.264, you might also end up needing to pay royalties for using this codec.

I know there’s this thing called OpenH264. I am not a lawyer, though my understanding is that you can’t really compile it on your own if you want to keep it “open” in the sense of no royalty payments. And you’ll probably need to compile it, or link it statically with your code, for it to work.

This being the case, tread carefully here.

Oh, and if you’re using a 3rd party CPaaS, you might want to ask that vendor if he is taking care of that royalty payment for you – my guess is that he isn’t.

#3 – Simulcast isn’t really supported. At least not everywhere

Simulcast is how most of us do group video calls these days. At least until SVC becomes more widely available.

Simulcast allows devices to send multiple resolutions/bitrates of the same video towards the server. This removes the need for an SFU to transcode media and, at the same time, lets the SFU offer the most suitable experience for each participant without resorting to lowest common denominator strategies.
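In the browser, you request simulcast by passing several `sendEncodings` to `RTCPeerConnection.addTransceiver()`, each with its own `rid` and downscaling factor. The following is a minimal sketch of building that list. The `SimulcastEncoding` interface mirrors a subset of `RTCRtpEncodingParameters`. The bitrate split is an assumption for illustration, not a recommended budget: each lower layer gets a quarter of the bitrate of the one above it, matching a quarter of the pixels. `buildSimulcastEncodings` is a hypothetical name.

```typescript
// Local mirror of the RTCRtpEncodingParameters fields used here.
interface SimulcastEncoding {
  rid: string;
  scaleResolutionDownBy: number;
  maxBitrate: number; // bits per second
}

// Builds `layers` encodings, lowest resolution first (rid "r0").
// Each layer halves width and height relative to the one above it.
// Assumed budget: bitrate scales with pixel count, so each step down
// gets a quarter of the bitrate of the layer above.
function buildSimulcastEncodings(layers: number, topBitrate: number): SimulcastEncoding[] {
  const encodings: SimulcastEncoding[] = [];
  for (let i = layers - 1; i >= 0; i--) {
    const scale = Math.pow(2, i);
    encodings.push({
      rid: `r${layers - 1 - i}`,
      scaleResolutionDownBy: scale,
      maxBitrate: Math.round(topBitrate / (scale * scale)),
    });
  }
  return encodings;
}

// In a browser:
//   pc.addTransceiver(videoTrack, {
//     direction: "sendonly",
//     sendEncodings: buildSimulcastEncodings(3, 1_200_000),
//   });
```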

The problem is that simulcast in H.264 isn’t available yet in any of the web browsers. It is coming to Chrome, but that’s about it for now. And even when it arrives, there’s no guarantee that Apple will be so kind as to add it to Safari.

It is better than nothing, though not as good as VP8 simulcast support today.
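If simulcast matters more to you than H.264, one option is to steer negotiation toward VP8 with `RTCRtpTransceiver.setCodecPreferences()`. That method takes a reordered copy of the capability list. The sketch below only does the reordering. `preferCodec` is a hypothetical helper, and the browser calls in the comment assume `setCodecPreferences` is available in the browsers you target.

```typescript
// Returns a copy of `codecs` with every entry of `preferredMimeType`
// moved to the front. Everything else (other codecs, rtx, red, ulpfec)
// keeps its original relative order, so nothing is dropped.
function preferCodec<T extends { mimeType: string }>(
  codecs: readonly T[],
  preferredMimeType: string,
): T[] {
  const wanted = preferredMimeType.toLowerCase();
  const first = codecs.filter((c) => c.mimeType.toLowerCase() === wanted);
  const rest = codecs.filter((c) => c.mimeType.toLowerCase() !== wanted);
  return [...first, ...rest];
}

// In a browser, before creating the offer:
//   const caps = RTCRtpSender.getCapabilities("video");
//   if (caps) transceiver.setCodecPreferences(preferCodec(caps.codecs, "video/VP8"));
```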

#4 – H.264 hardware implementations aren’t always compatible with WebRTC

Here’s the kicker – I learned this one last month, from a thread in discuss-webrtc – the implementation requirements of H.264 in WebRTC are such that it isn’t always easy to use hardware acceleration even if and when it is available.

Read this from that thread:

Remember to differentiate between the encoder and the decoder.

The Chrome software encoder is OpenH264 – https://github.com/cisco/openh264

Contributions are welcome, but the encoder currently doesn’t support either High or Main (or even full Baseline), according to the README file.

Hardware encoders vary greatly in their capabilities.

Harald Alvestrand from Google offers a few interesting statements here. Let me translate them for you:

- H.264 encoders and decoders are different kinds of pain. You need to solve the problem of each of these separately (more about that later)

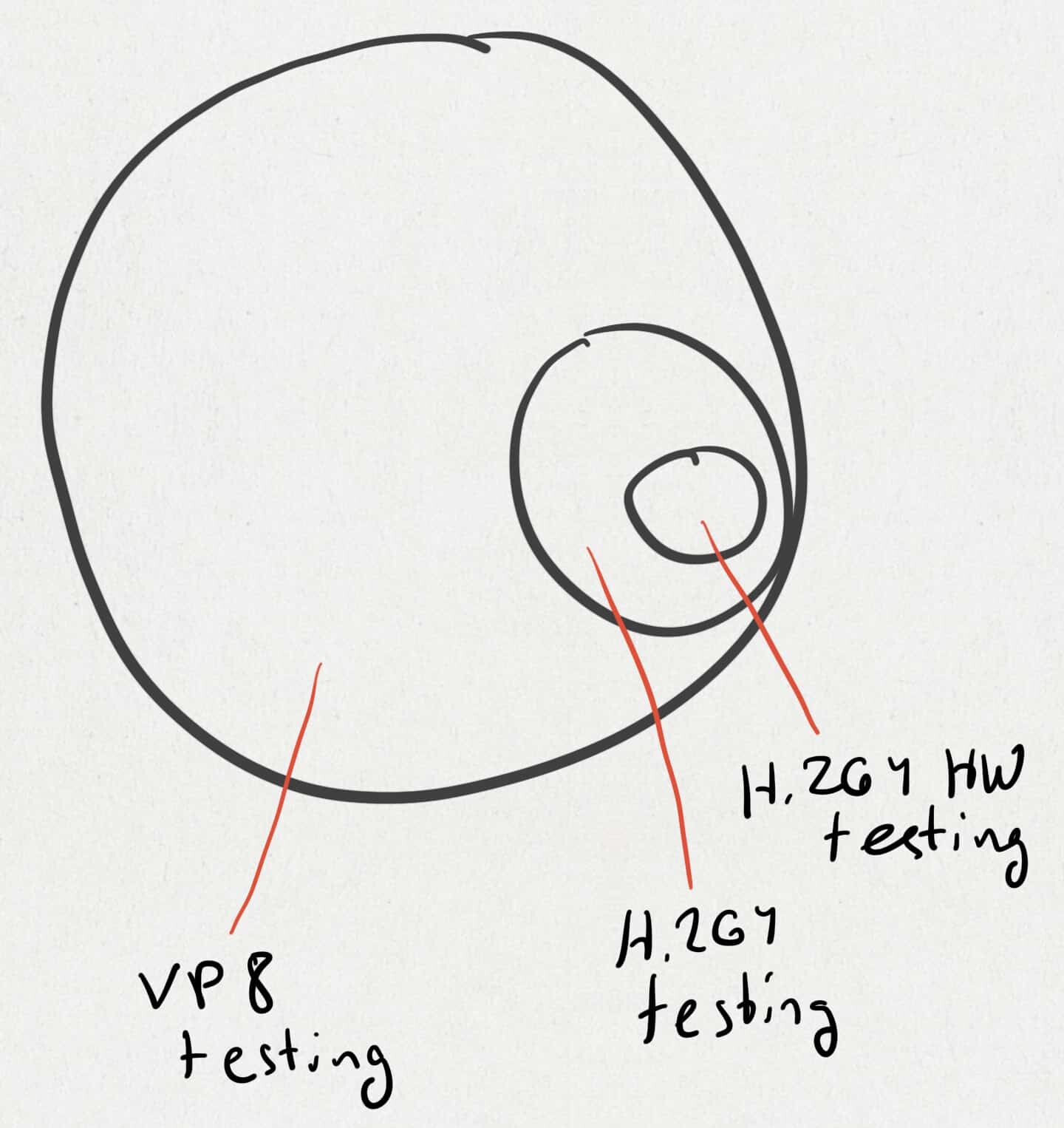

- Chrome’s encoder is based on Cisco’s OpenH264 project, which means this is what Google spend the most time testing against when it looks at WebRTC H.264 implementations. Here’s an illustration of what that means:

- The encoder’s OpenH264 implementation isn’t really High profile or Main profile or even full Baseline profile. It implements something in-between that fits well into real time communications

- And if you decide not to use it and go with a hardware encoder instead, be sure to check what that encoder is capable of. As we said, this is the wild wild west, so even if the encoder is accessible, it is going to be like a box of chocolates: you never know what it’s going to support
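One concrete way to check what an H.264 encoder offers is the `profile-level-id` parameter on the `a=fmtp` line of the SDP, defined in RFC 6184. It consists of six hex digits holding `profile_idc`, the constraint flags (`profile-iop`) and `level_idc`. The parser below is a simplified sketch. It recognizes Baseline, Main and High plus their constrained variants, which WebRTC stacks commonly use, and it ignores special cases such as level 1b. The function names are hypothetical.

```typescript
type H264Profile =
  | "Constrained Baseline"
  | "Baseline"
  | "Main"
  | "High"
  | "Constrained High"
  | "Other";

interface ProfileLevel {
  profile: H264Profile;
  level: number; // level_idc / 10, e.g. 0x1f (31) -> 3.1
}

// Pulls the profile-level-id value out of an fmtp parameter string such as
// "level-asymmetry-allowed=1;packetization-mode=1;profile-level-id=42e01f".
function profileLevelIdFromFmtp(fmtp: string): string | null {
  for (const part of fmtp.split(";")) {
    const [key, value] = part.trim().split("=");
    if (key === "profile-level-id" && value !== undefined) return value;
  }
  return null;
}

// Simplified decoding of the three bytes: profile_idc, profile-iop, level_idc.
function parseProfileLevelId(hex: string): ProfileLevel | null {
  if (!/^[0-9a-fA-F]{6}$/.test(hex)) return null;
  const profileIdc = parseInt(hex.slice(0, 2), 16);
  const iop = parseInt(hex.slice(2, 4), 16); // constraint_set0..5 flags, MSB first
  const levelIdc = parseInt(hex.slice(4, 6), 16);

  let profile: H264Profile;
  if (profileIdc === 0x42) {
    // Baseline with constraint_set1 set is Constrained Baseline.
    profile = (iop & 0x40) !== 0 ? "Constrained Baseline" : "Baseline";
  } else if (profileIdc === 0x4d) {
    // Main with constraint_set0 set is Constrained Baseline.
    profile = (iop & 0x80) !== 0 ? "Constrained Baseline" : "Main";
  } else if (profileIdc === 0x64) {
    // High with constraint_set4 and constraint_set5 set is Constrained High.
    profile = (iop & 0x0c) === 0x0c ? "Constrained High" : "High";
  } else {
    profile = "Other";
  }
  return { profile, level: levelIdc / 10 };
}
```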

And then comes this nice reply from the good guys at Fuze:

@Harald: we’ve actually been facing issues related to the different profiles support with OpenH264 and the hardware encoders. Wouldn’t it make more sense for Chrome to only offer profiles supported by both? Here’s the bad corner case we hit: we were accidentally picking a profile only supported by the hardware encoder on Mac. As a result, when Chrome detected CPU issues for instance, it would try to reduce quality to a level not supported by the hardware encoder which actually led to a fallback to the software encoder… which didn’t support the profile. There didn’t seem to be a good way to handle this scenario as the other side would just stop receiving anything.

If I may translate this one as well for your entertainment:

- You pick a profile for the encoder which might not be available in the decoder. And Chrome doesn’t seem to be doing the matchmaking here (not sure if that’s true, and whether Chrome could even do that if it really wanted to)

- The Mac’s hardware H.264 encoder, like any other Apple product, has its very own configuration, which only it supports. But when Chrome tries to reduce quality to a level the hardware encoder can’t handle, it falls back to the software encoder, which doesn’t support that configuration at all, and the other side stops receiving video

- This is one edge case, but there are probably more like it lurking around
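Fuze’s suggestion was to offer only the profiles that every encoder in the fallback chain supports. That can be sketched as a plain set intersection. The inputs are hypothetical: sets of profile names you would have to gather yourself for each component. `safeProfiles` is an illustrative name, not a Chrome API.

```typescript
// Keeps only the profiles, in preference order, that the hardware encoder,
// the software fallback encoder and the remote decoder all support. Offering
// anything else risks the failure described above: a mid-call fallback to
// an encoder that cannot produce the negotiated profile.
function safeProfiles(
  preferred: readonly string[],
  hardwareEncoder: ReadonlySet<string>,
  softwareEncoder: ReadonlySet<string>,
  remoteDecoder: ReadonlySet<string>,
): string[] {
  return preferred.filter(
    (p) => hardwareEncoder.has(p) && softwareEncoder.has(p) && remoteDecoder.has(p),
  );
}
```

Consider a hardware encoder that handles High, Main and Constrained Baseline, a software fallback that only handles Constrained Baseline, and a remote decoder that accepts High and Constrained Baseline. With those inputs, only Constrained Baseline survives. The price is giving up High profile even when the hardware encoder could have used it.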

So. Got hardware encoder and/or decoder. Might not be able to use it.

#5 – For now, H.264 video quality is… lower than VP8

That implementation of H.264 in WebRTC? It isn’t as good as the VP8 one. At least not in Chrome.

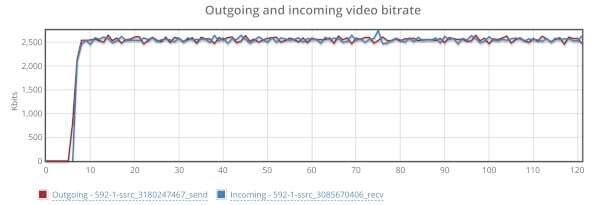

I’ve taken testRTC for a spin on this one, running AppRTC with it. Once with VP8 and another time with H.264. Here’s what I got:

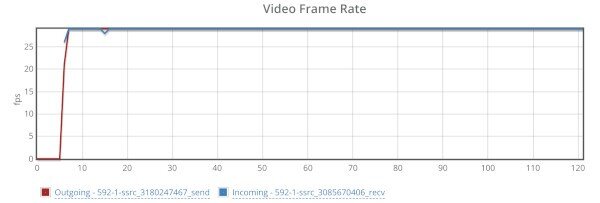

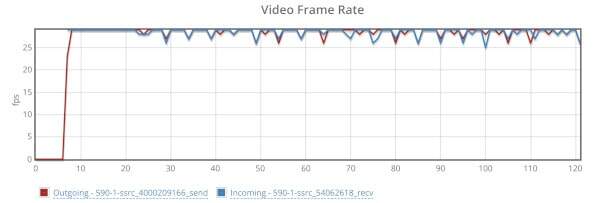

VP8

Bitrate:

Framerate:

H.264

Bitrate:

Framerate:

This is for the same scenario running on the same machines encoding the same raw video. The outgoing bitrate variance for VP8 is 0.115 while it is 0.157 for H.264 (the lower the better). Not such a big difference. The framerate of H.264 seems to be somewhat lower at times.
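The post doesn’t say how testRTC computes its variance figure. The following only illustrates one scale-free way to compare bitrate stability between two runs: the coefficient of variation (standard deviation divided by mean) of bitrate samples, where lower means steadier. The samples could come from polling `RTCPeerConnection.getStats()` and differencing `bytesSent` on the `outbound-rtp` report. That collection step is not shown, and `coefficientOfVariation` is a hypothetical name.

```typescript
// Coefficient of variation (population standard deviation / mean) of
// bitrate samples. An assumed stand-in for a "bitrate variance" metric;
// it is not testRTC's actual formula.
function coefficientOfVariation(samples: readonly number[]): number {
  if (samples.length === 0) throw new Error("no samples");
  const mean = samples.reduce((a, b) => a + b, 0) / samples.length;
  if (mean === 0) throw new Error("mean is zero");
  const variance =
    samples.reduce((acc, x) => acc + (x - mean) ** 2, 0) / samples.length;
  return Math.sqrt(variance) / mean;
}
```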

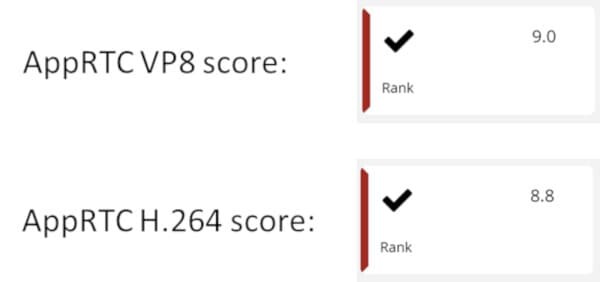

I tried out our new scoring system in testRTC that is available in beta on both these test runs, and got these numbers:

The 9.0 score was given to the VP8 test run while H.264 got an 8.8 score.

There’s a bit of a difference in how stable VP8’s implementation is versus the H.264 one. It isn’t that Cisco’s H.264 code is bad. It might just be that the way it got integrated into WebRTC isn’t as optimized as VP8’s integration.

Then there’s this from the same discuss-webrtc thread:

We tried h264 baseline at 6mbps. The problem we ran into is the bitrate drastically jumped all over the place.

I am not sure if this relates to the fact that it is H.264 or just to trying to use WebRTC at such high bitrates, or the machine or something else entirely. But the encoder here is suspect as well.

I also have a feeling that Google’s own telemetry and stats about the video codecs being used will point to VP8 having a larger portion of ongoing WebRTC sessions.

#6 – The future lies in AV1

After VP8 and H.264 come VP9 and HEVC/H.265, respectively.

A WebRTC video codec war has started recently between HEVC and AV1. I am siding with AV1 here: the Alliance for Open Media behind AV1 includes as its founding members Apple, Google, Microsoft and Mozilla (who all happen to be the companies behind the major web browsers).

The best trajectory to video codecs in WebRTC will look something like this:

Why doesn’t this happen in VP8?

It does. To some extent. But a lot less.

The challenges in VP8 are limited as it is mostly software based, with a single main implementation to baseline against – the one coming from Google directly. Which happens to be the one used by Chrome’s WebRTC as well.

Since everyone works against the same codebase, using the same bitstreams and software to test against, you don’t see the same set of headaches.

There’s also the limitation of available hardware acceleration for VP8, which ends up being an advantage here – hardware acceleration is hard to upgrade. Software is easy. Especially if it gets automatically upgraded every 6-8 weeks like Chrome does.

Hardware beats software at speed and performance. But software beats hardware on flexibility and agility. Every. Day. Of. The. Week.

What’s Next?

The current situation isn’t a healthy one, but it is all we’ve got to work with.

I am not advocating against H.264, just against using it blindly.

How the future will unfold depends greatly on the progress made in AV1 as well as the steps Apple will be taking with WebRTC and their decisions about the video codecs to incorporate into WebKit, Safari and the iOS ecosystem.

Whatever you end up deciding to go with, make sure you do it with your eyes wide open.

Looking to learn more about video codecs, but also WebRTC in general? Want to upskill in WebRTC and become an expert? I’ve got a set of courses that will suit your needs – some of them are even free

H.264 codec use certainly comes with its challenges, but it absolutely has its place in WebRTC. When interfacing with video phones, the only available codec is H.264. I think a broader investigation into the performance characteristics of the browsers’ software implementations is warranted. We have seen Chrome’s browser-embedded H.264 software encoder/decoder begin exhibiting poor performance, or even losing entire frames, despite the host machine having more than adequate hardware. Chrome only officially supports a fraction of hardware accelerated platforms, and currently I believe it is restricted purely to Nvidia chipsets. Our efforts have been to uncover these H.264 related video problems and to identify solutions. It is a juggling act between correctly configured buffers, SDP video options, the host machine’s capabilities, and the browser’s codec implementation itself. It is a complex and delicate dance that can result in pristine video performance or, in the more likely scenario, unusable video. Many factors contribute to this, and I would love to have a much larger discussion on the topic to bring more attention to the codec’s implementation, what can currently be done, and what needs to be done in the future to address compatibility and quality concerns.

Thanks John

H.264 definitely has its place in WebRTC. If somehow that part wasn’t understood then sorry for that 🙂

The thing is, that if you want to use H.264 in WebRTC today, then you better have a very good reason for doing it, and you better know what you’re doing and how to make it work.

Nice post Tsahi. The thing I found more interesting about H.264 is using it for 1:1 iOS calls. In that case you don’t need to worry about Android and OpenH264 and you get access to High Profile.

The fact that it is (supposed to be) used by Duo in that specific setup makes me feel it is worth the effort and that it is tested/stable enough in that setup. Still, it is not trivial to use, and the battery savings may not be as big as they were supposed to be.

Just my 2c

As with the case of John, your 2c are worth their weight in pure gold 😉

Mind you that the VP8 realtime hardware requirements haven’t really changed since 2012:

https://www.webmproject.org/hardware/rtc-coding-requirements/

The page doesn’t say “simulcast” anywhere but the requirements ensure the encoder and decoder are capable of decoding what Chrome produces with simulcast including temporal scalability.

and VP8 hardware acceleration is around the corner for Intel’s Kaby Lake processors: https://groups.google.com/a/chromium.org/forum/?utm_medium=email&utm_source=footer#!msg/blink-dev/vbYCDv5ve5w/pq-uV_QRAwAJ

Thanks Fippo 🙂

I think VP8 is a lot simpler. If you add it to your hardware, there’s only one reason for you to do so (or a main one) – WebRTC. And the way you test WebRTC today is by running it against Chrome.

H.264 is the Swiss Army knife of the current video codec generation, which means it gets pitted against many different use cases where WebRTC is but a minor niche.

As far as I understand, codec hardware acceleration today is mainly accelerated components, algorithms and methods rather than complete codecs. So your GPU could do efficient transforms, motion vector estimation, etc., which can then be used to accelerate any codec (VP8/9 as well as H.).

With this methodology you also get the advantage of being able to update the CPU codec logic without replacing the accelerating hardware and without falling back to software-only processing.

Arik,

Thanks. Do note you are making the assumption all devices everywhere have GPU that is accessible to you with all the building blocks needed to get that codec implemented and that implementation is uniform in its feature set across all devices. Which is where things fall apart, even without the fact that many of these devices don’t accelerate video coding using a GPU in the first place.

Apparently the Edge team also doesn’t understand the meaning of “mandatory”. The underdog of WebRTC: Data Channels! 😉

How do these pieces of video support software and specifications for VP8, H.264 and WebRTC relate to the SpectrumTV website in Safari (it currently works and does not use VP8) and in Firefox (it has not worked since v62.0, ~9/5/18, and uses VP8)?

Safari is still in its infancy when it comes to WebRTC, so things tend to break there more than in other places.

Who fixes stuff when things break? Should it be Safari or the other browser vendors? That’s not easy to answer.

As a developer, the question then becomes who do you care about more at the moment? Safari users or Firefox/other users?

Assuming iOS (not inside an app) is of high priority for you, then you’ll be opting for H.264 and make do with whatever quirks and limits Safari imposes on you. Otherwise, I’d just focus on VP8 if I were you.