Cloud Communication APIs.

[If you are new around here, then you should know I've been writing about WebRTC lately. You can skim through the WebRTC post series or just read what WebRTC is all about.]

API platforms fascinate me. Especially communication API platforms. You can’t get any bigger than Twilio these days. This year, they’ve announced and launched a slew of new capabilities - task routing, video calling, IP messaging and a lot of enhancements to their existing services.

I’ve been wanting to land an interview with Twilio for quite some time. I was happy when Al Cook, Director of Product Marketing at Twilio, obliged. Here’s what he had to say.

What is Twilio all about?

Twilio is a cloud communications platform. We provide programmable building blocks that developers use to embed communications into their mobile and web apps - from voice, messaging, and video to authentication. So when you are communicating with your Uber driver via text or anonymous phone call, calling Hulu customer support, or shopping via text with the help of your Nordstrom personal shopper, that’s Twilio. Or to give a WebRTC example - when you call a customer support team powered by Zendesk, the agent is talking to you over a WebRTC connection powered by Twilio. We have over 700,000 developers generating over 50 billion API transactions a year. In WebRTC we’ve powered over half a billion minutes of WebRTC to date.

Twilio Video went to public beta today. You’ve been in private beta for a while. How is it going? What have you learned?

That’s right, the private beta started in May and we collaborated with developers to build the right solution, with the right developer experience. Video is now in public beta, so anyone can sign up for immediate access to our WebRTC-powered web and mobile SDKs, and the cloud-based signaling/media services that power them.

During the private beta we onboarded several thousand developers from our base. This group size was critical for gaining useful feedback and insights, while still allowing meaningful interactions.

Interesting. Did you check what users do during the private beta?

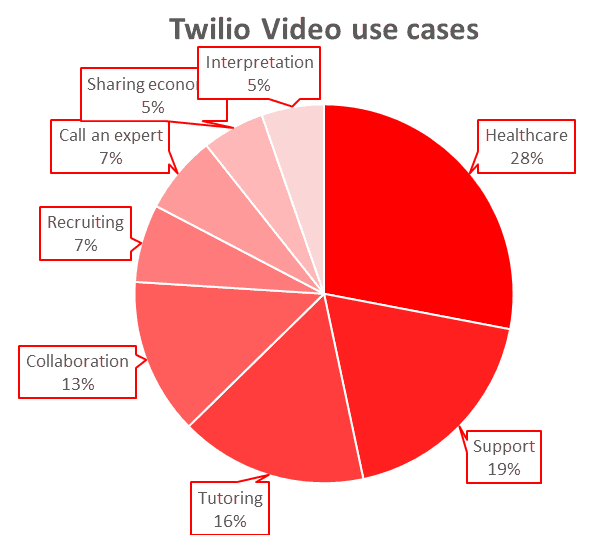

During the private beta onboarding, we asked participants to tell us about their use cases. I read every single entry and categorized the use cases. The top categories break out as follows:

- 21% healthcare

- 14% support (in-app enterprise customer support, visual customer support)

- 12% tutoring

- 10% collaboration

- 5% recruiting

- 5% call an expert

- 4% marketplace / sharing economy

- 4% interpretation services (including assistive deaf/blind services)

Two of the big areas we spent considerable time refining during the beta were improving the mobile media stack performance, and building a signaling model that allows us to continue to add new capabilities for multi-party, multi-endpoint IP and carrier communications.

I have to ask. These developers in the private beta - how many of them were existing Twilio developers who just added video versus new ones?

It’s a mix. A lot of folks are with us because they want multiple channels of communication, and so video is a natural extension for them. But we’ve also had a lot of people who were new to Twilio, and excited to have a better alternative than their current video solution.

How is your video offering different from other alternatives that are out there today?

We believe this solution is not available anywhere else. Here’s some insight on the areas where we invested the most time to ensure we were building the right solution for needs that had not been addressed.

- Without this, each communication capability would either have to be built from scratch or individually purchased and pieced together, if possible. And that’s just the beginning. Our SDKs are designed as a platform to add more communication channels over time.

- We designed a conversation model that scales in volume, use case and breadth of different endpoint types. Conversations can be either call-based or room-based; start peer-to-peer and move to network-mixed; and interoperate with SIP endpoints and carrier endpoints. Our signaling model is built to fulfill this vision. Some features are enabled today; others are coming. The important thing is we’ve laid the foundation for one platform that can power all communications needs.

- Our pricing makes it accessible to everyone and lets it scale to the very largest deployments. Most video services require per-user fees, which are expensive when starting up and scaling. Twilio Video is aimed at infrastructure-level pricing where it’s faster and cheaper than building and operating your own service at any scale. And users get the benefit of our ongoing work to deliver high quality and resiliency.

What excites you about working in WebRTC?

To me, the most exciting aspect of WebRTC - and really programmable real-time communications more generally - is that it stands to fundamentally change the way we communicate. Through every iteration of the phone, the basic interaction hasn’t really changed. Historically, there has been little-to-no ability to gain immediate context of why the caller is calling, what they were doing beforehand, and what they may need. Embedding communications into applications allows for far more meaningful and relevant communication. Imagine calling your car insurance company from your car insurance app following an accident, and instantly the call is routed with the right prioritization based on the GPS of your phone to an agent who speaks your preferred language. The app enables you to instantly share a video feed of the accident scene and collaboratively annotate the video using the app. All this while the agent captures the information in their record system to avoid a separate visit from a damage appraiser.

We believe every single app will have communications built into it. Every. Single. App.

Where do you see WebRTC going in 2-5 years?

WebRTC/ORTC is moving at such a velocity that 5 years out is pretty hard to forecast. But we believe:

- In this timeframe, browser support should be ubiquitous. We’ve seen Microsoft Edge get there already (barring video codec support), and we know Apple is working on it for Safari.

- Ubiquitous doesn’t mean standardized or non-contentious. We expect to continue to see differences in implementation of particular features that the developer will either have to keep track of and deal with directly, or use an SDK such as Twilio Video.

- Media quality requires continuous improvement. We’ll continue to make it better and more resilient to bad networks. However, in this timeframe, there will remain some networks that are not viable for real-time video.

- Mobile in-app usage will be the most important use case for consumers. This means that most consumers won’t be using Google’s latest WebRTC engine off the shelf, but rather a version that has been packaged - and often modified and enhanced - along the way.

- B2C Communications will focus on high-value, contextual interactions. Low-value B2C interactions will be increasingly handled through self-service channels. WebRTC will be one of the core technologies powering the high value segment.

If you had one piece of advice for those thinking of adopting WebRTC, what would it be?

Experiment - and think about how you scale the experiments that find success. It’s relatively simple to get a basic WebRTC call working. But plan for what happens if your new service finds success. Consider how you will scale, maintain and operate your TURN media relay. How will you collect and analyze voice quality diagnostics from all your endpoints? How will you interoperate with SIP networks and PSTN networks?

Given the opportunity, what would you change in WebRTC?

Some improvements have been addressed by ORTC. We’re big fans of these improvements and we look forward to the standards combining.

We would like more control over the media stack in a browser environment, if the browser makers could figure out a secure way to enable this. We spend a considerable amount of time testing and measuring voice quality in impaired networks. In fact, we open-sourced the testing tool we use. On the mobile side, we operate the media stack and we do a lot of fine tuning to constantly improve the media quality. This includes taking into account the performance of different networks and hardware configurations, whether that means adding codecs to use in particular scenarios, adding Forward Error Correction (FEC) techniques, or other areas we are working on. But when our endpoints call a browser-based endpoint, they have to fall back to the default media stack and it is not possible to layer on additional media enhancements, which is why we’d like more control in the browser environment.

In the more immediate time frame, the subject of handling QoS in WebRTC is tricky, and far from standardized. Plus, QoS behavior, like with much of WebRTC, tends to require significant reverse engineering to establish the exact behavior in different scenarios. We’re happy we can provide this capability on behalf of our customers - but we’d like more control over the experience.

What’s next for Twilio?

We’ve talked about a few of them - interoperability with SIP endpoints and PSTN endpoints for example. Of course we’re also working on SFU functionality for large scale video conferences - that should be no surprise to our customers. But we want to provide this capability in such a way that a developer doesn’t have to choose between either peer-to-peer routing or SFU mixed. The solution should intelligently move from one to another as the call topology requires. We also want a solution that scales beyond any existing solutions. And then, well... that’s enough to keep us busy for now, Tsahi.
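To make the peer-to-peer versus SFU trade-off concrete, here is a minimal sketch of the arithmetic behind it. It is an illustration only and not Twilio's implementation: the function names and the `max_uplinks` threshold are hypothetical. In a full mesh every participant uploads one stream to each other participant, while with an SFU each participant uploads once and the server forwards the rest.

```python
def mesh_streams_per_peer(participants: int) -> tuple[int, int]:
    """Full mesh: each peer sends to and receives from every other peer.

    Returns (uplink_streams, downlink_streams) for a single peer.
    """
    others = max(participants - 1, 0)
    return others, others


def sfu_streams_per_peer(participants: int) -> tuple[int, int]:
    """SFU: each peer uploads one stream; the server forwards the others' streams.

    Returns (uplink_streams, downlink_streams) for a single peer.
    """
    others = max(participants - 1, 0)
    uplinks = 1 if participants > 0 else 0
    return uplinks, others


def choose_topology(participants: int, max_uplinks: int = 2) -> str:
    """Stay peer-to-peer while the mesh uplink count fits within max_uplinks.

    max_uplinks is a hypothetical threshold chosen for illustration; a real
    service would likely also weigh bandwidth, device type, and network quality.
    """
    uplinks, _ = mesh_streams_per_peer(participants)
    return "p2p" if uplinks <= max_uplinks else "sfu"


if __name__ == "__main__":
    for n in (2, 3, 4, 8):
        print(n, choose_topology(n), mesh_streams_per_peer(n), sfu_streams_per_peer(n))
```

The point of the sketch is that uplink cost grows with every added participant in a mesh but stays constant with an SFU, which is why moving between the two as a call grows is attractive.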

-

The interviews are intended to give different viewpoints than my own – you can read more WebRTC interviews.