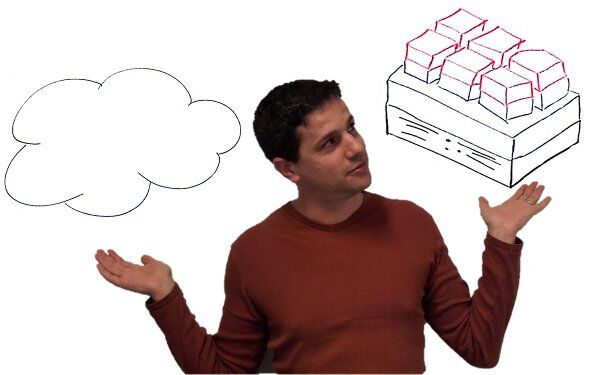

I need your help with a nagging question about the difference between cloud and virtualization.

There are two large hypes today in computing:

- Virtualization – essentially, the ability to treat machines as virtual entities and run their workloads on shared physical hardware

- Cloud – can’t even begin to give a specific definition that everyone will agree with…

I had this notion that a prerequisite for cloud is virtualization. It seems a reasonable step: if you want to scale things horizontally, and be able to load balance a large operation, then it is a lot easier to do by virtualizing everything.

And then people start coming up with nagging examples like Google’s services, Facebook and Twitter. All are undoubtedly doing cloud (the thing I refrained from defining), but none use virtualization.

It seems like cloud is essential in almost any type of service (not this blog site mind you – at least not until you bring all your friends, family, acquaintances, neighbors and colleagues to read it on a daily basis).

Anyway, here are some questions I have – I am relying on your collective experience – especially those coming from companies that deploy services (with or without WebRTC):

- How exactly are cloud and virtualization different? Where would you put the single most distinct difference?

- When would you use cloud technologies but skip virtualization?

- In which types of companies would it make sense to use cloud without virtualization? Is it small companies? Large ones? Technology focused companies? Something else?

- Would you say that today any service should run in a cloud that has virtualization unless proven otherwise? Or is it the other way around?

Keep the answers coming – I am appreciative of them already.

Cloud is one of those hyped-up words that has completely lost its meaning.

HTML5 has the same problem. HTML5 is actually a particular set of standards, but it now basically means “all new web standards”.

This seems to be normal in the tech industry: certain words lose their meaning. Maybe it is true in other fields as well. Ajax for web applications was also such a word.

Back to cloud.

Cloud computing used to be a service you could buy which would scale with your needs. If your website became really popular, you would pay for more service from, for example, Amazon, euh.. AWS.

If the week after you don’t need the extra service anymore, you don’t need to pay for it anymore.

So it is: you pay per use.
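The pay-per-use idea can be put in numbers with a rough sketch. The rate and the instance counts below are made up for illustration and are not any real provider's pricing; amounts are kept in whole cents to avoid rounding surprises.

```python
HOURS_PER_WEEK = 7 * 24

# Hypothetical price: 10 cents per instance-hour (not a real provider's rate).
RATE_CENTS_PER_INSTANCE_HOUR = 10


def weekly_cost_cents(avg_instances, rate_cents=RATE_CENTS_PER_INSTANCE_HOUR):
    """Cost of one week, billed only for the instance-hours actually used."""
    return avg_instances * HOURS_PER_WEEK * rate_cents


# A quiet week, a week where the site got popular, then back to normal.
usage_by_week = {"quiet": 2, "popular": 10, "back to normal": 2}

for label, instances in usage_by_week.items():
    cents = weekly_cost_cents(instances)
    print(f"{label}: {instances} instances, {cents / 100:.2f} per week")
```

The bill follows the usage up during the popular week and back down afterwards, which is the whole point of paying per use.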

And supposedly the cloud would give you unlimited scalability, because if you need it you can get it. Obviously if you can’t pay for it, you still can’t get it. 🙂

Virtualization is just an implementation detail for cloud computing. Just think of something like this:

http://techcrunch.com/2012/04/04/canonical-metal-as-a-service-not-quite-as-cool-as-it-sounds/

Is cloud computing the same as the cloud? I guess cloud computing is Infrastructure as a Service (IaaS)? But you also have Platform-aaS and Software-aaS and other things.

I guess cloud is a ‘Something as a service’ which you pay for per use and which you can quit anytime you want.

I don’t see how Twitter or Facebook are cloud in the original sense. And when someone asks an iPhone user “do you know where that information is stored?” and the answer is “Yes, in the cloud”, that is probably not the original sense either. It just is a hyped up word.

If the iPhone user is paying some provider to keep the data, and is paying per month or per use, then yes, that might be called cloud I guess?

Maybe a lot of people also think of cloud as something which is “always on”, always available, always connected. If the hardware of one machine at Amazon fails, the website software running on that machine is just started on another machine.

If you say Facebook has a lot of servers and is always available, even when you don’t want it 😉

Then maybe some people also call that cloud ?

But pay per use does not completely fit the bill either. Some people have a ‘private cloud’: larger companies with a lot of servers running applications or websites that temporarily need more resources. So elasticity and flexibility also apply to cloud.

But if you call every Internet enabled thing cloud, then that is just silly. Then all it is is a hyped up word.

So we have: elasticity/flexibility, always on and pay per use. And a distinction between cloud computing and the general word cloud.

I hope that helps 🙂

I forgot one thing

Public cloud, like the offerings from Amazon, DreamHost, HP, Rackspace and others, and private cloud (for the larger company that has many services competing for resources) also have an API (and possibly tooling or libraries) for automation.

So the user can easily roll out new systems and dispose of unused ones on demand, possibly automated.
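As a rough illustration of what that automation looks like, here is a minimal sketch. `FakeCloudAPI`, `create_instance`, `destroy_instance` and `scale_to` are hypothetical names for an in-memory stand-in, not any real provider's SDK; a real API would make network calls and take image, size and region parameters.

```python
import itertools


class FakeCloudAPI:
    """In-memory stand-in for a provider API (hypothetical, not a real SDK)."""

    def __init__(self):
        self._ids = itertools.count(1)
        self.instances = []  # ids of running instances, oldest first

    def create_instance(self):
        instance_id = f"vm-{next(self._ids)}"
        self.instances.append(instance_id)
        return instance_id

    def destroy_instance(self, instance_id):
        self.instances.remove(instance_id)


def scale_to(api, desired):
    """Create or destroy instances until exactly `desired` are running."""
    while len(api.instances) < desired:
        api.create_instance()
    while len(api.instances) > desired:
        # Dispose of the newest instance first.
        api.destroy_instance(api.instances[-1])
    return len(api.instances)


api = FakeCloudAPI()
scale_to(api, 5)  # traffic spike: roll out systems
scale_to(api, 2)  # spike is over: dispose of the unused ones
print(api.instances)
```

A script or monitoring job calling something like `scale_to` is what turns "on demand" into "automated".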

Lennie,

Thanks for the comments. I am more interested in the technical part here: what decisions drive people to opt for virtualization, and, when they do cloud without virtualization, what they mean by that.

It’s a matter of resources: you have a steady stream of users, you know you pretty much always need a lot of hardware to run your shop, AND you know how to manage dedicated servers (like automatic failover).

In that case it is cheaper to go with dedicated servers and there is less overhead.

The other reason, as someone mentioned below, is that virtualisation is usually shared hosting (you can also get contracts where you pay to have only one virtual server running on the physical machine).

Shared hosting can add (lots of) latency, so if your application is latency sensitive, you might want to look for providers which deliver that specific product. I’m thinking for example of choosing http://joyent.com/ over Amazon.

But then virtualization can be used to gain characteristics like migration of a process from one physical machine to another on the fly, fault tolerance to some extent, reuse of machines of my own complex service by bunching several virtual machines on a single physical one when I don’t need all its resources… it is not only about shared hosting.

HA and migrating users from one system to another to allow for maintenance of a server are totally different topics from virtualisation.

Virtualisation just adds other options. As virtualisation is deployed most of the time, when a machine fails your instance is started on another machine. That only happens if the whole machine dies, of course: when a single component fails it might not be apparent, and on a virtualized system it might be even harder to detect.

I work at a hosting provider and the things that need to stay up are all physical boxes with, for example, virtual IP addresses. When one box goes down, another system takes over that IP address, and you can do the same by hand for maintenance.

If you need more guarantees, you need systems in front of it which do a load-balancing-like task and fail over to other systems when one fails.
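The front-end failover idea can be sketched in a few lines. This is only an illustration: `FailoverPool` and its methods are hypothetical names, the backends are plain strings, and health is set by hand, whereas a real load balancer would probe the backends over the network.

```python
class FailoverPool:
    """Round-robin over backends, skipping any that are marked down."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.down = set()
        self._next = 0

    def mark_down(self, backend):
        self.down.add(backend)

    def mark_up(self, backend):
        self.down.discard(backend)

    def pick(self):
        # Try each backend at most once per call, starting where we left off.
        for _ in range(len(self.backends)):
            backend = self.backends[self._next]
            self._next = (self._next + 1) % len(self.backends)
            if backend not in self.down:
                return backend
        raise RuntimeError("no healthy backend available")


pool = FailoverPool(["box-a", "box-b", "box-c"])
pool.mark_down("box-b")  # box-b failed, or was taken out by hand for maintenance
print([pool.pick() for _ in range(4)])
```

Marking a box down by hand is the same move as the manual takeover for maintenance: traffic simply goes to the remaining systems.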

It also depends on the application and whether it can easily scale. With simple webservers, if one fails you can just point users at a different one; usually only a database or session information needs to be shared between webservers.

But something like a database server for example which is just one big bubble of state, probably needs replication and that is a whole different can of worms again.

Tsahi, you’re one of the smartest people on Earth, so I know you’re up to something… Yet I cannot resist playing along! Here’s my answer.

Most people don’t need to know about virtualization, it’s an implementation detail. But almost everyone knows about “Cloud”.

Any Tom, Dick & Harry with a garage full of poorly stacked 486’s (remember the Turbo button?), a mess of wires, overloaded power circuits and a garden hose for a sprinkler system, can now sell a hosted service by marketing it as “Cloud”, because people always conjure up images of state of the art facilities when they hear the word.

In that sense, that single word, “Cloud”, has become one of the most successful, most global, most ubiquitous luxury brand names in history, on par with Ferrari, Rolex, Disney and Starbucks.

Stephane,

Thanks for the kind words – I hope to live up to them moving forward 🙂

Assume I want to deploy a service – doesn’t really matter what. And I want to think large-scale-money-not-an-object thingy. I can go and deploy it on hardware I buy myself, and then either virtualize or “cloudify”, I can outsource parts of it to the likes of Amazon or Joyent, I can PaaS, SaaS or IaaS the hell out of it.

The main debate I have with myself (nothing up my sleeve by the way – at least not that I know of) is where does virtualization fit in and what would “cloud” mean?

Hi Tsahi, not sure if this is what you are after, but from our (AddLive.com’s) experience virtualized machines are not ideal for RTC. They suffer from ‘bad neighbor’ syndrome.

We have found the internal latency within virtualized EC2 to be as high as 7 seconds. Although this is infrequent, real-time communication will totally break down when it happens, as we typically want the total round trip time to be under 300ms.

However, virtualization does allow easy scaling during unexpected peak times. So our solution is to use non-virtualized (or ‘cloud’) boxes for the majority of the data flow, with a virtualized layer to handle unexpected traffic increases.

Hope that helps!
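The split described in this comment, a fixed non-virtualized base with a virtualized layer for peaks, can be sketched as simple capacity arithmetic. All numbers and names here (`plan_capacity`, the session counts, the per-VM capacity) are assumptions for illustration and do not come from AddLive.

```python
import math


def plan_capacity(sessions, dedicated_capacity, sessions_per_vm):
    """Fill the dedicated boxes first and send only the overflow to VMs.

    Returns (sessions on dedicated boxes, overflow sessions, VMs to start).
    """
    on_dedicated = min(sessions, dedicated_capacity)
    overflow = sessions - on_dedicated
    burst_vms = math.ceil(overflow / sessions_per_vm)
    return on_dedicated, overflow, burst_vms


# Hypothetical figures: dedicated boxes hold 1000 sessions, each VM holds 50.
print(plan_capacity(800, 1000, 50))   # normal day: everything on dedicated boxes
print(plan_capacity(1230, 1000, 50))  # unexpected peak: overflow goes to VMs
```

Most traffic stays on hardware with predictable latency, and only the unexpected excess is exposed to the ‘bad neighbor’ risk.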

Yap,

That’s the kind of input I am after – thanks a bunch!

One question – when you use non-virtualized today:

* Have you built that solution on your own?

* How are you managing it?

* What makes you refer to it as ‘cloud’?

* Have you tried virtualization on it when you control everything – network, machine, OS, etc?

Are you at WebRTC expo? If you are, pop by our stand, where you can have a chat with Ted, our CTO.

We are still researching and are continuously developing our infrastructure so we could also keep you up to date on any improvements if you like.

I struggled to put some light on this last year. We even had a VUC session where we considered “hosted” vs “Cloud” and the role of virtualization in each.

Virtualization is often thought to be when large scale hardware is shared by multiple instances of an OS and client processes, all protected from each other. That’s scaling downward. It’s easy to understand the role of this sort of thing in web hosting, etc.

I’m told that virtualization also scales upward. That is, multiple hardware systems servicing a singular process. I prefer to think of this in terms of application architecture. It’s certainly easy to understand a cluster of servers acting as a single large database or web host.

A lot of this presumes that the processes involved are not dependent upon specific hardware. Add the requirement for something more unique, like TDM interfaces, DSP or GPU-based accelerators, and the application is now more hardware dependent, which may change how the application must be architected.

IMHO, “hosted” is when the resource doesn’t exist on my network but I can know with certainty that it runs on specific hardware at a specific location (or locations). I know that I can call someone and have them reboot a box to restart things if required. In my realm “hosted” processes often include things that require dedicated, specialty hardware of some sort.

“Cloud” is less deterministic about the identity and location of the physical host, or its specific characteristics. A well architected cloud application may involve many resources at many locations with provisions for fault tolerance between any of its various aspects.

A less well-considered cloud app may be run by a small start-up in one AWS zone for cost reasons, and be subject to the kind of outages that have often made the news.