UPDATE: The OpenVRI site has been closed. Nicholas ended up working for a larger VRS vendor.

One person's road to changing the VRS market.

I had the opportunity to learn a bit about the VRS market in a previous life. VRS stands for "Video Relay Service", a market for the hearing impaired, mainly in the US, where deaf people can receive sign language interpretation services to make their daily calls to interact with friends, family and corporations.

It is expensive to operate and also to use on a daily basis. As with any other video-based calling service, WebRTC can fit nicely into it…

When I first saw OpenVRI (a free VRI solution), it got me interested in the man behind it: Nicholas Buchanan, who single-handedly developed this service for his own use and for the benefit of other people who require sign language interpretation for daily calls.

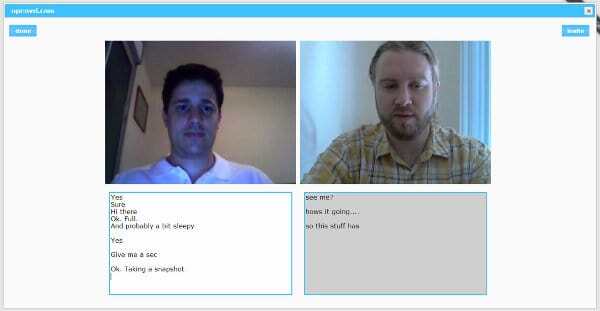

I had to interview him, and learn more about the person and the reasons for his actions than about the technical aspects of this service. Here's the interview.

What is OpenVRI all about?

For years solutions have come and gone, none too successful because of the challenges in setting up the right environment. OpenVRI utilizes WebRTC as a test of the latest of solutions, hoping it will finally solve the difficulties that have hindered many deaf customers and sign interpreting services from using VRI. VRI is what we like to see: Video Remote Interpreting at the lowest cost possible. I have seen numerous solutions from Polycom and other big companies with standalone products, but they are all pricey and run counter to what the local interpreting services need and can afford.

Having an interpreter on site is costly, and VRI has long been the hope for reducing that cost: the interpreter otherwise has to drive to the job site and usually requires a minimum 2-hour job to compensate for the trip. VRI allows interpreters to stay home and interpret through the videophone medium. With WebRTC, it is now possible to write a videophone rapidly, in a matter of weeks, without expertise in the video and audio aspects. So, in that, WebRTC offers a pragmatic solution: a private chatroom that is URL based and plugin-free, which is just perfect for an ideal VRI solution. It just takes a browser equipped with WebRTC and sharing the URL. It could not be simpler. And at virtually no cost. The deaf community will finally have a solution that they’ll be happy to use without feeling the burden of paying for extra necessities to make the communication happen.

Why a free VRI service anyway?

The requirements of the signaling server that I chose were very low. It was as simple as using node.js, and node.js is perfect for real time communication. So it just made sense to use that, rent a small server to start with, and let it run on its own. Nothing fancy going on. Just simple JavaScript+CSS+HTML skills to make it happen in a matter of weeks.

I am deaf myself so I feel the pains of the deaf community suffering from the costs of making communication happen. I have been a videophone developer for years and I just can’t emphasize enough how much WebRTC can help our lives in terms of communication with the hearing world. Videophone software can now be a commodity, something on the side, much like writing a website with a live rep widget to better reach out and help the customer. OpenVRI is based on that principle: we offer a one line script to embed into anyone’s website in order to make it a snap to install and use.

I could of course charge for OpenVRI but I don’t see the point. I believe in the open source movement and I want to live it. The technology behind OpenVRI is open sourced so I don’t see the need to make people pay for it.

Being deaf, you decided to tackle WebRTC as a technology. What made you make that choice?

Originally I was expecting to release OpenVRI as a Flash based system with complicated ACD scripts for connecting to the target interpreter at the other end. Flash at its core was also a plugin, so it was not as stable as, say, a function built into the browser to deal with media devices. Maybe it was my lack of programming skills, but I eventually grew a distaste for plugins trying to communicate with the rest of the browser. As I was battling with Flash, I read about WebRTC for several months (I admit I was skeptical). I finally understood the simplicity of building a URL based private chatroom and being able to share it with the target peers, and that was the “eureka” moment.

With the zero cost of running WebRTC and node.js and the short amount of coding to do I figured I could develop a VRI platform in a short time frame.

What challenges are there for you as a deaf person to work with WebRTC?

The audio aspect has been a challenge, as we all can imagine! I was at the mercy of others helping me verify the quality, what works and what doesn’t. There were cross-browser tests, and then various webcam and headset devices to try out. It is not something I can really do myself, even with hearing aid devices; I just could not be sure of what I was hearing.

Noise management is something I had to spend some time on. Even though WebRTC has it built in, it was not working properly, and verifying it by myself was next to impossible.

What excites you about working in WebRTC?

The day Google released VP8 and its WebM project was the day I leaped with joy. I am a strong believer in the open source movement and the sharing of ideas and knowledge. I knew I had to get involved in that project somehow, and when WebRTC came I felt the urge to do something about it. The joy of working with WebRTC is that it is there because a group of people believed it should be free, for someone like me to contribute back to the community. Thank you folks for making WebRTC what it is.

What signaling have you decided to integrate on top of WebRTC?

socket.io. It plays well with real time communication.

Backend. What technologies and architecture are you using there?

Node.js was chosen as the backend. Node.js has all the key functions for real-time communication to occur. The platform doesn’t have any heavy data crunching operations, SQL tables, or high traffic as of now, so our server is small. Until popular demand is met we’ll probably be fine with node.js for a while. I did ask myself if SIP was needed, but we are not going to be reaching out to any endpoints other than our own through URL based chatrooms, so SIP, XMPP and such didn’t make sense when compared to the effort of setting up node.js and socket.io.
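To make the idea of URL based chatrooms with a small relay-style signaling server more concrete, here is a minimal sketch of the relaying logic only. It is not OpenVRI's code: the `/room/<id>` URL layout, the `SignalingRooms` class, and every name in it are assumptions made for illustration, and the socket.io transport is deliberately left out so the logic can run on its own.

```typescript
// Hypothetical sketch: all names and the /room/<id> URL layout are assumptions.

/** A signaling message relayed between peers (offer, answer, or ICE candidate). */
interface SignalMessage {
  type: "offer" | "answer" | "candidate";
  payload: unknown;
}

/** Callback used to deliver a message to one connected peer. */
type Deliver = (fromPeerId: string, message: SignalMessage) => void;

/** Extracts the room id from a URL such as https://example.org/room/abc123. */
function roomIdFromUrl(url: string): string | null {
  const match = new URL(url).pathname.match(/^\/room\/([A-Za-z0-9_-]+)$/);
  return match ? match[1] : null;
}

/** In-memory registry of rooms; each room maps peer ids to delivery callbacks. */
class SignalingRooms {
  private rooms = new Map<string, Map<string, Deliver>>();

  join(roomId: string, peerId: string, deliver: Deliver): void {
    let peers = this.rooms.get(roomId);
    if (!peers) {
      peers = new Map<string, Deliver>();
      this.rooms.set(roomId, peers);
    }
    peers.set(peerId, deliver);
  }

  leave(roomId: string, peerId: string): void {
    const peers = this.rooms.get(roomId);
    if (!peers) return;
    peers.delete(peerId);
    if (peers.size === 0) this.rooms.delete(roomId);
  }

  /** Relays a message to every other peer in the room; returns how many got it. */
  relay(roomId: string, fromPeerId: string, message: SignalMessage): number {
    const peers = this.rooms.get(roomId);
    if (!peers || !peers.has(fromPeerId)) return 0;
    let delivered = 0;
    peers.forEach((deliver, peerId) => {
      if (peerId !== fromPeerId) {
        deliver(fromPeerId, message);
        delivered++;
      }
    });
    return delivered;
  }
}
```

In a real deployment each `deliver` callback would presumably wrap a socket connection, and the server would never need to understand the offers, answers, or candidates it passes along, which is part of why such a backend can stay small.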

The chat you've built into OpenVRI. You decided to have multiple message windows - one for each participant. This is different than the usual paradigm of a single chat box for everyone. What made you choose this option?

I saw other solutions with a single chat box for everyone to type in. Because our approach is real-time texting in the strictest sense, every character the user enters is sent to the other end immediately. So we can imagine how cluttered a single text chat area would grow. This came down to the need for each of us to have our own chat space, so we keep our conversations in separate boxes.

Another reason I decided on real-time texting is that often, when I talk with hearing people using Skype or such, they have to sit and wait for me to hit ‘enter’ before they begin reading my comment. Then they begin typing back, and I pause and wait. Sometimes for minutes nothing is being sent. I thought that was just too slow so I took the quicker approach: real-time texting, with every character sent immediately, for a more real-time conversation.
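The per-character approach with one box per participant can be sketched roughly as follows. This is an illustration under assumptions, not OpenVRI's implementation: the `TextEvent` shape, the `applyTextEvent` function, and the backspace handling are all hypothetical.

```typescript
// Hypothetical sketch: event shape and function names are assumptions.

/** One event sent as the user types: a single character or a backspace. */
type TextEvent =
  | { kind: "char"; participant: string; char: string }
  | { kind: "backspace"; participant: string };

/** Text currently shown in each participant's own box, keyed by participant. */
type ChatBoxes = Record<string, string>;

/** Applies one event and returns updated boxes; other boxes are untouched. */
function applyTextEvent(boxes: ChatBoxes, event: TextEvent): ChatBoxes {
  const current = boxes[event.participant] || "";
  const updated =
    event.kind === "char" ? current + event.char : current.slice(0, -1);
  return { ...boxes, [event.participant]: updated };
}

// Two people typing at the same time: events arrive interleaved.
const interleaved: TextEvent[] = [
  { kind: "char", participant: "nicholas", char: "H" },
  { kind: "char", participant: "guest", char: "O" },
  { kind: "char", participant: "nicholas", char: "i" },
  { kind: "char", participant: "guest", char: "j" },
  { kind: "backspace", participant: "guest" },
  { kind: "char", participant: "guest", char: "k" },
];

const finalBoxes = interleaved.reduce(applyTextEvent, {} as ChatBoxes);
```

With a single shared box, the same interleaved stream would come out as a jumble of both people's characters; keeping a box per participant is what lets simultaneous typing stay readable.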

Where do you see WebRTC going in 2-5 years?

WebRTC is definitely not going to go out of style. I think a browser based solution is the way to go, and that will further the race toward a web based paradigm with a zero-download, plugin-free approach. Applications now running in the browser, ranging from games to enterprise applications to communications, follow this multi-platform paradigm of a browser everywhere, with HTML, CSS, and JavaScript as the languages of choice for client applications. That just makes sense to me.

I think right now a lot of experimentation is going on with how we can use WebRTC and I see most of its use being an add-on to current systems in the telecommunication world. As the experimentation harmonizes, we can see companies providing a simplified WebRTC toolset such as a widget to insert into web pages. Imagine shopping at a retail online store and a customer rep pops up, wants to talk to you face to face. It’s going to be very easy to do that.

People are going to start seeing more of each other virtually.

If you had one piece of advice for those thinking of adopting WebRTC, what would it be?

Read all the basics: the W3C API, tutorials from Google Web Fundamentals, blogs about WebRTC. I started with the blogs, reading about all the opportunities coming from WebRTC and what it can do, then decided to move from Flash to WebRTC, and I have never been happier to have made the switch.

Given the opportunity, what would you change in WebRTC?

I am still relatively new to WebRTC but I would like to have more control over how the ports are being assigned. Right now I don’t think there is an easy way to control that, as I was able to back in my SIP days, when I could control which ports the media channels went through so the firewalls could be set to allow traffic deterministically. That was one big problem I had with RTMFP; there was just no way I could control that, as it randomly picked ports from all given ranges. WebRTC gives a little more control over that with its ICE, STUN, and TURN capabilities, so I think I am fine with that for now.
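One way the TURN part of that control is commonly used is to force all media through a relay on a known host and port, so a client-side firewall only has to allow that single destination. The sketch below builds such a configuration object; the field names follow the standard `RTCConfiguration` dictionary, while the local interfaces, the `relayOnlyConfig` helper, and the `turn.example.org` server with its credentials are placeholders assumed for illustration.

```typescript
// Hypothetical sketch: the helper and the server details are assumptions.
// The interfaces mirror the shape of the browser's RTCConfiguration so the
// snippet type-checks without DOM typings.

interface IceServer {
  urls: string | string[];
  username?: string;
  credential?: string;
}

interface PeerConfig {
  iceServers: IceServer[];
  iceTransportPolicy: "all" | "relay";
}

/** Builds a configuration that only permits relayed (TURN) candidates. */
function relayOnlyConfig(
  turnHost: string,
  turnPort: number,
  username: string,
  credential: string
): PeerConfig {
  return {
    iceServers: [
      {
        urls: `turn:${turnHost}:${turnPort}?transport=udp`,
        username,
        credential,
      },
    ],
    // "relay" tells the browser to use only candidates obtained via TURN.
    iceTransportPolicy: "relay",
  };
}

const exampleConfig = relayOnlyConfig("turn.example.org", 3478, "user", "secret");
// In a browser: const pc = new RTCPeerConnection(exampleConfig);
```

The trade-off is that every call then consumes bandwidth on the TURN server and may add latency, so this is a choice for restrictive networks rather than a default.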

What’s next for OpenVRI?

I have plans to integrate Google’s voice API so hearing people can opt to talk and have their speech translated to text for the deaf to read, and vice versa with text to speech.

Then video and audio recording; I love how the deaf could watch the interpreter during a classroom lecture then go home and watch it over again and again!

What's next for Nicholas?

Continue to experiment with the latest technologies in the world of web development. Real time communication is my main focus for now and I hope that OpenVRI gains momentum in the deaf and interpreting world.

-

The interviews are intended to give different viewpoints than my own – you can read more WebRTC interviews.