WebRTC isn’t only about guest access or even interoperability. It is about the whole infrastructure and service.

My article last month about guest access, the use of WebRTC for it AND how it is now used for “interoperability” between Microsoft and Cisco had its nice share of feedback and comments, both on the article and off of it in private conversations. I think there is another trend that needs to be explained, which in a way is a lot more important. This one is about video conferencing hardware being dominated by HTML and WebRTC. This, in turn, is affecting how modern video infrastructure is also shifting towards WebRTC.

Where video conferencing hardware meets WebRTC

Check out this recent session from Kranky Geek last month. Here, Nissar Mahamood from Lifesize explains how WebRTC got integrated into their latest meeting room systems (=hardware), getting it to 4K resolutions.

It is a good session for anyone who is looking at embedded platforms and systems, or who needs to customize WebRTC for their own needs and use it outside of a web browser.

There are two things in this video that surprised me, for two very different reasons:

- Using GStreamer as the basis of the media engine

- Selecting Node.js as the client application environment

Using GStreamer as the basis of the media engine

I have been seeing more and more developers using GStreamer as part of the technology stack they use with WebRTC. On Linux, your best bet for processing media with open source is either FFmpeg or GStreamer. Due to the real time nature of WebRTC, GStreamer is often the more sought after approach. In the past year or so, it also added WebRTC transport, making it a more viable option.

In many cases, the use of GStreamer is for connecting non-WebRTC content to WebRTC or getting content from WebRTC to restream it elsewhere. Lifesize has done something slightly different with it:

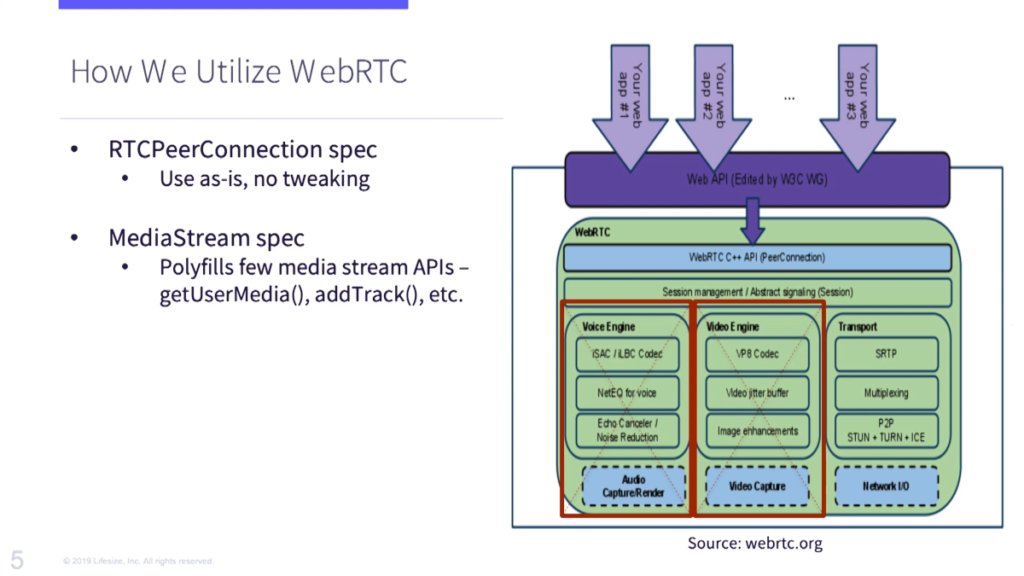

As the illustration above from their Kranky Geek session shows, Lifesize replaced the media engine (voice and video engines) part of WebRTC with their own, which is built on top of GStreamer. They don’t use the WebRTC parts of GStreamer, but rather the “original” parts of it, replacing what’s in WebRTC with their own.

It is surprising, as many would use WebRTC specifically for its media engine implementations and throw away its other components. Why did they take that route? Probably because their existing systems already used a GStreamer pipeline that is heavily customized, or at the very least fine tuned, for their needs. It made more sense to keep that investment than to try and reintroduce it into something like WebRTC.

This approach, of taking the WebRTC source code and modifying it to fit a need isn’t an easy route, but it is one that many are taking. More on that later.

Selecting Node.js as the client application environment

We’ve been so focused on development with WebRTC on browsers and mobile, that embedded non-mobile platforms are usually neglected. These have their own set of frameworks when it comes to WebRTC.

The one selected by Lifesize was Node.js:

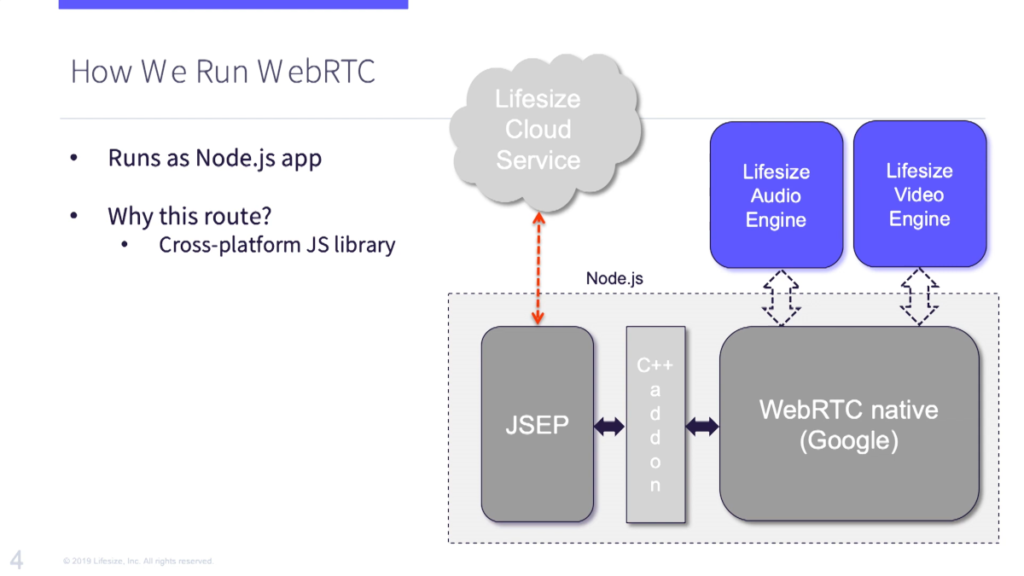

They created a Node.js wrapper that interfaces directly with the WebRTC native C++ “API”, in an effort to create the same JS API they get in the browser for WebRTC.
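One way to picture such a wrapper is a thin class that adapts a callback-style native binding to the promise-based shape of the browser API. The sketch below is only an illustration of that idea: `NativePeerConnection`, `PeerConnectionFacade`, their method signatures and the stub binding are all hypothetical stand-ins, not Lifesize’s code and not the actual WebRTC C++ API.

```typescript
// Hypothetical shape of a native (C++) binding exposed to Node.js.
// Every name in this block is an assumption made for illustration.
interface SessionDescription {
  type: "offer" | "answer";
  sdp: string;
}

type NativeCallback<T> = (err: Error | null, result?: T) => void;

interface NativePeerConnection {
  createOffer(cb: NativeCallback<string>): void;
  setLocalDescription(type: string, sdp: string, cb: NativeCallback<void>): void;
}

// Browser-like facade: application code written against a promise-based
// peer connection API can talk to the native engine through it.
class PeerConnectionFacade {
  localDescription: SessionDescription | null = null;
  private readonly native: NativePeerConnection;

  constructor(native: NativePeerConnection) {
    this.native = native;
  }

  createOffer(): Promise<SessionDescription> {
    return new Promise((resolve, reject) => {
      this.native.createOffer((err, sdp) => {
        if (err || sdp === undefined) {
          reject(err ?? new Error("native createOffer returned no SDP"));
          return;
        }
        resolve({ type: "offer", sdp });
      });
    });
  }

  setLocalDescription(desc: SessionDescription): Promise<void> {
    return new Promise((resolve, reject) => {
      this.native.setLocalDescription(desc.type, desc.sdp, (err) => {
        if (err) {
          reject(err);
          return;
        }
        this.localDescription = desc;
        resolve();
      });
    });
  }
}

// Stub standing in for the real native module so the sketch runs anywhere.
const stubNative: NativePeerConnection = {
  createOffer: (cb) => cb(null, "v=0\r\n"),
  setLocalDescription: (_type, _sdp, cb) => cb(null),
};

// Same call sequence a browser application would use.
async function demo(): Promise<SessionDescription | null> {
  const pc = new PeerConnectionFacade(stubNative);
  const offer = await pc.createOffer();
  await pc.setLocalDescription(offer);
  return pc.localDescription;
}
```

The point of the facade is that the calling code in `demo` looks the same whether it runs against a browser or against a room system’s native media engine.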

Why? Their meeting room systems now use HTML for their visual rendering, and the application logic is driven by JavaScript.

Why JavaScript?

Because of Atwood’s Law 💡

any application that can be written in JavaScript, will eventually be written in JavaScript

Lifesize simply turned their application into one that can be written in JavaScript.

This is doubly true when you factor in the need to support web browsers where you have WebRTC with a JS API on top anyways.

The hidden assertion of WebRTC cloud infrastructure

What I like about the slide above is the cloud with the wording “Lifesize Cloud Service” in it. The fact that Lifesize is connecting to it via Node.js speaks volumes about where we are and where we’re headed versus where we’re coming from.

A few years ago, this cloud service would have been based on H.323 or SIP signaling.

H.323 is now a dead end (something that is hard for me to say or think - I’ve been “doing” H.323 for the better part of my 13 years at RADVISION). SIP is used everywhere, but somehow I don’t see a bright future for it outside of PSTN connectivity (aka SIP Trunking).

Lifesize may or may not be using SIP here (SIP over WebSocket in this case) - due to the nature of their service. What I like about this is how there is a transition from WebRTC at the edge of the network towards WebRTC as the network itself. Let me try and explain -

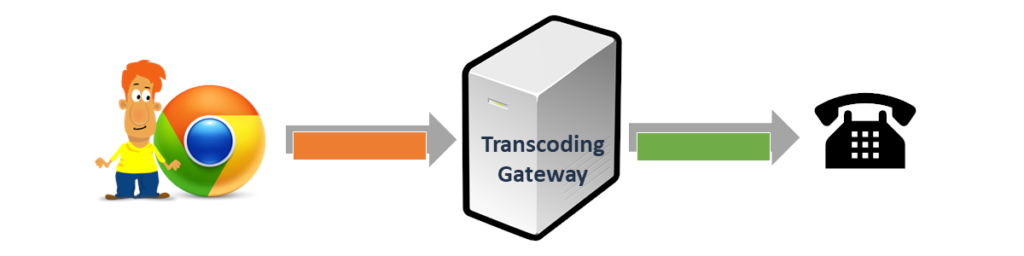

Video conferencing vendors started off looking at WebRTC as a way to get into browsers. Or as a piece of open source code to gut and reuse elsewhere. If one wanted to connect a room system or a software client to a guest (or a user) connecting via WebRTC on a web browser, this would be the approach taken:

(I made up that term transcoding gateway just for this article)

You would interconnect them via a gateway or a media server. Signaling would be translated from one end to the other. Media would be transcoded as well. This, of course, wastes both processing power and bandwidth. It is expensive, and it doesn’t scale.
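A small sketch can illustrate when such a gateway ends up transcoding. It assumes the decision hinges only on whether the two sides share a codec; the `planMediaPath` helper and the codec lists are made up for this example, and a real gateway would also have to consider profiles, resolutions and encryption.

```typescript
type MediaPlan =
  | { mode: "relay"; codec: string }
  | { mode: "transcode"; from: string; to: string };

// Pick the first codec both sides support (in the caller's preference order).
// If there is none, the gateway has to decode and re-encode the media.
function planMediaPath(callerCodecs: string[], calleeCodecs: string[]): MediaPlan {
  if (callerCodecs.length === 0 || calleeCodecs.length === 0) {
    throw new Error("each side must offer at least one codec");
  }
  const calleeSet = new Set(calleeCodecs.map((c) => c.toUpperCase()));
  const shared = callerCodecs.find((c) => calleeSet.has(c.toUpperCase()));
  if (shared !== undefined) {
    return { mode: "relay", codec: shared };
  }
  return { mode: "transcode", from: callerCodecs[0], to: calleeCodecs[0] };
}

// Illustrative codec lists, not taken from any specific product.
const legacyRoomSystem = ["H264"];
const browserVp8Only = ["VP8"];
const browserBoth = ["VP8", "H264"];

// No shared codec: every such call costs a decode plus an encode in the gateway.
const costly = planMediaPath(browserVp8Only, legacyRoomSystem);
// Shared codec: the gateway can pass the media through untouched.
const cheap = planMediaPath(browserBoth, legacyRoomSystem);
```

Every call that lands in the `transcode` branch consumes CPU in the middle of the network, which is where the scaling problem comes from.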

With the growing popularity of WebRTC and the increasing use and demand for browser connectivity to video conferences, there was/is no other way than to rethink the infrastructure to make it fit for purpose - have it understand and work with WebRTC not only at the edge.

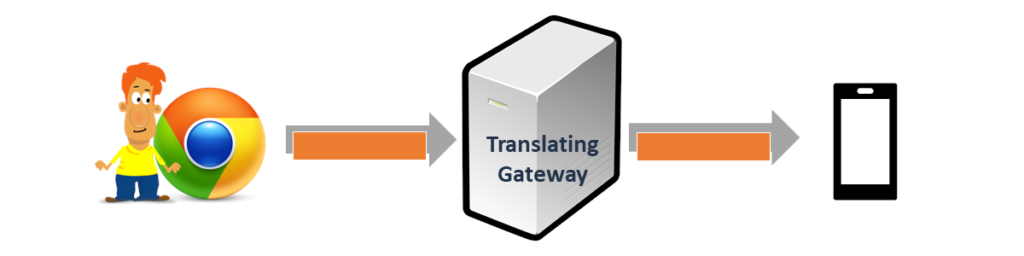

That’s when vendors start trying to fit WebRTC paradigms into their infrastructure:

(guess what? Translating gateway? Also made up just for this article)

Things they do at this stage?

- Add support for SRTP in their infrastructure

- Think of adding BUNDLE and rtcp-mux

- Implement trickle ICE, or at the very least ice-lite

- Try to align the video codecs properly

There are a lot of other minor nuances that need to be added and implemented at this stage. While some of these changes are nagging and painful, others are important. Adding SRTP simply means adding encryption and security - something that is downright mandatory in this day and age.
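Most of the items in that list leave visible traces in the SDP a device produces, so a rough audit can be sketched as a string check. The `inspectSdp` helper and both sample SDPs below are assumptions written for this article (the fingerprint value is a placeholder), and the check is far from a complete SDP parser.

```typescript
interface SdpReadiness {
  secureMedia: boolean; // SAVP/SAVPF profile on audio/video plus a DTLS fingerprint
  bundle: boolean;      // a=group:BUNDLE present
  rtcpMux: boolean;     // a=rtcp-mux present on at least one media section
  trickleIce: boolean;  // a=ice-options lists "trickle"
  iceLite: boolean;     // a=ice-lite present
}

function inspectSdp(sdp: string): SdpReadiness {
  const lines = sdp
    .split(/\r?\n/)
    .map((line) => line.trim())
    .filter((line) => line.length > 0);

  const avLines = lines.filter((line) => /^m=(audio|video) /.test(line));
  const secureProfile =
    avLines.length > 0 && avLines.every((line) => /RTP\/SAVPF?(\s|$)/.test(line));
  const hasFingerprint = lines.some((line) => line.startsWith("a=fingerprint:"));

  const iceOptions = "a=ice-options:";
  const trickleIce = lines.some(
    (line) =>
      line.startsWith(iceOptions) &&
      line.slice(iceOptions.length).split(/\s+/).some((opt) => opt === "trickle"),
  );

  return {
    secureMedia: secureProfile && hasFingerprint,
    bundle: lines.some((line) => line.startsWith("a=group:BUNDLE")),
    rtcpMux: lines.some((line) => line === "a=rtcp-mux"),
    trickleIce,
    iceLite: lines.some((line) => line === "a=ice-lite"),
  };
}

// Made-up, heavily shortened samples for illustration only.
const webrtcStyleSdp = [
  "v=0",
  "a=group:BUNDLE 0 1",
  "a=ice-options:trickle",
  "a=fingerprint:sha-256 00:11:22",
  "m=audio 9 UDP/TLS/RTP/SAVPF 111",
  "a=mid:0",
  "a=rtcp-mux",
  "m=video 9 UDP/TLS/RTP/SAVPF 96",
  "a=mid:1",
  "a=rtcp-mux",
].join("\r\n");

const legacyStyleSdp = ["v=0", "m=audio 5004 RTP/AVP 0", "m=video 5006 RTP/AVP 96"].join("\r\n");

const modernReport = inspectSdp(webrtcStyleSdp);
const legacyReport = inspectSdp(legacyStyleSdp);
```

Running something like this against what existing devices emit gives a quick picture of how far they are from talking to a browser without heavy translation in the middle.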

The illustration also shows where we focused on making the changes in this round - on the devices themselves. We’ve “upgraded” our legacy phone into a smartphone. In reality, the intent here is to make the devices we have in the network WebRTC-aware so they require a lot less translation in the gateway component.

Once a vendor is here, they still have that nagging box in the middle that doesn’t allow direct communication between the browser and the rest of their infrastructure. It is still a pain that needs to be maintained and dealt with. This becomes the last thing to throw out the window.

At this last stage, vendors go “all in” with WebRTC, modifying their equipment and infrastructure to simply communicate with WebRTC directly.

This migration takes place for three main reasons:

- The need to support web browsers with WebRTC

- The cost of interworking across WebRTC and whatever the rest of the vendor’s infrastructure supports

- The popularity of WebRTC and its vibrant ecosystem marks it as the leading technology moving forward

That third reason is why, once a vendor decides to upgrade and modernize its infrastructure, the switch is towards adopting WebRTC wholeheartedly.

This isn’t just Lifesize

Microsoft took the plunge when adding Skype for Web and went all in with Microsoft Teams.

Their hardware devices for Teams simply support web technologies, WebRTC included, in the device itself. In theory, that means they can support any WebRTC infrastructure deployed out there and not only Teams.

We have seen the same with Cisco recently.

BlueJeans and Highfive both live and breathe web technologies.

Forgot to mention you? Put a comment below…

There were other good Kranky Geek sessions around this topic this year and last year. Here are a few of them:

- Discord (2018) - how they use WebRTC and the changes made to it to fit their needs. In their case, that was very large audio conferences

- Microsoft (2018) - an overview of WebRTC for UWP and Hololens

- PION (2019) - an alternative WebRTC stack written in Go, and using WASM while at it

- RingCentral (2019) - server apps with Node.js+WebRTC

- Phantom Auto (2019) - replacing the video encoder with an external hardware one running on an NVIDIA GPU

The winning video conferencing hardware software stack

Here’s what seems to be the winning software stack that gets shoved under the hood of video conferencing hardware these days. It comes in two shapes and sizes:

Linux

- Linux as the underlying operating system

- HTML/JS as the visualization layer (Node.js, Chromium or WebKit as its baseline)

- WebRTC embedded in there as part of the HTML implementation

This gives a vendor a hardware platform where web development is enabled.

Android

- Android as the underlying operating system

- Android app used to implement the device UI and application logic

- WebRTC embedded natively as part of the app

This diverges from the web development approach a bit (while it does allow for it). That said, it opens up room for third party applications to be developed and delivered alongside the main interface.

Linux or Android, which one will it be? Depends on what your requirements are.

A word about Zoom in this context

Why isn’t Zoom using WebRTC properly?

I don’t know. But I can make an educated guess.

It all relates to my previous analysis of Zoom and WebRTC.

Zoom were stuck with the guest access paradigm; taking the first step was too expensive for them for some reason. Placing that interworking element to connect their infrastructure to web enabled Zoom clients didn’t scale well with pure WebRTC. It required video transcoding and probably had a few more hurdles.

At their size, with their business model and with the amount of guest access use they see with the Zoom client on PCs, it just didn’t scale economically. So they took the WASM route that they are following today.

It got them on browsers, with limited quality, but workable. It got them an understanding of WASM and video processing in WASM that not many companies have today.

And it put them at a crossroads regarding how they operate in the future.

Would they:

- Switch towards WebRTC, as most of their competitors have; or

- Continue with WASM, waiting for WebCodecs and WebTransport to progress to a point where they are clearly defined and available in web browsers

If I were the CTO of Zoom, I am not sure which of these routes I’d pick at this point in time. Not an easy decision to make, with a lot to gain and lose in each approach.
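Whichever route is chosen, a web client still has to find out at runtime which of these building blocks the browser actually offers. Below is a minimal feature detection sketch; the `detectMediaRoutes` helper and the route names are my own assumptions for illustration, while `RTCPeerConnection`, `WebAssembly`, `VideoEncoder`, `VideoDecoder` and `WebTransport` are the global names browsers use for these APIs.

```typescript
type MediaRoute = "webrtc" | "wasm+webcodecs+webtransport" | "wasm-only";

// `env` is any object that looks like a browser global scope. Passing it in
// explicitly keeps the function easy to exercise outside of a browser.
function detectMediaRoutes(env: Record<string, unknown>): MediaRoute[] {
  const has = (name: string): boolean => typeof env[name] !== "undefined";
  const routes: MediaRoute[] = [];

  if (has("RTCPeerConnection")) {
    routes.push("webrtc");
  }
  if (has("WebAssembly")) {
    const hasWebCodecs = has("VideoEncoder") && has("VideoDecoder");
    if (hasWebCodecs && has("WebTransport")) {
      // Codecs and transport come from the browser, WASM handles the rest.
      routes.push("wasm+webcodecs+webtransport");
    } else {
      // Codecs have to be compiled to WASM and shipped with the page.
      routes.push("wasm-only");
    }
  }
  return routes;
}

// Fake environments used to exercise the function; real code would pass
// the page's global object instead.
const olderBrowser = { RTCPeerConnection: function () {}, WebAssembly: {} };
const newerBrowser = {
  RTCPeerConnection: function () {},
  WebAssembly: {},
  VideoEncoder: function () {},
  VideoDecoder: function () {},
  WebTransport: function () {},
};

const olderRoutes = detectMediaRoutes(olderBrowser);
const newerRoutes = detectMediaRoutes(newerBrowser);
```

An empty result means none of the routes are available in that environment.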

Need help figuring this out?

This whole domain is challenging. Getting WebRTC to work on devices, around devices, in new or existing infrastructure. Deciding how to define and build a hardware solution.

Contact me if you need help figuring this out.