There are different ways to use WebRTC. Zoom is using WebRTC, just not in the most common way possible today.

Zoom seems to be an interesting topic when it comes to WebRTC. I’ve written about them twice recently (plus a bonus one from webrtcHacks):

- When Jitsi compared Zoom and Jitsi under bandwidth limiting

- That in turn led to webrtcHacks looking at the browser implementation of Zoom

- Just two months back we had the security vulnerability in Zoom

That in itself raised the question of where WebRTC starts and where it ends, since Zoom uses getUserMedia to access the media to begin with.

What was found lately is even more interesting:

Nils (Mozilla) noticed that Zoom is using WebRTC’s data channel, which led webrtcHacks to update that Zoom article.

Interesting times 🙂

What does “use WebRTC” mean?

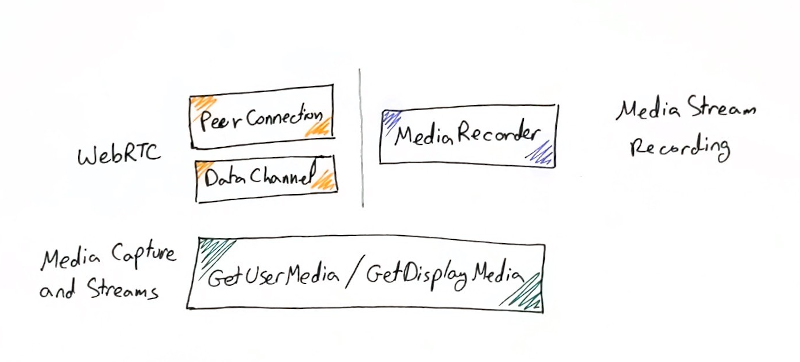

If you go by the specification components in W3C, then the split looks something like this:

From the W3C specifications standpoint, WebRTC is support for Peer Connection and the Data Channel. This encompasses other elements/components such as getStats, SDP negotiation, ICE negotiation, etc.

But at its core, WebRTC is about sending data in real time in peer-to-peer fashion across browsers. Be it voice, video or arbitrary data.

getUserMedia and getDisplayMedia have their own specifications - Media Capture and Streams, and Screen Capture. This is what Zoom has been using out of WebRTC. They give browsers access to cameras, microphones and the screen itself. These are also used for things that have nothing to do with communications - MailChimp and WhatsApp have been using them to take snapshots for a long time now. Others are doing the same as well.
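To make the "capture only" usage concrete, here is a minimal sketch of asking for a camera and microphone without ever touching a peer connection. The helper names (`buildCaptureConstraints`, `captureCamera`) and the 1280x720 resolution are my own assumptions for illustration, not anything taken from Zoom's code. The device object is passed in so the sketch also runs outside a browser.

```typescript
// Constraints in the shape accepted by getUserMedia (a small subset of them).
interface CaptureConstraints {
  audio: boolean;
  video: boolean | { width: { ideal: number }; height: { ideal: number } };
}

// Minimal shape of the part of navigator.mediaDevices used here, declared
// locally so the sketch type-checks without browser typings.
interface CaptureDevices<Result> {
  getUserMedia(constraints: CaptureConstraints): Result;
}

// Hypothetical helper: camera + microphone at a preferred resolution.
function buildCaptureConstraints(width: number, height: number): CaptureConstraints {
  return {
    audio: true,
    video: { width: { ideal: width }, height: { ideal: height } },
  };
}

// Capture media only. No RTCPeerConnection is created anywhere.
// In a browser, pass navigator.mediaDevices; the result is then a
// Promise that resolves to a MediaStream.
function captureCamera<Result>(devices: CaptureDevices<Result>): Result {
  return devices.getUserMedia(buildCaptureConstraints(1280, 720));
}
```

What happens to the resulting stream afterwards (WebAssembly encoding, a snapshot on a canvas, local recording) is entirely up to the application.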

Then there’s the MediaRecorder component, which is defined in MediaStream Recording. Its use? To record media locally. Dubb and Loom use it for example.
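As a small illustration of local recording, here is a sketch of choosing a container/codec string before creating a recorder. `pickRecorderMimeType` is a hypothetical helper of mine. In a browser, the support check would be `MediaRecorder.isTypeSupported`, and the candidate strings below are only examples.

```typescript
// Return the first candidate MIME type the environment says it can record,
// or undefined when none of them is supported.
function pickRecorderMimeType(
  candidates: string[],
  isTypeSupported: (mimeType: string) => boolean,
): string | undefined {
  for (const candidate of candidates) {
    if (isTypeSupported(candidate)) {
      return candidate;
    }
  }
  return undefined;
}

// Browser usage (not executed here):
//   const mimeType = pickRecorderMimeType(
//     ["video/webm;codecs=vp9", "video/webm;codecs=vp8"],
//     (type) => MediaRecorder.isTypeSupported(type),
//   );
//   const recorder = new MediaRecorder(stream, { mimeType });
//   recorder.ondataavailable = (event) => chunks.push(event.data);
//   recorder.start();
```

Nothing here sends media anywhere, which is exactly why it is debatable whether this counts as "WebRTC".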

Is MediaStream Recording WebRTC? Is Media Capture and Streams WebRTC?

I like taking an encompassing view here, considering them part of what WebRTC is in its essence when used in a browser.

Zoom’s route to WebRTC

Back to Zoom.

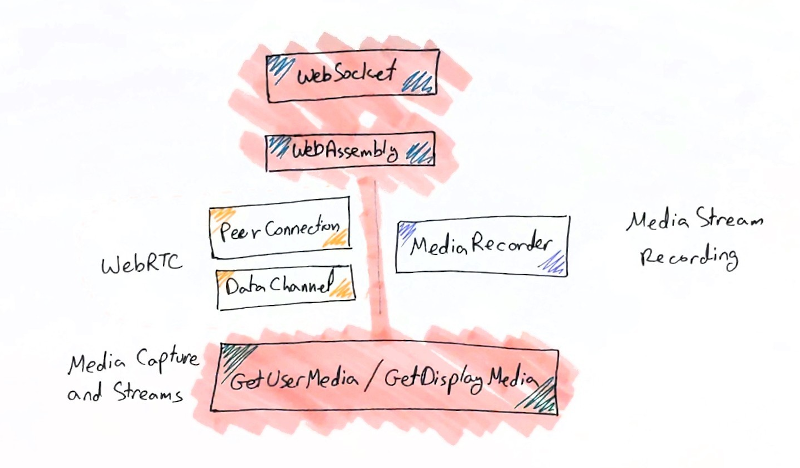

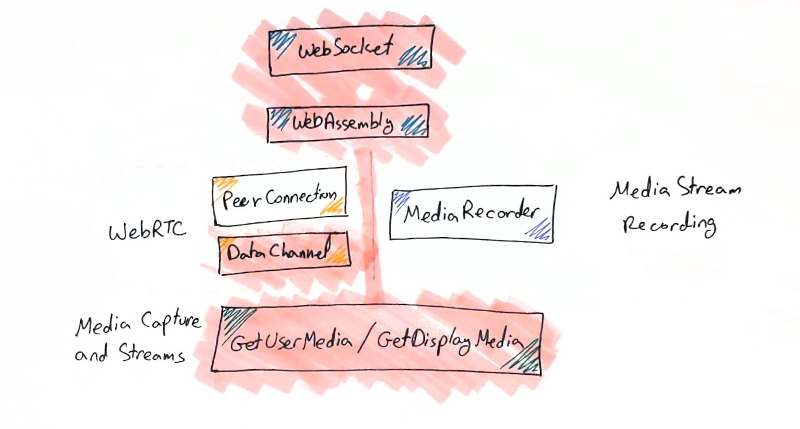

Zoom started by using only getUserMedia for media capture, alongside other browser technologies such as WebAssembly. They got their real time media processing somewhere else.

The next step is what Nils just bumped into - Zoom decided that streaming the media over a WebSocket is nice but not that efficient. Since it ends up over TCP, the performance and media quality are subpar once packet losses kick in. That’s because TCP starts retransmitting the media when it is already too late for a real time task like video calling to use it, ending up with even more congestion and more packet losses.
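A back-of-the-envelope sketch of why the retransmission arrives too late. The model is a deliberate simplification of mine: the sender learns about the loss one round trip after sending and resends immediately, so the retransmitted packet cannot arrive earlier than three one-way delays after the original was sent. The delay and playout budget numbers in the usage note are illustrative assumptions, not measurements.

```typescript
interface LinkModel {
  oneWayDelayMs: number;   // network delay in each direction
  playoutBudgetMs: number; // how long after sending a packet is still usable
}

// Best-case arrival time of a retransmitted packet, in ms after the original
// send: one round trip to detect the loss, plus one more one-way trip.
function retransmitArrivalMs(link: LinkModel): number {
  const roundTripMs = 2 * link.oneWayDelayMs;
  return roundTripMs + link.oneWayDelayMs;
}

// A retransmission only helps if it still makes the playout deadline.
function retransmitIsUseful(link: LinkModel): boolean {
  return retransmitArrivalMs(link) <= link.playoutBudgetMs;
}
```

With an 80 ms one-way delay and a 200 ms budget, the resend lands at 240 ms at best and misses the deadline. With a 20 ms one-way delay it lands at 60 ms and is fine. On top of that, TCP holds back every packet behind the lost one until the resend arrives, which this sketch does not model.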

What is a company to do with such a problem? Find an unreliable connection to send its data on. There are two alternatives today to do that in web browsers:

- WebRTC’s data channel (which uses SCTP today)

- QUIC (HTTP/3), which is still a bit too new

Zoom decided on WebRTC’s data channel in its current SCTP implementation. They haven’t gone for the Google Chrome experiment of a QUIC data channel (which should be rather “safe” considering Google Stadia is said to be using it). And they haven’t decided to use HTTP/3, which I find a bit odd.
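For reference, this is roughly what asking for a non-reliable data channel looks like. In the WebRTC API, `ordered: false` together with `maxRetransmits: 0` tells SCTP not to reorder and not to retransmit. The helper name, the `"media"` label and the locally declared interfaces are my own assumptions for the sketch. I have no visibility into the exact options Zoom passes.

```typescript
// Subset of the data channel options relevant to unreliable delivery.
interface UnreliableChannelOptions {
  ordered: boolean;       // false: deliver messages as they arrive
  maxRetransmits: number; // 0: never retransmit a lost message
}

// Minimal shape of the part of RTCPeerConnection used here, declared locally
// so the sketch type-checks without browser typings.
interface DataChannelFactory<Channel> {
  createDataChannel(label: string, options: UnreliableChannelOptions): Channel;
}

const UNRELIABLE_UNORDERED: UnreliableChannelOptions = {
  ordered: false,
  maxRetransmits: 0,
};

// Hypothetical helper: open a lossy, unordered channel for media packets.
// In a browser, pass an RTCPeerConnection instance as `connection`.
function openMediaChannel<Channel>(
  connection: DataChannelFactory<Channel>,
  label: string,
): Channel {
  return connection.createDataChannel(label, UNRELIABLE_UNORDERED);
}
```

Usage in a browser would be along the lines of `openMediaChannel(new RTCPeerConnection(), "media")`, followed by the usual offer/answer exchange with the server.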

The end result? Zoom is using WebRTC. Somewhat. With a data channel. To handle live video streams, with their previous WebSocket architecture as fallback. And not the peer connection itself. It is really cool, but… don’t try this at home.

Is this the end of the road for WebRTC in Zoom?

I don’t think so.

They still have the installation friction and now all them pesky security experts breathing down their necks looking for vulnerabilities. It won’t hurt their valuation or their revenue, but it will eat into management’s attention.

And frankly? Zoom on a data channel will still be subpar, since doing everything in WebAssembly isn’t optimized enough. At some point, Zoom will need to throw in the towel and join the WebRTC game.

Why?

Because of either VP9 or AV1. Whichever ends up being the breaking point for Zoom.

What will be the next step for Zoom’s adoption of WebRTC?

Zoom has two main things working for it today, as far as I can see:

- It just works

- Quality is great

Both are user/market perception more than they are an objective reality (if there even is such a thing).

1. It just works

“It just works” is about simplicity. It is the reason Zoom started using getUserMedia and later the data channel. Without them, guest access to Zoom would mandate installing their app. At a time when all of their competitors require no installation, that’s a problem. The small friction that is left means that “it just works” is no longer a Zoom advantage. It becomes their hindrance.

2. Quality is great

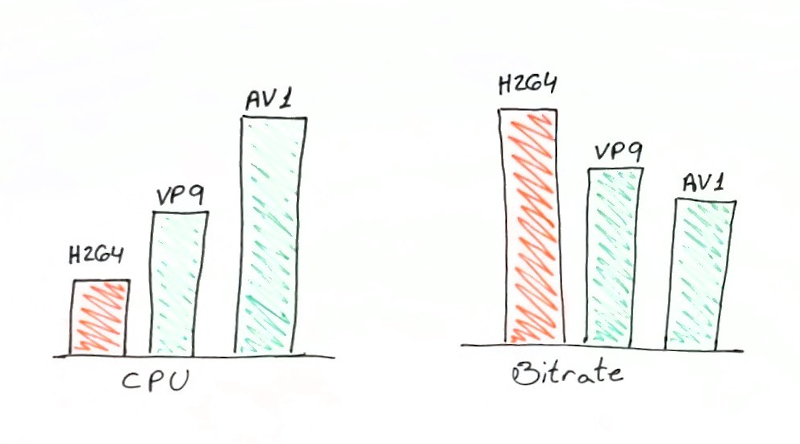

Zoom uses H.264, at least from the analysis done by webrtcHacks (based on packet header inspection).

Since WebRTC has H.264 support, my assumption is that Zoom’s H.264 implementation is proprietary or at the very least, not compliant with the WebRTC one. They might have their own H.264 implementation which they like, value and can’t live without - or at least can’t replace in a single day.

At some point, that implementation is going to lose its luster and its advantages, and that day is rather close now.

H.264 is computationally simpler than VP9 and AV1 - a good thing. But at the same time, VP9 and AV1 offer better quality than H.264 at the same bitrate.

When Zoom’s competitors migrate to using VP9 or AV1, what is Zoom to do?

It can probably adopt VP9 or go for HEVC. It might even decide to use AV1 when the time comes.

But what if it does that without supporting WebRTC? Would running an implementation of a video codec twice or three times as complex as H.264 in WebAssembly make sense? Will it be able to compete against hardware implementations or optimized software implementations that will be found at that point in web browsers?
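To see what the browser's own WebRTC stack already offers, an application can inspect the codec list returned by `RTCRtpSender.getCapabilities("video")`. The sketch below only does the matching part. `hasVideoCodec` and the locally declared interfaces are hypothetical names of mine, and the capabilities object is passed in so the code also runs outside a browser.

```typescript
// Subset of the codec capability entries a browser reports.
interface CodecInfo {
  mimeType: string; // e.g. "video/VP9"
}

interface VideoCapabilities {
  codecs: CodecInfo[];
}

// True when the reported capabilities include the named video codec.
// The browser call may return null, so that case is treated as "no".
function hasVideoCodec(capabilities: VideoCapabilities | null, name: string): boolean {
  if (capabilities === null) {
    return false;
  }
  const wanted = ("video/" + name).toLowerCase();
  return capabilities.codecs.some((codec) => codec.mimeType.toLowerCase() === wanted);
}

// Browser usage (not executed here):
//   const capabilities = RTCRtpSender.getCapabilities("video");
//   const canUseAv1 = hasVideoCodec(capabilities, "AV1");
```

Anything that shows up in that list is an implementation the browser vendor maintains and optimizes. Anything that doesn't has to be shipped and run by the application itself, for example in WebAssembly.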

Without relying on WebRTC, Zoom will be impacted severely in its web browser implementation, and will need to stick to installing an app. At some point, this will no longer be acceptable.

If I were Zoom, I’d start working on a migration plan towards WebRTC. One lasting at least 2-3 years. It is going to be long, complicated, painful and necessary.

Microsoft has taken that route with Skype. Cisco did the same with WebEx.

Both Microsoft and Cisco are probably most of the way there, but not quite there yet.

Zoom should start that route.

The end of proprietary communications

In a way, this marks the end of proprietary communications. At least for the coming 5-10 years.

It is funny how things flip.

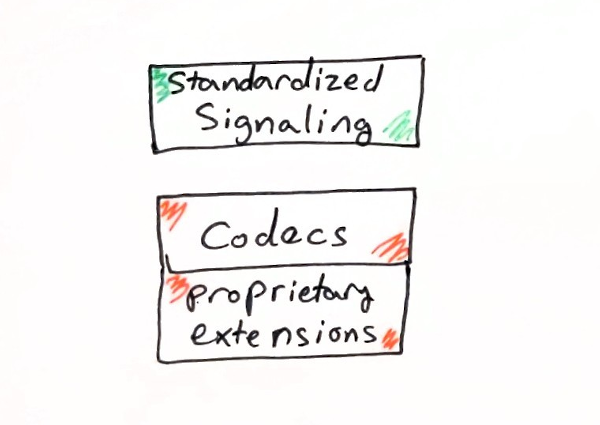

The market used to look like this:

Companies standardized on signaling and supported acceptable standardized codecs. Then they pushed proprietary, non-standard improvements to their codecs.

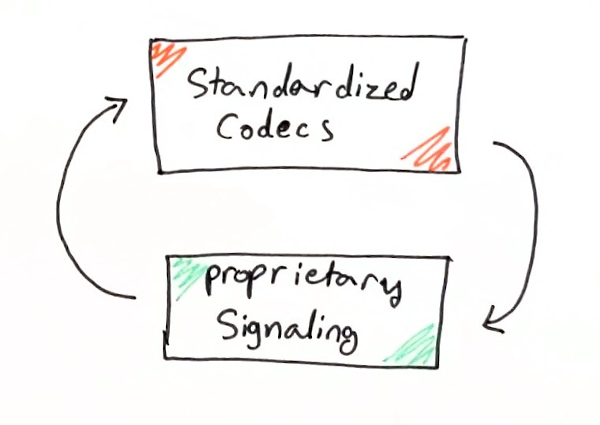

And now it looks like this:

Companies standardize on codecs, using whatever WebRTC has available (and complaining about it), while building their own proprietary signaling and infrastructure to make things work well.

You’ll find additional vendors facing that same challenge:

- Agora.io, who has their own proprietary codecs, claiming superior error resiliency. They just joined AOMedia, becoming one of the companies behind the AV1 video codec.

- Dolby, who has their own proprietary voice codec, offering a 3D spatial experience. It works great, but is limited when it comes to the browser environment.

As WebRTC democratized communications, it also killed much of the competitive advantage that proprietary optimizations at the codec level used to provide.

It isn’t that better codecs don’t exist. It is that using them comes with an impossibly high limitation: they can’t be used inside browsers - and that’s where everyone is these days.