Here's how you handle multipoint for small groups using WebRTC.

This is the third post dealing with multipoint:

- Introduction

- Broadcast

- Small groups (this post)

- Large groups


Let's first define small groups and pin the magical number 4 on them. Why? Because it's a nice round number for a geek like me.

Running a conference with multiple participants isn't easy. I can say that both from participating in quite a few of those and from implementing them. When handling multiple endpoints in a conference without a central server, there are usually two ways to implement it:

- Someone becomes the server to all the rest:

  - All endpoints send their media to that central endpoint

  - That central endpoint mixes all inputs and sends out a single media stream for all

- Each to his own:

  - All endpoints send their media to all other endpoints

  - All endpoints now need to make sense of all incoming media streams

When it came to traditional VoIP systems, the preference was usually the first option. Unfortunately, it isn't that easy to implement with WebRTC (i.e., impossible as far as I can tell), which leaves us with the second option.

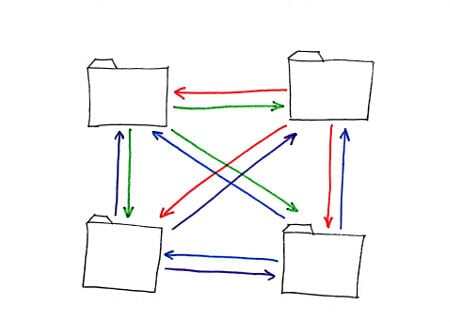

Each to his own, with our magical number 4 will look something like this:

Some assumptions before we continue:

- Each participant sends the exact same stream to all other participants.

- Each participant sends a resolution that is acceptable, so there is no need to scale or process the video on the receiving end other than displaying it.

You might notice that these assumptions should make our lives easier…
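To make the "each to his own" topology concrete, here is a minimal sketch that enumerates who sends media to whom in a full mesh. The names `Flow`, `meshFlows` and `meshConnectionCount` are hypothetical helpers made up for illustration, not part of any WebRTC API. The sketch assumes one bidirectional connection per pair of participants, which is how a single `RTCPeerConnection` would typically be used.

```typescript
// Hypothetical types and helpers for illustration only.
type Flow = { from: string; to: string };

// Every participant sends its stream to every other participant.
function meshFlows(participants: string[]): Flow[] {
  const flows: Flow[] = [];
  for (const from of participants) {
    for (const to of participants) {
      if (from !== to) {
        flows.push({ from, to });
      }
    }
  }
  return flows;
}

// Assumption: one bidirectional connection per unordered pair,
// so n participants need n * (n - 1) / 2 connections in total.
function meshConnectionCount(n: number): number {
  return (n * (n - 1)) / 2;
}

const exampleFlows = meshFlows(["A", "B", "C", "D"]);
console.log(exampleFlows.length);     // 12 one-way media flows
console.log(meshConnectionCount(4));  // 6 pairwise connections
```

With our magical number 4, each participant ends up sending 3 streams and receiving 3 streams.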

Let's analyze what this requires from us:

- Bandwidth. A reasonable amount…

  - If all participants need to send out 500Kbps for their video, then each sender is at 1.5Mbps (one copy to each of the 3 others), which gets us to 6Mbps for the whole conference.

  - If we had a centralized MCU, then we would be in about the same ballpark: 500Kbps towards the MCU from each participant, and then probably 1Mbps or more back to each participant.

  - Going to any larger number of participants will skew these calculations, with a centralized server consuming less bandwidth overall.

- Decoding multiple streams

  - There's this nasty need now to decode multiple video streams

  - I bet you that there is no mobile chipset today that can decode multiple H.264 video streams in hardware

  - And if there is, then I bet you that the driver developed and used for it can't

  - And if there is, then I bet you that the OS on top of it doesn't provide access to that level

  - And if there is, then it is probably a single device. The other 100% of the market has no access to such capabilities

  - So we're down to software video decoding, which is fine, as we are talking VP8 for now

  - But then it requires the browsers to support it. And some of them won't. Probably most of them won't

- CPU and rendering

  - There's a need to deal with the incoming video data from multiple sources, which means aligning the inputs, taking care of the different latencies of the different streams, etc.

  - Non-trivial stuff that can be skipped, but skipping it will affect quality

  - You can assume that the CPU works harder for 4 video streams of 500Kbps than it does for a single video stream of 2Mbps

- Geographic location

  - We are dealing with multiple locations, where each needs to communicate directly with all others

  - A cloud based solution might have multiple locations with faster/better networks between its regional servers

  - This can lead to a better performing solution even with the additional lag of the server
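The bandwidth figures from the first bullet can be reproduced with a few lines of arithmetic. This is only a rough sketch: `meshBandwidth` and `mcuBandwidth` are hypothetical helper names, totals count each stream once, and the MCU case assumes the mixed stream back to each participant stays at 1Mbps no matter how many participants join.

```typescript
// Hypothetical helpers for illustration; all values are in Kbps.
interface Bandwidth {
  perParticipantUp: number;
  perParticipantDown: number;
  total: number;
}

// Full mesh: every participant sends one copy of its stream to each peer.
function meshBandwidth(n: number, streamKbps: number): Bandwidth {
  const up = (n - 1) * streamKbps;
  const down = (n - 1) * streamKbps;
  return { perParticipantUp: up, perParticipantDown: down, total: n * up };
}

// MCU: one stream up to the server, one mixed stream back down.
// Assumption: the mixed stream size does not grow with n.
function mcuBandwidth(n: number, upKbps: number, downKbps: number): Bandwidth {
  return {
    perParticipantUp: upKbps,
    perParticipantDown: downKbps,
    total: n * (upKbps + downKbps),
  };
}

console.log(meshBandwidth(4, 500).total);      // 6000
console.log(mcuBandwidth(4, 500, 1000).total); // 6000
console.log(meshBandwidth(6, 500).total);      // 15000
console.log(mcuBandwidth(6, 500, 1000).total); // 9000
```

At 4 participants both approaches land at 6Mbps, but the mesh total grows with the square of the group size while the MCU total grows linearly, which is why larger groups favor the server.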

When I started this post, I actually wanted to show how multipoint can be done on the client side in WebRTC, but now I don't think that can work that well…

It might get there, but I wouldn't count on it anytime soon. Prepare for a server side solution…


If you know of anyone who does such magic well using WebRTC, send him my way. I REALLY want to understand how he made it happen.