How many WebRTC RTCPeerConnection objects should we be aiming for?

This is something that bothered me in recent weeks during some analysis we've done for a specific customer at testRTC.

That customer built a service and wanted to get a clear answer to the question "how many users in parallel can fit into a single session?"

To get the answer, we had to first help him stabilize his service, which meant digging deeper into the statistics. That was a great opportunity to write an article, which is something I meant to do a few weeks from now. A recent question on Stack Overflow compelled me to do so somewhat earlier than expected - Maximum number of RTCPeerConnection:

I know web browsers have a limit on the amount of simultaneous http requests etc. But is there also a limit on the amount of open RTCPeerConnection's a web page can have?

And somewhat related: RTCPeerConnection allows to send multiple streams over 1 connection. What would be the trade-offs between combining multiple streams in 1 connection or setting up multiple connections (e.g. 1 for each stream)?

The answer I wrote there, slightly modified, is this one:

Not sure about the limit. It was around 256, though I heard it was increased. If you try to open up such peer connections in a tight loop - Chrome will crash. You should also not assume the same limit on all browsers anyway.

Multiple RTCPeerConnection objects are great:

- They are easy to add and remove, so offer a higher degree of flexibility when joining or leaving a group call

- They can be connected to different destinations

That said, they have their own challenges and overheads:

- Each RTCPeerConnection carries its own NAT configuration - so STUN and TURN bindings and traffic take place in parallel across RTCPeerConnection objects even if they are connected to the same entity (an SFU for example). This overhead is one of local resources like memory and CPU as well as network traffic (not a huge overhead, but it is there to deal with)

- They clutter your webrtc-internals view on Chrome with multiple tabs (a matter of taste), and SSRC might have the same values between them, making them a bit harder to trace and debug (again, a matter of taste)

A single RTCPeerConnection object suffers from having to renegotiate it all whenever someone needs to be added to the list (or removed).

I'd like to take a step further here in the explanation and show a bit of the analysis. To that end, I am going to use the following:

- testRTC - the service I'll use to collect the information, visualize and analyze it

- Tokbox' Opentok demo - Tokbox demo, running a multiparty video call, and using a single RTCPeerConnection per user

- Jitsi meet demo/service - Jitsi Videobridge service, running a multiparty video, and using a shared RTCPeerConnection for all users

But first things first. What's the relationship between these multiparty video services and RTCPeerConnection count?

WebRTC RTCPeerConnection and a multiparty video service

While the question on Stack Overflow can relate to many issues (such as P2P CDN technology), the context I want to look at here is video conferencing that uses the SFU model.

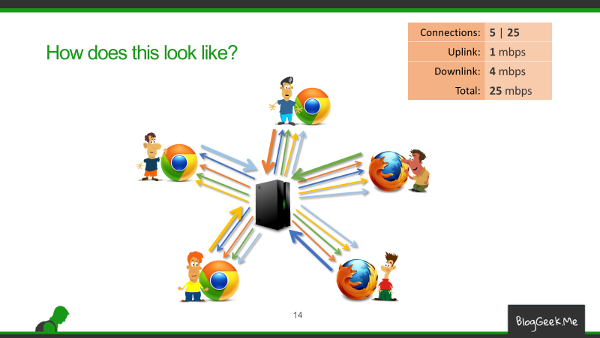

The illustration above shows a video conference between 5 participants. I've "taken the liberty" of picking it up from my Advanced WebRTC Architecture Course.

What happens here is that each participant in the session is sending a single media stream and receiving 4 media streams from the other participants. These media streams all get routed through the SFU - the box in the middle.

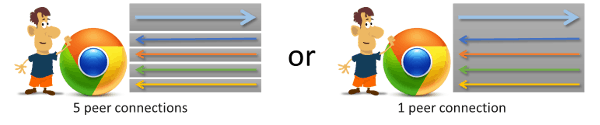

So. Should the SFU box create 4 RTCPeerConnection objects in front of each participant, each such object holding the media of one of the other participants, or should it just cram all media streams into a single RTCPeerConnection in front of each participant?

Let's start from the end: both options will work just fine. But each has its advantages and shortcomings.
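To make the two options concrete, here is a minimal sketch of how many RTCPeerConnection objects a single participant's browser ends up holding under each one. The `Strategy` labels and the function name are made up for this illustration and do not come from any SDK. It assumes the per-user option uses one outgoing connection plus one incoming connection for each other participant.

```typescript
// Labels for the two options discussed above (hypothetical names, not an SDK API).
type Strategy = "per-user" | "shared";

// Number of RTCPeerConnection objects one participant's browser holds
// in an SFU call with `participants` people in total.
function peerConnectionsPerParticipant(
  participants: number,
  strategy: Strategy
): number {
  if (Math.floor(participants) !== participants || participants < 1) {
    throw new RangeError("participants must be a positive integer");
  }
  // per-user: 1 outgoing connection + 1 incoming connection per other participant.
  // shared: all media streams ride on a single connection to the SFU.
  return strategy === "per-user" ? 1 + (participants - 1) : 1;
}

// The 5-way call used throughout this article.
const perUserCount = peerConnectionsPerParticipant(5, "per-user"); // 5
const sharedCount = peerConnectionsPerParticipant(5, "shared"); // 1
```

The gap grows linearly with the size of the session, which is why the choice matters more for large rooms than for a 3-way call.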

Opentok: RTCPeerConnection per user

If you are following the series of articles Fippo wrote with me about how to read webrtc-internals, then you should know a thing or two about its analysis.

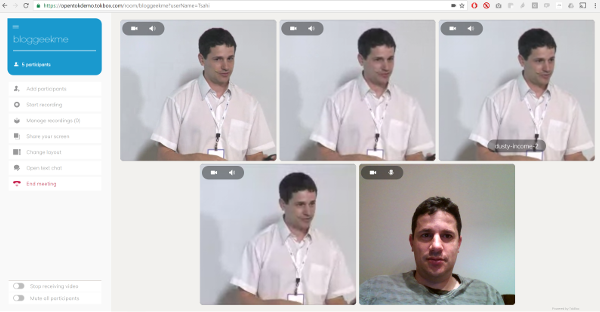

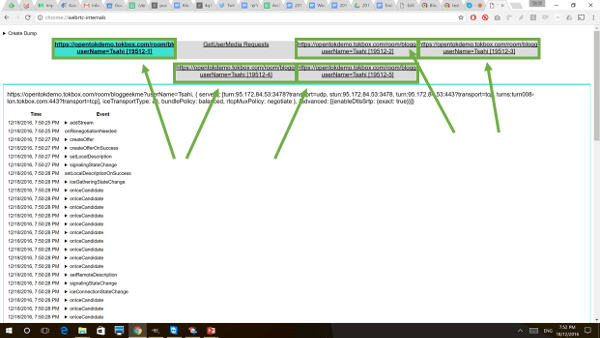

Here's what that session looks like when I join on my own and get testRTC to add the 4 additional participants into the room:

Here's a quick screenshot of the webrtc-internals tab when used in a 5-way video call on the Opentok demo:

One thing that should pop up by now (especially with them green squares I've added) - TokBox' Opentok uses a strategy of one RTCPeerConnection per user.

One of these tabs in the green squares is the outgoing media streams from my own browser while the other four are incoming media streams from the testRTC browser probes that are aggregated and routed through the TokBox SFU.

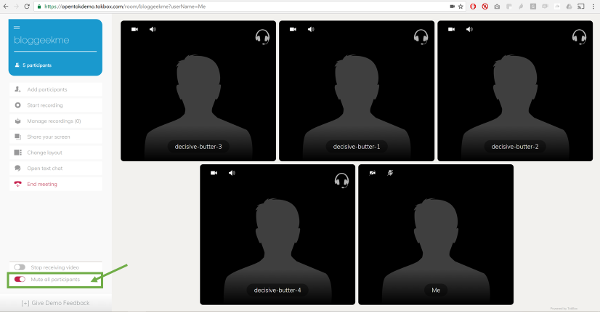

To understand the effect of having open RTCPeerConnections that aren't used, I ran the same test scenario again, but this time I had all participants mute their outgoing media streams. This is what the session looked like:

To achieve that with the Opentok demo, I had to use a combination of the onscreen mute audio button and having all participants mute their video when they join. So I added the following lines to the testRTC script - practically clicking on the relevant video mute button on the UI:

After this most engaging session, I looked at the webrtc-internals dump that testRTC collected for one of the participants.

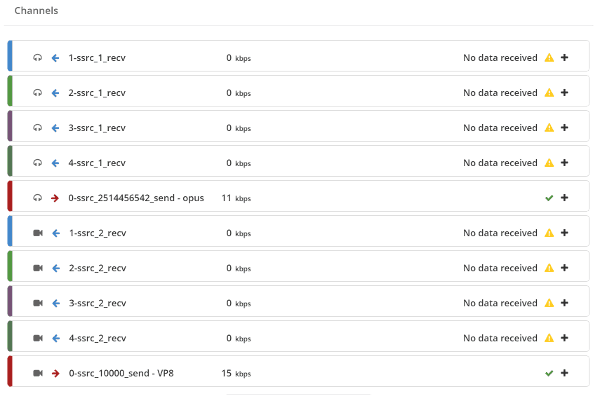

Let's start with what testRTC has to offer immediately by looking at the high level graphs of one of the probes that participated in this session:

- There is no incoming data on the channels

- There is some outgoing media, though quite low when it comes to bitrate

What we will do is ignore the outgoing media and focus only on the incoming one. Remember - this is Opentok, so we have 5 peer connections here: 1 outgoing, 4 incoming.

A few things to note about Opentok:

- Opentok uses BUNDLE and rtcp-mux, so the audio and video share the same connection. This is rather typical of WebRTC services

- Opentok "randomly" picks SSRC values to be numbered 1, 2, ... - probably to make it easy to debug

- Since each stream goes on a different peer connection, there will be one Conn-audio-1-0 in each session - the differences between them will be the indexed SSRC values

For this test run that I did, I had "Conn-audio-1-0 (connection 363-1)" up to "Conn-audio-1-0 (connection 363-5)". The first one is the sender and the rest are our 4 receivers. Since we are interested here in what happens in a muted peer connection, we will look into "Conn-audio-1-0 (connection 363-2)". You can assume the rest are practically the same.

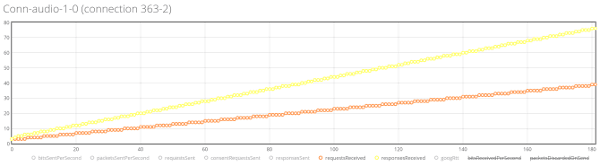

Here's what the testRTC advanced graphs had to show for it:

I removed some of the information to show these two lines - the yellow one showing responsesReceived and the orange one showing requestsReceived. These are STUN-related messages, on a peer connection where there's no real incoming media of any type. That's almost 120 incoming STUN-related messages in total for a span of 3 minutes. As we have 4 such peer connections that are receive-only and silent, we get to roughly 480 incoming STUN-related messages for the 3 minutes of this session - 160 incoming messages a minute - 2-3 incoming messages a second. Multiply the number by 2 so we also include the outgoing STUN messages and you get this nice picture.
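If you want to collect the same counters yourself rather than read them off a graph, here is a rough sketch. It assumes the stats entries expose the standard `candidate-pair` fields `requestsReceived`, `responsesReceived`, `requestsSent` and `responsesSent`; whether a given browser fills all of them in is something to verify. The `CandidatePairLike` and `StunTotals` types and the `sumStunMessages` name are made up for this example.

```typescript
// The subset of candidate-pair stats fields used here. All counters are
// optional because a browser may leave some of them out.
interface CandidatePairLike {
  type: string;
  requestsReceived?: number;
  responsesReceived?: number;
  requestsSent?: number;
  responsesSent?: number;
}

interface StunTotals {
  incoming: number;
  outgoing: number;
}

// Sum the STUN request/response counters over all candidate-pair entries,
// ignoring every other kind of stats entry.
function sumStunMessages(stats: ReadonlyArray<CandidatePairLike>): StunTotals {
  const totals: StunTotals = { incoming: 0, outgoing: 0 };
  for (let i = 0; i < stats.length; i++) {
    const entry = stats[i];
    if (entry.type !== "candidate-pair") continue;
    totals.incoming +=
      (entry.requestsReceived ?? 0) + (entry.responsesReceived ?? 0);
    totals.outgoing += (entry.requestsSent ?? 0) + (entry.responsesSent ?? 0);
  }
  return totals;
}
```

In a browser, the report returned by `getStats()` on a peer connection can be copied into an array with its `forEach` method and then passed to this function. Running it once per peer connection and adding up the results gives the per-session total discussed above.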

There's an overhead for a peer connection. It comes in the form of keeping that peer connection open and running for a rainy day. And that is costing us:

- Network

  - Some small amount of bitrate for STUN messages

  - Maybe some RTCP messages going back and forth for reporting purposes - I wasn't able to see them in these streams, but I bet you'd find them with Wireshark (I just personally hate using that tool. Never liked it)

  - This means we pay extra on the network for maintenance instead of using it for our media

- Processing

  - That's CPU and memory

  - We need to maintain that information somewhere in memory and then work with it at all times

  - Not much, but it adds up the larger the session is going to be

Now, this overhead is low. 2-3 incoming messages a second is something we shouldn't fret about when we get around 50 incoming audio packets a second. But it can add up. I got to notice this when a customer at testRTC wanted to have 50 or more peer connections with only a few of them active (the rest muted). It got kinda crowded. Oh - and it crashed Chrome quite a lot.

Jitsi Videobridge: Shared RTCPeerConnection

Now that we know what a 5-way video call looks like on Opentok, let's see what it looks like with the Jitsi Videobridge.

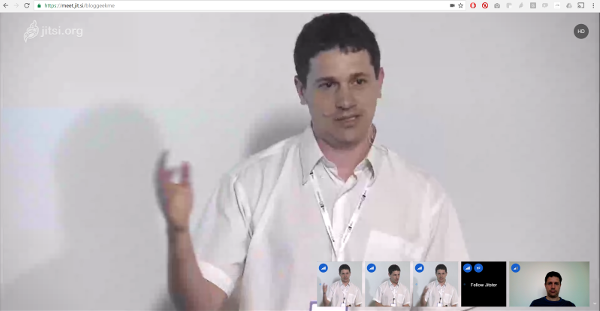

For this, I again "hired" the help of testRTC and got a simple test script to bring 4 additional browsers into a Jitsi meeting room that I joined with my own laptop. The layout is somewhat different and resembles the Google Hangouts layout more:

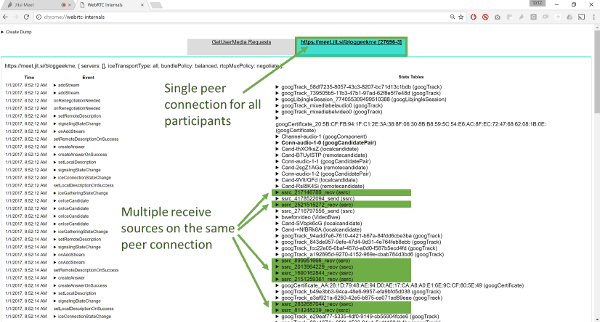

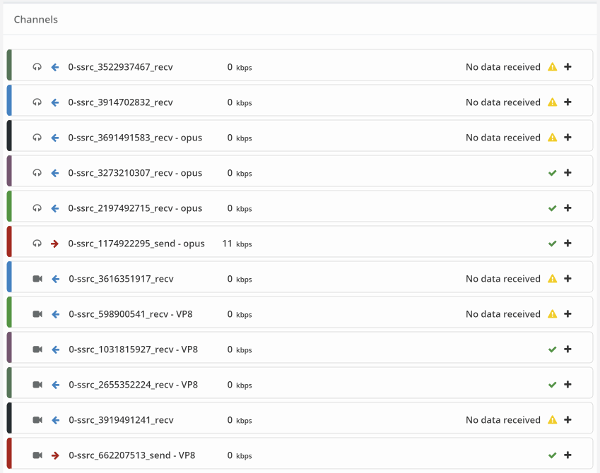

What we are interested in here is actually the peer connections. Here's what we get in webrtc-internals:

A single peer connection for all incoming media channels.

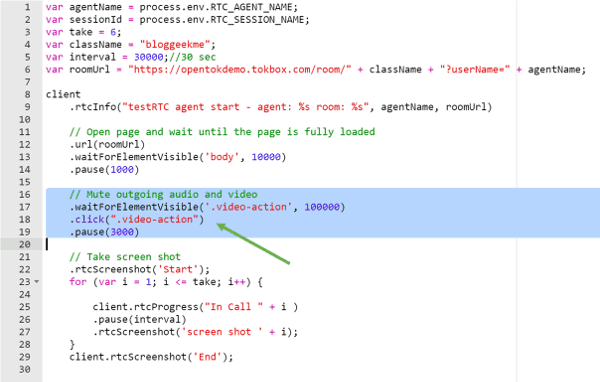

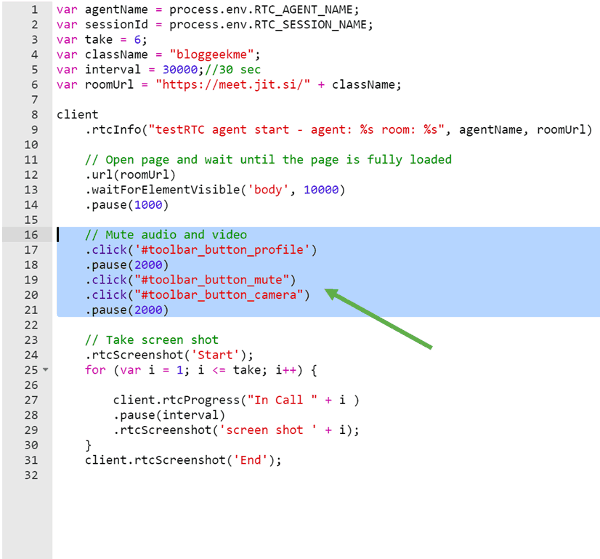

And again, as with the TokBox option - I'll mute the video. For that purpose, I'll need to get the participants to mute their media "voluntarily", which is easy to achieve by a change in the testRTC script:

What I did was just instruct each of my automated testRTC friends that are joining Jitsi to immediately mute their camera and microphone by clicking the relevant on-screen buttons based on their HTML id tags (#toolbar_button_mute and #toolbar_button_camera), causing them to send no media over the network towards the Jitsi Videobridge.

To some extent, we ended up with the same boring user experience as we did with the Opentok demo: a 5-way video call with everyone muted and no one sending any media on the network.

Let's see if we can notice some differences by diving into the webrtc-internals data.

A few things we can see here:

- Jitsi Videobridge has 5 incoming video and audio channels instead of 4. Jitsi reserves and pre-opens an extra channel for future use of screen sharing

- Bitrates are 0, so all is quiet and muted

- Remember that all channels here share a single peer connection

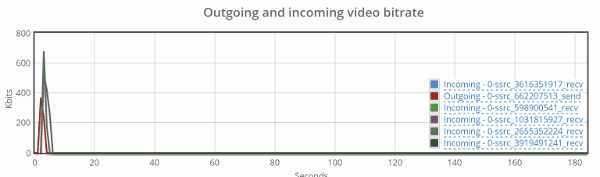

To make sure we've handled this properly, here's a view of the video channels' bitrate values:

There's the obvious initial spike - that's the time it took us to mute the channels at the beginning of the session. Other than that, it is all quiet.

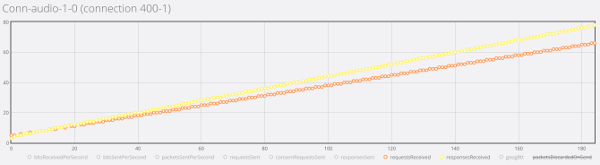

Now here's the thing - when we look at the active connection, it doesn't look much different than the ones we've seen in Opentok:

We end up with 140 incoming messages for the span of 3 minutes - but we don't multiply it by 4 or 5. This happens once for ALL media channels.
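To put the two measurements side by side, here is the arithmetic as a small sketch. The message counts (roughly 120 per silent Opentok connection, 140 on the single Jitsi connection) and the 3 minute duration are the ones read off the graphs in this article; the function name is made up for this example.

```typescript
// Incoming STUN messages per second across all receive-side peer connections.
function stunMessagesPerSecond(
  messagesPerConnection: number,
  connections: number,
  durationSeconds: number
): number {
  if (durationSeconds <= 0) {
    throw new RangeError("durationSeconds must be positive");
  }
  return (messagesPerConnection * connections) / durationSeconds;
}

const sessionSeconds = 3 * 60;

// Opentok: 4 silent receive-only connections, ~120 messages each.
const opentokRate = stunMessagesPerSecond(120, 4, sessionSeconds); // about 2.7

// Jitsi: 1 shared connection, ~140 messages in total.
const jitsiRate = stunMessagesPerSecond(140, 1, sessionSeconds); // about 0.8
```

With these numbers the per-user approach carries a bit more than 3 times the incoming STUN traffic of the shared one in a 5-way call, and the ratio grows with every participant added.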

Shared or per user RTCPeerConnection?

This is a tough question.

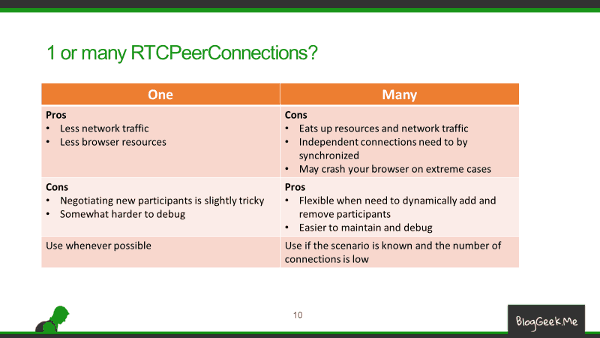

A single RTCPeerConnection means less overhead on the network and the browser resources. But it has its drawbacks. When someone needs to join or leave, there's a need to somehow renegotiate the session - for everyone. And there's also the extra complexity of writing the code itself and debugging it.

With multiple RTCPeerConnection we've got a lot more flexibility, since the sessions are now independent - each encapsulated in its own RTCPeerConnection. On the other hand, there's this overhead we're incurring.

Here's a quick table to summarize the differences:

What's Next?

Here's what we did:

- We selected two seemingly "identical" services

- The free Jitsi Videobridge service and the Opentok demo

- We focused on doing a 5-way video session - the same one in both

- We searched for differences: Opentok had 5 RTCPeerConnections whereas Jitsi had 1 RTCPeerConnection

- We then used testRTC to define the test scripts and run our scenario

- Have 4 testRTC browser probes join the session

- Have them mute themselves

- Have me join as another participant from my own laptop into the session

- Run the scenario and collect the data

- Looked into the statistics to see what happens

- Saw the overhead of the peer connection

I have only scratched the surface here: There are other issues at play - creating an RTCPeerConnection is a traumatic event. When I grew up, I was told connecting TCP is hellish due to its 3-way handshake. RTCPeerConnection is a lot more time consuming and energy consuming than a TCP 3-way handshake and it involves additional players (STUN and TURN servers).