ChatGPT is changing computing and, by extension, how we interact with machines. Here’s how it is going to affect WebRTC.

ChatGPT became the service with the highest growth rate of any internet application, reaching 100 million active users within the first two months of its existence. A few are using it daily. Others are experimenting with it. Many have heard about it. All of us will be affected by it in one way or another.

I’ve been trying to figure out what exactly a “ChatGPT WebRTC” duo means - or in other words - what ChatGPT means for those of us working with and on WebRTC.

Here are my thoughts so far.

Crash course on ChatGPT

Let’s start with a quick look at what ChatGPT really is (in layman’s terms, with a lot of hand waving, and probably more than a few mistakes along the way).

BI, AI and Generative AI

I’ll start with a few slides I cobbled together for a presentation I did for a group of friends who wanted to understand this.

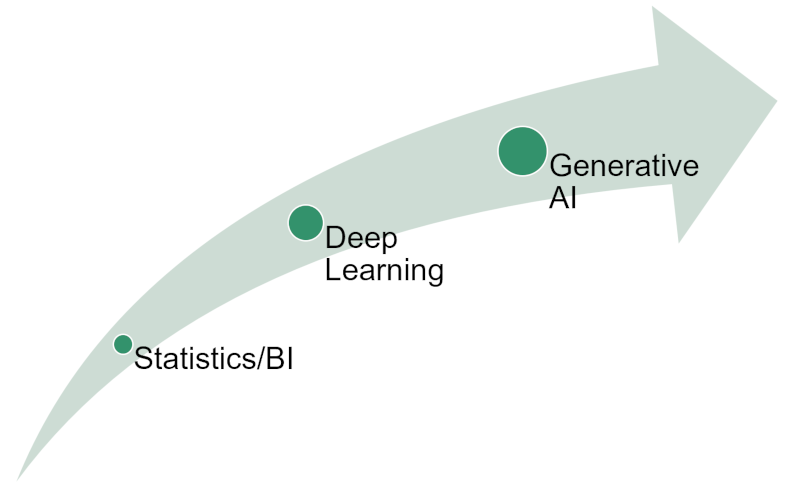

ChatGPT is a product/service that makes use of machine learning. Machine learning is something that has been marketed a lot as AI - Artificial Intelligence. If you look at how this field has evolved, it would be something like the below:

We started with simple statistics - take a few numbers, sum them up, divide by their count and you get an average. You complicate that a bit with a weighted average. Add a bit more statistics on top of it, collect more data points and cobble together a nice BI (Business Intelligence) system.
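To make the “simple statistics” starting point concrete, here is a minimal sketch of a plain and a weighted average. The function names and the sample numbers are made up for illustration.

```python
def mean(values):
    """Plain average: sum the numbers and divide by their count."""
    return sum(values) / len(values)


def weighted_mean(values, weights):
    """Weighted average: each value counts in proportion to its weight."""
    total = sum(v * w for v, w in zip(values, weights))
    return total / sum(weights)


# Hypothetical call durations in minutes
durations = [10, 20, 60]
print(mean(durations))                      # 30.0
print(weighted_mean(durations, [1, 1, 2]))  # 37.5 (the last call counts double)
```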

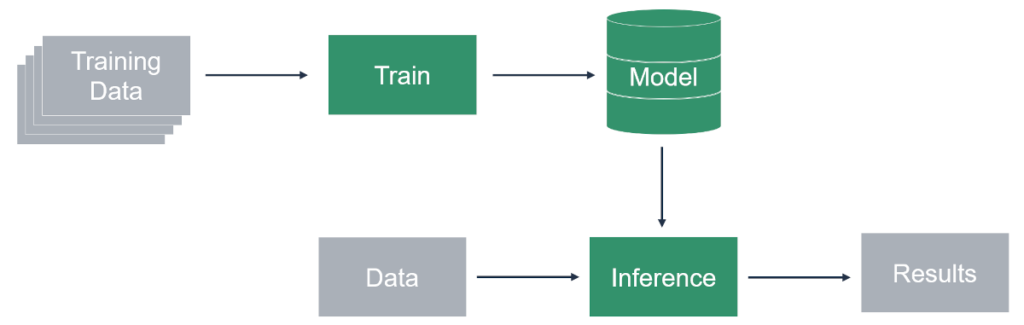

At some point, we started looking at deep learning:

Here, we train a model by using a lot of data points, to a point that the model can infer things about new data given to it. Things like “do you see a dog in this picture?” or “what is the text being said in this audio recording?”.

A lot of three-letter acronyms get thrown around here, such as HMM, ANN, CNN, RNN, GNN…

What deep learning did in the past decade or two was enable machines to describe things - be able to identify objects in images and videos, convert speech to text, etc.

It made it the ultimate classifier, improving the way we search and catalog things.

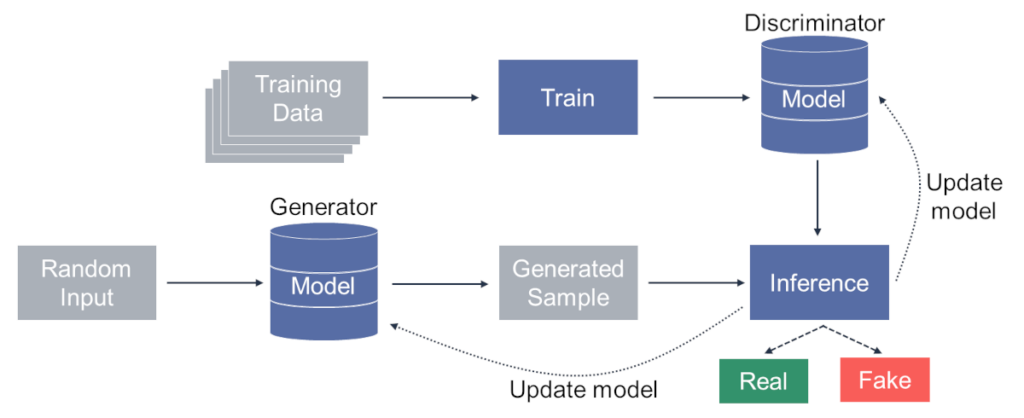

And then came a new field of solutions in the form of Generative AI. Here, machine learning is used to generate new data, as opposed to classifying existing data:

Here we create a random input vector and push it into a generator model. The generator model creates a sample for us - something that *should* result in the type of thing we want created (say a picture of a dog). The generated sample is then passed to the “traditional” inference model, which checks if this is indeed what we wanted to generate. If it isn’t, we iteratively fine-tune the generator until we get to a result that is “real”.
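The generate-and-check loop above is the setup usually called a GAN (generative adversarial network). Below is a toy sketch of it in one dimension, assuming the “real” data is just numbers drawn around 4.0 instead of dog pictures. Everything here is illustrative: the generator is a single line `a * z + b`, the checker is a one-parameter logistic classifier, the gradients are written out by hand, and the checker is trained alongside the generator. A toy like this tends to oscillate rather than settle, so treat it as a picture of the loop and not as a recipe.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(steps=500, batch=32, lr=0.05, real_mean=4.0, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: sample = a * z + b
    w, c = 0.1, 0.0  # checker: P(sample is real) = sigmoid(w * x + c)
    for _ in range(steps):
        zs = [rng.gauss(0, 1) for _ in range(batch)]              # random inputs
        fakes = [a * z + b for z in zs]                           # generated samples
        reals = [rng.gauss(real_mean, 1) for _ in range(batch)]   # "real" data

        # Checker step: minimize -log D(real) - log(1 - D(fake))
        dw = dc = 0.0
        for x_r, x_f in zip(reals, fakes):
            d_r, d_f = sigmoid(w * x_r + c), sigmoid(w * x_f + c)
            dw += (d_r - 1) * x_r + d_f * x_f
            dc += (d_r - 1) + d_f
        w -= lr * dw / batch
        c -= lr * dc / batch

        # Generator step: minimize -log D(fake), i.e. try to fool the checker
        da = db = 0.0
        for z, x_f in zip(zs, fakes):
            d_f = sigmoid(w * x_f + c)
            da += (d_f - 1) * w * z
            db += (d_f - 1) * w
        a -= lr * da / batch
        b -= lr * db / batch
    return a, b, w, c


if __name__ == "__main__":
    a, b, w, c = train_toy_gan()
    print(f"generator: sample = {a:.2f} * z + {b:.2f}")
```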

This is time consuming and resource intensive - but it works rather well for many use cases (like some of the images on this site’s articles that are now generated with the help of Midjourney).

So…

- We started with averages and statistics

- Moved to “deep learning”, where it is hard for us to explain how the algorithms got to the results they did (it isn’t based on simple rules any longer)

- And we then got to a point where AI generates new data

The stellar rise of ChatGPT

The thing is that everything I just explained wouldn’t be nearly as interesting without ChatGPT - a service that came into our lives only recently, becoming the hottest thing out there:

ChatGPT is based on LLMs - Large Language Models. No other service grew as fast as ChatGPT, which is why every business in the world is now trying to figure out if and how ChatGPT will fit into their world and services.

Why ChatGPT and WebRTC are like oil and water

So it raises the question: what can you do with ChatGPT and WebRTC?

Problem is, ChatGPT and WebRTC are like oil and water - they don’t mix that well.

ChatGPT generates data whereas WebRTC enables people to communicate with each other. The “generation” part in WebRTC is taken care of by the humans that interact mostly with each other on it.

On one hand, this makes ChatGPT kinda useless for WebRTC - or at least not that obvious to use for it.

But on the other hand, if someone succeeds in cracking this one properly - that someone will have an innovative and unique thing.

What have people done with ChatGPT and WebRTC so far?

It is interesting to see what people and companies have done with ChatGPT and WebRTC in the last couple of months. Here are a few things that I’ve noticed:

- Arin Sime decided to ask ChatGPT about the future of WebRTC. Nice, but not really something that gets WebRTC and ChatGPT more integrated with one another

- LiveKit shows how to connect ChatGPT to a live WebRTC video call. The result is mindbogglingly good - practically giving voice to ChatGPT

- Twilio showcases a similar thing - connecting ChatGPT to their Programmable Voice service. Slightly less compelling but just as practical

- Then there’s the whole transcription space, where you see ChatGPT and its ilk used for the generation of summaries and action items from the meeting transcription

In LiveKit’s and Twilio’s examples, the concept is to take the audio from the humans in the conversation, convert it using Speech to Text and use the result as the prompt for ChatGPT. The ChatGPT response is then converted using Text to Speech and passed back to the humans in the conversation.
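That round trip can be sketched as a single function. This is only an illustration: `speech_to_text`, `ask_llm` and `text_to_speech` are hypothetical callables standing in for whatever services you pick, not the APIs LiveKit or Twilio actually use, and the WebRTC side (getting audio in and out of the call) is left out entirely.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def voice_bot_turn(
    audio_in: bytes,
    history: List[Message],
    speech_to_text: Callable[[bytes], str],
    ask_llm: Callable[[List[Message]], str],
    text_to_speech: Callable[[str], bytes],
) -> bytes:
    """Handle one conversational turn: audio in, audio out."""
    user_text = speech_to_text(audio_in)                      # 1. Speech to Text
    history.append({"role": "user", "content": user_text})
    reply = ask_llm(history)                                  # 2. prompt the LLM
    history.append({"role": "assistant", "content": reply})
    return text_to_speech(reply)                              # 3. Text to Speech


# Stand-ins so the sketch runs without any external service
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(history: List[Message]) -> str:
    return "You said: " + history[-1]["content"]


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


history: List[Message] = []
audio_out = voice_bot_turn(b"hello", history, fake_stt, fake_llm, fake_tts)
print(audio_out)  # b'You said: hello'
```

Keeping the running `history` around is what lets the bot answer in the context of the whole conversation and not just the last sentence it heard.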

Broadening the scope: Generative AI

ChatGPT is one of many generative AI services. Its focus is on text. Other generative AI solutions deal with images or sound or video or practically any other data that needs to be generated.

I have been using Midjourney for the past several months to help me with the creation of many images in this blog.

Today it seems that in any field where new data or information needs to be created, a generative AI algorithm can be a good place to investigate. And in marketing-speak - AI is overused and a new overhyped term was needed to explain what innovation and cutting edge are - so the word “generative” was added to AI for that purpose.

Fitting Generative AI to the world of RTC

How does one go about connecting generative AI technologies with communications then? The answer to this question isn’t an obvious or simple one. From what I’ve seen, there are 3 main areas where you can make use of generative AI with WebRTC (or just RTC):

- Conversations and bots

- Media compression

- Media processing

Here’s what it means 👇

Conversations and bots

In this area, we either have a conversation with a bot or have a bot “eavesdrop” on a conversation.

The LiveKit and Twilio examples earlier are about striking a conversation with a bot - much like how you’d use ChatGPT’s prompts.

A bot eavesdropping on a conversation can offer assistance throughout a meeting or after the meeting:

- It can try to capture the essence of a session, turning it into a summary

- Help with note taking and writing down action items

- Figure out additional resources to share during the conversation - such as knowledge base items that reflect what a customer is complaining about to a call center agent
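For the summary and action items cases, most of the work is turning the transcript into a prompt. Here is a minimal sketch, assuming the transcript arrives as `(speaker, text)` pairs. The instruction wording and the `max_chars` budget are placeholders, not anything a specific LLM requires.

```python
from typing import List, Tuple

INSTRUCTIONS = (
    "Summarize the meeting transcript below in three sentences, "
    "then list the action items."
)


def build_summary_prompt(
    transcript: List[Tuple[str, str]], max_chars: int = 4000
) -> str:
    """Build an LLM prompt from the most recent lines that fit the budget."""
    lines = [f"{speaker}: {text}" for speaker, text in transcript]
    kept: List[str] = []
    used = 0
    # Walk backwards so the newest lines are kept when the meeting is long
    for line in reversed(lines):
        if used + len(line) + 1 > max_chars:
            break
        kept.append(line)
        used += len(line) + 1
    kept.reverse()
    return INSTRUCTIONS + "\n\n" + "\n".join(kept)


transcript = [
    ("Agent", "How can I help?"),
    ("Customer", "My video freezes."),
]
print(build_summary_prompt(transcript))
```

Dropping the oldest lines is the crudest way to stay within a model’s input limit. Summarizing in chunks is another option, at the cost of more calls.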

As I stated above, this has little to do with WebRTC itself - it takes place elsewhere in the pipeline; and to me, this is mostly an application capability.

Media compression

An interesting domain where AI is starting to be investigated and used is media compression. I’ve written in the past about Lyra, Google’s AI-enabled speech codec. Lyra makes assumptions on how human speech sounds and behaves in order to send less data over the network (effectively compressing it), letting the receiving end figure out and fill in the gaps using machine learning. Can this approach be seen as a case of generative AI? Maybe.

Would it make sense to investigate such approaches in cases where the speakers are known, in order to better compress their audio and even video?

How about the whole super resolution angle? There, you send video at resolutions of WVGA or 720p and then have the decoder scale it up to 1080p or 4K, losing little in the process. We’re generating data out of thin air, though probably not in the “classic” sense of generative AI.
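A bit of arithmetic shows how much of the displayed picture would be “out of thin air”. The sketch below only compares pixel counts, which says nothing about bitrate or perceived quality, and it assumes WVGA means 800x480 (some devices use 854x480).

```python
RESOLUTIONS = {
    "WVGA": (800, 480),    # assumption: 800x480
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def synthesized_fraction(sent: str, shown: str) -> float:
    """Share of displayed pixels that were never sent over the network."""
    sent_w, sent_h = RESOLUTIONS[sent]
    shown_w, shown_h = RESOLUTIONS[shown]
    return 1 - (sent_w * sent_h) / (shown_w * shown_h)


print(round(synthesized_fraction("720p", "1080p"), 2))  # 0.56
print(round(synthesized_fraction("720p", "4K"), 2))     # 0.89
```

So going from 720p to 4K means roughly 8 out of every 9 pixels on screen are the decoder’s guess.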

I’d also argue that if you know the initial raw content was generated using generative AI, there might be a better way in which the data can be compressed and sent at lower bitrates. Is that something worth pursuing or investigating? I don’t know.

Media processing

Similar to how we can have AI-based codecs such as Lyra, we can also use AI algorithms to improve quality - for example, better packet loss concealment that learns the speech patterns in real time and then mimics them when there’s packet loss. This is what Google is doing with their WaveNetEQ, something I mentioned in my WebRTC unbundling article from 2020.
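For a sense of what such a model is improving on, here is a sketch of a classic, non-AI concealment baseline: repeat the last good audio frame, fading it out the longer the loss lasts. This is not WaveNetEQ or anything taken from the WebRTC code base. The frame representation (lists of float samples, `None` for a lost packet) and the `fade` factor are assumptions made for the example.

```python
from typing import List, Optional

Frame = List[float]


def conceal_losses(
    frames: List[Optional[Frame]], frame_len: int, fade: float = 0.5
) -> List[Frame]:
    """Replace lost frames (None) with a faded copy of the last good frame."""
    out: List[Frame] = []
    last_good: Optional[Frame] = None
    gain = 1.0
    for frame in frames:
        if frame is not None:
            last_good = frame
            gain = 1.0                      # a good frame resets the fade
            out.append(list(frame))
        elif last_good is None:
            out.append([0.0] * frame_len)   # nothing to repeat yet: silence
        else:
            gain *= fade                    # each consecutive loss gets quieter
            out.append([s * gain for s in last_good])
    return out


received = [[0.5, -0.5], None, None, [0.25, 0.25]]
print(conceal_losses(received, frame_len=2))
# [[0.5, -0.5], [0.25, -0.25], [0.125, -0.125], [0.25, 0.25]]
```

A learned approach replaces the “repeat and fade” branch with a model that predicts what the speaker would plausibly have said next.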

Here again, the main question is how much of this is generative AI versus simply AI - and does that even matter?

Is the future of WebRTC generative (AI)?

ChatGPT and other generative AI services are growing and evolving rapidly. While WebRTC isn’t directly linked to this trend, it certainly is affected by it:

- Applications will need to figure out how (and why) to incorporate generative AI with WebRTC as part of what they offer

- Algorithms and codecs in WebRTC are evolving with the assistance of AI (generative or otherwise)

Like any other person and business out there, you too should see if and how generative AI affects your own plans.