WebRTC Recording? Definitely server side. But maybe client side.

👉 There’s a more recent article I wrote about WebRTC recording you may want to check out as well

This article is again taken partially from one of the lessons in my WebRTC Architecture Course. There, it is given in greater detail, and is recorded.

Recording is obviously not part of what WebRTC does. WebRTC offers the means to send media, but little more (which is just as it should be). If you want to record, you’ll need to take matters into your own hands.

Generally speaking, there are 3 different mechanisms that can be used to record:

- Server side recording

- Client side recording

- Media forwarding

Let’s review them all and see where that leads us.

#1 – Server side recording of WebRTC

There are 4 types of WebRTC servers. If we want to do server-side recording in WebRTC, then we need to include a media server in our solution that will be used for recording.

This is the technique I usually suggest developers use. Somehow, it fits best in most cases (though not always).

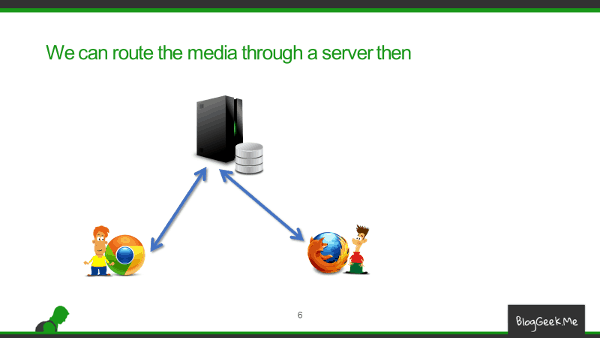

What we do in server-side recording is route our media via a media server instead of directly between the browsers. This isn’t TURN relay – a TURN relay doesn’t get to “see” what’s inside the packets as they are encrypted end-to-end. What we do is terminate the WebRTC session at the server on both sides of the call – route the media via the server and at the same time send the decoded media to post processing and recording.

What do I mean by post processing?

- We might want to mix the inputs from all participants and combine them into a single media file

- We might want to lower the file size that we end up storing

- We might want to change the format (and maybe the codecs?) to prepare it for playback on other types of devices and mediums

There are many things that factor into a recording decision besides just saying “I want to record WebRTC”.

If I had to put pros vs cons for server side media recording in WebRTC, I’d probably get to this kind of a table:

| + | – |
|---|---|
| No change in client-side requirements | Another server in the infrastructure |
| No assumptions on client-side capabilities or behavior | Lots of bandwidth (and processing) |
| Can fit resulting recording to whatever medium and quality level necessary | Now we must route media |

#2 – Client side recording of WebRTC

In many cases, developers will shy away from server-side recording, trying to solve the world’s problems on the client-side. I guess it is partially because many WebRTC developers tend to be JavaScript coders and not full stack developers who know how to run complex backends. After all, putting up a media server comes with its own set of headaches and costs.

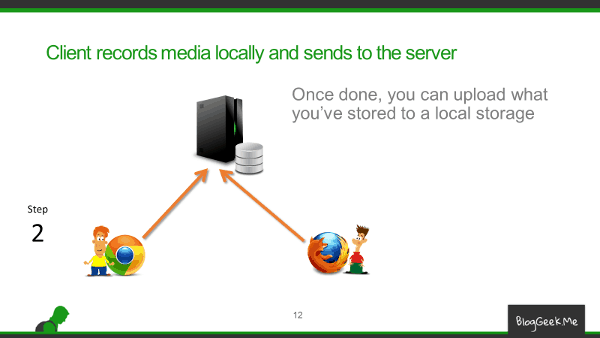

So the basics of client-side recording lean towards the following flow:

We first record stuff locally – WebRTC allows that.

And then we upload what we recorded to the server. Here, we don’t really use WebRTC – just pure file upload.

Great on paper, somewhat less in reality. Why? There are a few interesting challenges when you record locally on a machine you don’t know or control, via a browser:

- Do you even know how much available storage you have to use for the recording? Will it be enough for that full hour session you planned to do for your e-learning service?

- And now that the session is done and you’re uploading a file of a gigabyte or so, is the user just going to sit there and wait without closing the browser or the tab that is uploading the recording?

- Where and what do you record? If both sides record, then how do you synchronize the recordings?
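To get a feel for the storage question, a back-of-the-envelope estimate helps. The sketch below is plain arithmetic. The function name is mine, and the 1.5 Mbps combined audio and video bitrate is an assumption picked for illustration, not something measured.

```typescript
// Rough size of a recording, given an average bitrate and a duration.
// Both inputs are assumptions you need to supply for your own service.
function estimateRecordingBytes(bitrateBps: number, durationSeconds: number): number {
  // bits per second * seconds = bits; divide by 8 to get bytes
  return (bitrateBps * durationSeconds) / 8;
}

// Hypothetical example: a one hour session at 1.5 Mbps (audio + video combined)
const oneHourBytes = estimateRecordingBytes(1_500_000, 60 * 60);
const oneHourMegabytes = oneHourBytes / 1_000_000;
console.log(`${oneHourMegabytes} MB`); // prints "675 MB"
```

At that assumed bitrate, an hour of recording is well over half a gigabyte that has to sit somewhere on the user’s machine and then travel over their uplink.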

It all leads to the fact that at the end of the day, client side recording isn’t something you can use, unless the recording is short (a few minutes) or you have complete control over the browser environment (and even then I would probably not recommend it).

There are things you can do to mitigate some of these issues too. Like upload fragments of the recording every few seconds or minutes throughout the session, or even do it in parallel to the session continuously. But somehow, they tend not to work that well and are quite sensitive.
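As a rough illustration of the fragment upload idea, here is a minimal sketch of the queueing part only. `FragmentUploader` and its `send` callback are hypothetical names I made up for this example; `send` stands for whatever upload you use (an HTTP POST, a WebSocket message). Retries and error handling, which is where most of the sensitivity comes from, are left out on purpose.

```typescript
// Queues recorded fragments and uploads them one at a time, in order.
// `send` is a hypothetical function you provide, e.g. an HTTP POST to your server.
class FragmentUploader<Chunk> {
  private sequence = 0;
  private tail: Promise<void> = Promise.resolve();
  private readonly send: (chunk: Chunk, sequence: number) => Promise<void>;

  constructor(send: (chunk: Chunk, sequence: number) => Promise<void>) {
    this.send = send;
  }

  // Call this for every fragment the recorder hands you.
  push(chunk: Chunk): void {
    const current = this.sequence++;
    // Chain onto the previous upload so fragments reach the server in order.
    // Note: if one upload fails, the ones queued after it are skipped.
    this.tail = this.tail.then(() => this.send(chunk, current));
  }

  // Resolves once everything pushed so far has been uploaded.
  flush(): Promise<void> {
    return this.tail;
  }
}

// In a browser, the wiring would look roughly like this (uploadToServer is hypothetical):
//   const uploader = new FragmentUploader<Blob>(uploadToServer);
//   const recorder = new MediaRecorder(stream);
//   recorder.ondataavailable = (e) => uploader.push(e.data);
//   recorder.start(5000); // ask for a fragment roughly every 5 seconds
```

The sequence number is there so the server can reassemble the fragments and notice gaps. You would still want to `flush()` before letting the user leave the page.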

Want the pros and cons of client side recording? Here you go:

| + | – |
|---|---|
| No need to add a media server to the media flow | Client side logic is complex and quite dependent on the use case |
| | Requires more on the uplink of the user – or more wait time at the end of the session |
| | Need to know client’s device and behavior in advance |

#3 – Media forwarding

This is a lesser known technique – or at least something I haven’t really seen in the wild. It is here because it is a possible alternative.

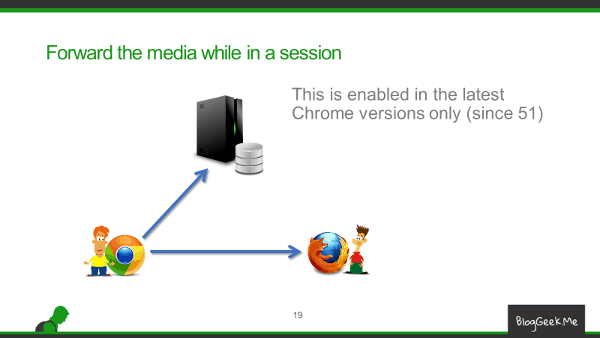

The idea behind this one is that you don’t get to record locally, but you don’t get to route media via a server either.

What is done here is that media is forwarded by one or both participants to a recording server.

The latest releases of Chrome allow forwarding incoming peer connection media, making this possible.
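In code, forwarding boils down to taking each track that arrives on the call’s peer connection and adding it to a second peer connection that goes to the recording server. The sketch below shows only that step. `makeForwarder`, `TrackSink` and `TrackEventLike` are names I invented for the example; the interfaces mirror just the parts of `RTCPeerConnection.addTrack()` and the `track` event that are used. Signaling and negotiation for the second connection are assumed to be handled elsewhere.

```typescript
// The part of RTCPeerConnection we need on the connection to the recording server.
interface TrackSink<Track, Stream> {
  addTrack(track: Track, ...streams: Stream[]): unknown;
}

// The part of the browser's "track" event that we read.
interface TrackEventLike<Track, Stream> {
  track: Track;
  streams: ReadonlyArray<Stream>;
}

// Returns a handler that re-sends every incoming track over `sink`.
function makeForwarder<Track, Stream>(
  sink: TrackSink<Track, Stream>,
): (event: TrackEventLike<Track, Stream>) => void {
  return (event) => {
    sink.addTrack(event.track, ...event.streams);
  };
}

// In a browser, the wiring would look roughly like this:
//   const toRecorder = new RTCPeerConnection(); // connection to the recording server
//   callPc.ontrack = makeForwarder(toRecorder); // callPc is the existing call
//   // ...then negotiate toRecorder with the recording server as usual
```

Keep in mind that the forwarding participant now sends the remote media upstream on top of their own, which is where the extra uplink comes from.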

This is what I can say further about this specific alternative:

| + | – |
|---|---|
| No need to add a media server into the flow – just an additional external recording server | Requires twice the uplink or more |
| | Do you want to be the first to try this technique? |

Things to remember when recording WebRTC

Recording doesn’t end with how you record media.

There’s metadata to treat (record, playback, sync, etc).

And then there’s the playback part – where, how, when, etc.

There are also security issues to deal with and think about – both on the recording end and on the playback side.

These are covered in a bit more detail in the course.

What’s next?

If you are going to record, start by leaning towards server side recording.

Sit down and list all of your requirements for recording, archiving and playback – they are interconnected. Then start finding the solution that will fit your needs.

And if you feel that you still have gaps there, then why not enroll in the Advanced WebRTC Architecture course?

Btw, current media recording functionality in browsers doesn’t work with the RTP stream; it gets data from the renderer and encodes it one more time. I believe this should affect the quality of the result.

Alexey,

Thanks. I believe there’s also a forwarder, where you can take inputs from an incoming peer connection and just forward them elsewhere.

I still don’t recommend taking that route anyway.

Can we not use Canvas element for media forwarding in Firefox?

Not sure as I haven’t tried it out Aswath.

I remember Firefox doing stuff with Canvas elements and being able to encode them and send them out though.

We have been using the forwarding method for audio streams from each participant for some time now. It is quite flexible and allows separation of conference concerns from media acquisition and processing.

We are about to refactor to mix the audio at one primary end, with known performance, and then send a single stream to the server.

Warren,

That’s quite interesting. If you can, please expand on it a bit.

I tried several HTML5 recording solutions on a production site, but without Apple and IE WebRTC support you have more disappointed users than you can imagine. At the moment server recording is your friend, but I’m looking forward to WebRTC implementation in 2017…

Playing around with localStorage, but it does not seem to be working. 🙁

Daniel – thanks for sharing.

What I don’t understand is how server side recording gets around Safari and IE support…

Your articles miss code and small details – this makes them and your course not real

Dan,

The course isn’t meant to help people code. There are enough good references for that floating around out there.

People who come to me usually need to decide what to code – what to pick as the tools they will be using and why they should pick one framework over another. To be able to do these things, exact code and APIs have less value than understanding the bigger picture and how things combine.

The course may well not fit your needs.

Any tutorial for media forwarding? I have some problems with video recording in the client; long sessions gave me some memory leaks.

Look at this sample: https://webrtc.github.io/samples/src/content/peerconnection/multiple-relay/

My general suggestion would be to focus on a server side solution and not forwarding.

Thanks!

Following your suggestion, do you know of any example of a server side solution?

Kurento, Janus and Jitsi all have the ability to also record. Each with its own strengths and limitations.

Hi, thanks for this nice summary. I looked at Kurento, Janus, Jitsi quickly. At a first glance, it seems Kurento is the quickest and easiest to get up and running for reimplementing a WebRTC call that was peer-to-peer, into a WebRTC call that goes through the media server so it can be recorded. I was wondering if you could elaborate on the strengths and weaknesses of the alternatives of Janus and Jitsi.

Marcus,

There are different reasons to select different media servers, and I go over these in detail in my online course.

Two quick things that come to mind here:

1. This is also a religion of sorts. People tend to stick with what they know and like

2. Kurento is great, but it has been practically unchanged for a year now. This makes it a risky project at the moment, although there are some positive signs of movement lately

Has anyone had any luck getting Jitsi recording to work?

You should probably direct this question to one of the Jitsi mailing lists: https://jitsi.org/Development/MailingLists

Is a TURN/STUN server required if WebRTC is used only to capture audio from a host’s microphone and transfer it to a database via a media server?

From what I’ve researched it doesn’t seem to be needed but I have found it really difficult to get concrete information on using WebRTC to record audio.

kt,

It depends.

If you plan on recording on the client side, without really using the PeerConnection, sending the recorded media over a websocket or in an HTTP message – then you have no need for TURN/STUN servers. These are only required if you make use of the PeerConnection and plan to record on the server side.

Tsahi

Great Thanks

Keelin

I would like to know over which peer connection the audio stream flows from client to server. Which parameter on the server side can I look at to identify an audio drop? Sometimes in a session we can’t hear a participant. Please reply.

Nilesh,

I am not sure I understand the question. In most cases, a single peer connection will handle both the audio and the video, which will be sent and received together. In most cases, on the same SRTP connection.

If the issues you are having are connectivity ones, then first look at your TURN configuration and try understanding your webrtc-internals better. This should be of help: https://bloggeek.me/webrtc-internals/

I am facing issues while recording the stream that I am receiving from a WebRTC peer connection. Once I stop recording and try to play it, I only see the last frame. I am using the MediaRecorder API. Recording of the local stream from getUserMedia seems to work fine. Any idea what may be the issue?

Ravi,

Such questions are better addressed in discuss-webrtc (https://groups.google.com/forum/?nomobile=true#!forum/discuss-webrtc). I suggest you publish it there.

How can I record a video call on Android? I looked at many solutions but didn’t find a proper way to record. Please help me.

With Android, I’d do recording on the server side (if using the browser), and that solves your question.

Inside an app, well… you have access to all the data so you’ll need to cobble up a solution for that (and even then, I’d probably go for server-side recording).

You are suggesting server side recording using Jitsi or Kurento, but both solutions have huge performance issues. Basically you are limited to recording one session at a time.

It all depends. First on how you record – separated streams or mixed stream.

And on where you do the processing. In the end, mixed recording is CPU intensive and will be expensive no matter what you do. Handling this on the client side is usually impossible due to a lot of constraints and the fact that you have no control over the environment.

Thank you for the article!

Do I need a TURN server when I use #1 Server side recording?

I do not understand what it is for, since I forward the media stream through a media server just as a TURN server does…

You might need it. Read here: https://bloggeek.me/webrtc-turn/